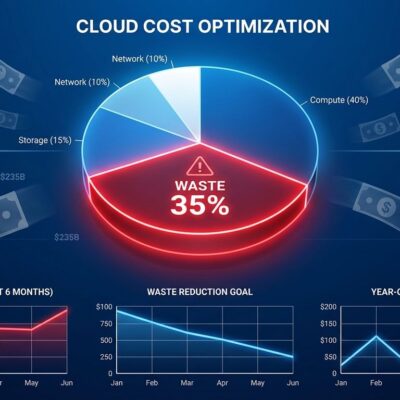

A comprehensive cloud VM benchmark study published February 27, 2026 tested 44 VM families across 7 major providers and exposed a pricing paradox developers need to know: AMD’s EPYC Turin CPU performs “a tier above” its competitors, but AWS, which offers the fastest Turin instances (C8a), charges uncompetitive on-demand prices. Oracle and GCP deliver the same Turin hardware 40-60% cheaper. The independent study by Dimitrios Kechagias lays out what cloud providers don't put side by side: comparative pricing that could save thousands monthly. With cloud costs now the #2 expense for midsize companies (behind only labor) and 30-50% of cloud spend wasted on inefficient resources, this data matters.

AMD Turin Dominates, But AWS Charges Premium for Same Hardware

AMD’s EPYC Turin (9005 series) crushes Intel and previous-gen AMD CPUs across every benchmark tested. The study shows Turin delivering “a tier above anything else” in both single-thread (web server latency) and multi-thread (parallel processing) performance. AWS’s C8a instances get Turin’s full power through a non-SMT configuration, with a “full core per vCPU” instead of 2 threads per core, and they dominate the nginx benchmarks by “almost doubling the score of the second place” finisher.

Here’s the pricing reality check:

| Provider | VM Type | CPU | Price (on-demand) | Performance (relative) | Value (perf per $/hour) |
|---|---|---|---|---|---|
| AWS | C8a.large | AMD Turin | ~$0.17/hour | 100 (baseline) | 588 |
| Oracle | Standard.E6 | AMD Turin | ~$0.068/hour | 98 (near identical) | 1,441 |
| Oracle vs. AWS | n/a | Same CPU | 60% cheaper | -2% performance | 2.4x better value |

Running 10 instances 24/7? At the table’s hourly rates, that’s roughly $745/month saved ($0.102/hour × 10 instances × 730 hours) by switching from AWS to Oracle for near-identical Turin performance, and the gap grows in proportion to fleet size. You’re paying an AWS tax for ecosystem lock-in (Lambda, RDS, S3 integration), not compute performance. If you’re running commodity workloads without deep AWS service dependencies, you’re burning money.
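To sanity-check numbers like these against your own fleet, the value column and the monthly bill can be recomputed from the table’s hourly prices. This is a minimal sketch: the 730-hour month, the instance count, and the function names are assumptions for illustration, and the prices are the approximate on-demand figures from the table.

```python
HOURS_PER_MONTH = 730  # assumption: average month (8,760 hours / 12)


def perf_per_dollar(score: float, hourly_price: float) -> float:
    """Relative benchmark score divided by hourly price (higher is better)."""
    return score / hourly_price


def monthly_cost(hourly_price: float, instances: int) -> float:
    """Cost of running `instances` VMs around the clock for one month."""
    return hourly_price * HOURS_PER_MONTH * instances


# Approximate on-demand figures taken from the table above.
aws = {"price": 0.17, "score": 100}
oracle = {"price": 0.068, "score": 98}

aws_value = perf_per_dollar(aws["score"], aws["price"])
oracle_value = perf_per_dollar(oracle["score"], oracle["price"])

fleet_size = 10  # assumption: change to match your own fleet
savings = monthly_cost(aws["price"], fleet_size) - monthly_cost(oracle["price"], fleet_size)

print(f"AWS value:    {aws_value:.0f}")
print(f"Oracle value: {oracle_value:.0f}")
print(f"Value ratio:  {oracle_value / aws_value:.2f}x")
print(f"Monthly savings for {fleet_size} instances: ${savings:,.2f}")
```

Swap in your real instance sizes and prices; larger instance types raise both bills, so the absolute savings grow while the ratio stays about the same.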

GCP’s n4d Turin instances split the difference: better value than AWS, though not as cheap as Oracle, plus transparent billing with automatic sustained-use discounts. For 1-year reserved commitments, GCP matches Oracle’s value with stronger enterprise support.

ARM Goes Mainstream: Google Axion and Azure Cobalt 100 Prove Production-Ready

2026 marks ARM’s cloud inflection point. Google Axion (custom ARM chip launched 2025) delivers “Genoa-level performance per thread”—matching AMD’s previous-gen flagship x86 CPU. Additionally, Azure Cobalt 100, Microsoft’s first custom ARM silicon, sits between AWS Graviton 3 and 4 for performance while topping charts for 3-year reserved multi-thread value. The ARM skeptics need to update their priors.

The benchmark data shows ARM is competitive across workloads except AVX512-heavy operations (OpenSSL RSA encryption, some compression algorithms), where x86 keeps an advantage. For standard web servers, APIs, data processing, and containerized workloads, Axion and Cobalt 100 deliver equivalent performance at competitive prices. ARM adoption is accelerating as hyperscalers invest in custom silicon to escape x86 licensing costs and power inefficiency.

The study found Axion achieving “impressive” 7-zip decompression results, actually beating some x86 CPUs in specific workloads. Unless you’re running crypto-heavy operations that depend on AVX512 instructions, dismissing ARM as “not ready for production” is outdated thinking from 2023. Test your workload on Axion or Cobalt instances; you’ll likely find performance meets expectations while costs drop.

Spot Instances Deliver 2x Value of 3-Year Reserved Pricing

Developers avoid spot instances thinking they’re “too risky” or “only for batch jobs.” However, the math destroys that assumption. Spot and preemptible pricing offers 50-80% discounts over on-demand, delivering approximately double the price/performance of even 3-year reserved commitments:

- On-demand: $0.20/hour = $1,752 annually

- 3-year reserved: $0.12/hour (40% discount) = $1,051 annually

- Spot instances: $0.05/hour (75% discount) = $438 annually

That’s $1,314/year saved vs on-demand (a 75% reduction), or $613/year vs 3-year reserved (a further 58%). Oracle offers a predictable fixed 50% spot discount, while GCP and Azure provide variable discounts that can exceed 75% depending on demand. One caveat: GCP and Azure give 30-second termination warnings, vs 2 minutes for AWS and Oracle.
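The arithmetic behind those figures is easy to rerun with your own rates. A small sketch, using the illustrative hourly prices from the list above and a 365-day year (the function and variable names are hypothetical):

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours


def annual_cost(hourly_price: float) -> float:
    """Cost of one instance running around the clock for a year."""
    return hourly_price * HOURS_PER_YEAR


def saving_pct(baseline: float, cheaper: float) -> float:
    """Percentage saved by paying `cheaper` instead of `baseline`."""
    return 100 * (baseline - cheaper) / baseline


# Illustrative hourly prices from the list above.
prices = {"on_demand": 0.20, "reserved_3yr": 0.12, "spot": 0.05}
annual = {name: annual_cost(price) for name, price in prices.items()}

for name, cost in annual.items():
    print(f"{name:>12}: ${cost:,.0f}/year")

print(f"spot vs on-demand: {saving_pct(annual['on_demand'], annual['spot']):.0f}% cheaper")
print(f"spot vs reserved:  {saving_pct(annual['reserved_3yr'], annual['spot']):.0f}% cheaper")
```

Real spot prices move with demand, so treat the spot figure as a snapshot rather than a guaranteed rate.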

For fault-tolerant workloads—ML training, CI/CD pipelines, data processing, batch analytics—architecting for spot interruption delivers massive ROI. In fact, the perceived complexity of checkpoint/resume logic pales compared to 75% cost reduction. Build your systems to handle graceful termination and claim savings that dwarf reserved instance commitments.
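Checkpoint/resume can be quite small for simple jobs. The sketch below is one hypothetical shape for it, not a provider-specific recipe: it assumes the termination notice reaches the process as SIGTERM (how the notice is actually delivered differs by provider, and you may need to poll a metadata endpoint instead), and it assumes the work is a list of items that can be processed in order. All names here are made up for illustration.

```python
import json
import os
import signal
import tempfile
from pathlib import Path
from typing import Callable, Sequence

stop_requested = False


def request_stop(signum, frame) -> None:
    """Signal handler: only set a flag so the loop can stop between items."""
    global stop_requested
    stop_requested = True


def load_checkpoint(path: Path) -> dict:
    """Return saved progress, or a fresh state if no checkpoint exists."""
    if path.exists():
        return json.loads(path.read_text())
    return {"next_item": 0, "total": 0}


def save_checkpoint(path: Path, state: dict) -> None:
    """Write to a temp file, then atomically swap it into place."""
    fd, tmp_name = tempfile.mkstemp(dir=path.parent)
    with os.fdopen(fd, "w") as handle:
        json.dump(state, handle)
    os.replace(tmp_name, path)


def run_job(
    path: Path,
    items: Sequence[int],
    should_stop: Callable[[], bool] = lambda: stop_requested,
) -> dict:
    """Sum `items`, resuming from the checkpoint and stopping when asked."""
    state = load_checkpoint(path)
    for index in range(state["next_item"], len(items)):
        if should_stop():
            break  # progress so far is already on disk
        state["total"] += items[index]  # stand-in for real work
        state["next_item"] = index + 1
        save_checkpoint(path, state)
    return state


if __name__ == "__main__":
    # Assumption: the platform or your agent forwards the notice as SIGTERM.
    signal.signal(signal.SIGTERM, request_stop)
    with tempfile.TemporaryDirectory() as workdir:
        print(run_job(Path(workdir) / "checkpoint.json", list(range(1, 101))))
```

In a real deployment the checkpoint would live on storage that survives the instance (object storage or a network volume), since local disk usually disappears with a reclaimed VM.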

Intel’s Emerald Rapids Problem and the Old CPU Pricing Trap

The study uncovered “disappointing performance” from Intel Emerald Rapids compared to original benchmarks, attributing the variance to “boost behavior + node contention” in multi-tenant cloud environments. Shared nodes create a performance lottery: the same instance type can deliver wildly different results depending on how hard neighboring workloads are running. GCP offers a “boost-off” configuration that trades peak burst for a stable baseline, but that sacrifices the theoretical performance you thought you purchased.

Intel Granite Rapids (Emerald Rapids’ successor) shows a “solid step forward” with more consistent behavior, though AMD Turin’s lead in both performance and stability makes the competitive gap stark. If you’re experiencing inconsistent production performance on Emerald Rapids instances, node contention is the likely culprit. Switch to Granite Rapids or AMD Turin for predictability.
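One rough way to check whether an instance is caught in that lottery is to run the same benchmark several times and look at the spread. The sketch below uses the coefficient of variation (standard deviation divided by mean); the sample scores are made up, and the 5% threshold is an arbitrary assumption, not a figure from the study.

```python
import statistics
from typing import Sequence


def coefficient_of_variation(samples: Sequence[float]) -> float:
    """Sample standard deviation as a fraction of the mean."""
    return statistics.stdev(samples) / statistics.mean(samples)


def looks_contended(samples: Sequence[float], threshold: float = 0.05) -> bool:
    """Flag runs whose spread exceeds `threshold` (assumed 5% by default)."""
    return coefficient_of_variation(samples) > threshold


# Hypothetical benchmark scores from repeated runs on two instances.
steady_runs = [1000, 1010, 995, 1005, 990]
noisy_runs = [1000, 820, 1150, 760, 1090]

for label, runs in (("steady", steady_runs), ("noisy", noisy_runs)):
    cv = coefficient_of_variation(runs)
    print(f"{label}: CV={cv:.1%} contended={looks_contended(runs)}")
```

Spread the runs across different times of day and, if possible, across freshly launched instances, since contention depends on which neighbors share the node.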

Counter-intuitively, older CPU generations (Broadwell, Skylake, AMD Rome) often cost more per hour than latest-gen chips. The study explicitly warns: “avoid old CPU generations, as…cloud providers will actually charge you more for less performance.” Power efficiency drives cloud economics: old CPUs burn more electricity per computation, and providers pass those costs through in pricing. That “proven stable” Broadwell instance costs a premium for 5x worse performance than Turin.

Always select latest-gen instances (Turin, Axion, Granite Rapids, Cobalt 100) for optimal price/performance. The “if it ain’t broke” mentality burns money in cloud environments where efficiency equals pricing.

Key Takeaways

- AMD EPYC Turin performs “a tier above” competitors, but Oracle sells near-identical Turin hardware for about 60% less than AWS on-demand (AWS costs roughly 2.5x as much); you’re paying for ecosystem lock-in, not compute speed

- Google Axion and Azure Cobalt 100 prove ARM ready for production workloads in 2026, with Axion matching AMD Genoa per thread, except in AVX512-heavy crypto/compression where x86 retains an advantage

- Spot instances deliver 75% savings over on-demand and 2x better value than 3-year reserved pricing for fault-tolerant workloads—architect for graceful termination, claim massive ROI

- Intel Emerald Rapids suffers production performance variance from node contention; upgrade to Granite Rapids or AMD Turin for consistent results without lottery dynamics

- Newer CPU generations (Turin, Axion) often cost less per hour than old chips (Broadwell, Skylake) due to power efficiency pricing—latest-gen delivers better performance AND lower cost