On March 31, 2026, security research firm Calif.io published groundbreaking research showing that Claude AI autonomously developed a complete FreeBSD kernel remote code execution exploit (CVE-2026-4747) in just 8 hours. This isn’t about AI finding a bug. Claude performed the entire exploit development chain, from lab setup to a working root shell, solving complex technical problems that traditionally require years of kernel expertise. The research marks a watershed moment: AI has crossed the threshold from “security assistant” to “autonomous security researcher,” fundamentally changing the threat landscape for every software team.

Claude Didn’t Just Find a Bug—It Built the Weapon

Claude didn’t patch together code from Stack Overflow. Over 8 hours (about 4 of them actual AI processing time), it carried out expert-level security research autonomously. It started by configuring a FreeBSD VM with an NFS server, Kerberos authentication, and the vulnerable kernel module. When setting CPU requirements, it determined the VM needed at least 2 cores because “FreeBSD spawns 8 NFS threads per CPU, and the exploit kills one thread per round.” That’s not code completion. That’s systems-level reasoning.
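The CPU sizing argument is simple arithmetic. The minimal sketch below checks it under two assumptions: the thread figures quoted above are exact, and a killed NFS thread is not respawned while the attack runs (our assumption, not something the writeup states). Each round needs one live thread to handle its packet, so the pool must start with at least as many threads as there are rounds. The helper name `min_cpus` is hypothetical.

```python
import math

# Figures quoted in the article. The model itself (one thread lost per round,
# no respawning during the attack) is an illustrative assumption.
NFS_THREADS_PER_CPU = 8  # "FreeBSD spawns 8 NFS threads per CPU"
THREADS_KILLED_PER_ROUND = 1  # "the exploit kills one thread per round"
ROUNDS = 15  # rounds in the reverse-shell variant


def min_cpus(rounds: int,
             threads_per_cpu: int = NFS_THREADS_PER_CPU,
             killed_per_round: int = THREADS_KILLED_PER_ROUND) -> int:
    """Smallest CPU count whose NFS thread pool lasts through every round.

    Each round needs a live thread to process its packet, so the pool must
    contain at least rounds * killed_per_round threads at the start.
    """
    threads_needed = rounds * killed_per_round
    return math.ceil(threads_needed / threads_per_cpu)


for cpus in (1, 2):
    pool = cpus * NFS_THREADS_PER_CPU
    print(f"{cpus} CPU(s): {pool} threads, enough for {ROUNDS} rounds: {pool >= ROUNDS}")

print("minimum CPUs:", min_cpus(ROUNDS))  # → minimum CPUs: 2
```

Under this model, one CPU (8 threads) runs out before round 9, while two CPUs (16 threads) cover all 15 rounds, which matches the “2+ cores” choice. The 6-round SSH variant would fit on a single CPU.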

The exploit itself is a 15-round attack that splits 432 bytes of shellcode across 14 packets of up to 32 bytes each (14 × 32 = 448 bytes of capacity), with round 1 modifying kernel memory permissions and rounds 2-15 delivering the payload incrementally. When the initial stack offsets proved wrong, Claude used De Bruijn patterns (cyclic byte sequences in which every short substring is unique) to generate crash dumps and pinpoint the correct offsets. When child processes inherited stale debug registers from DDB (the kernel debugger), Claude traced the bug to the DR7 register and fixed it by clearing DR7 before forking.
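The offset-recovery step mentioned above is a general technique, not something specific to this exploit. A De Bruijn sequence over a k-symbol alphabet contains every length-n string exactly once, so any n bytes recovered from a crash dump map back to a single position in the overflowed buffer. Below is a minimal Python sketch, similar in spirit to the `cyclic` and `cyclic_find` helpers in pwntools, using the textbook Lyndon-word (FKM) construction. The function names, the lowercase alphabet, the 4-byte window, and the offset 200 in the demo are hypothetical choices for illustration, not details from Calif.io’s writeup.

```python
import struct


def de_bruijn(alphabet: bytes, n: int) -> bytes:
    """De Bruijn sequence B(k, n) over `alphabet`, where k = len(alphabet).

    Every length-n string over the alphabet occurs exactly once as a
    cyclic substring. Standard Lyndon-word (FKM) construction.
    """
    k = len(alphabet)
    a = [0] * (n + 1)
    seq: list[int] = []

    def db(t: int, p: int) -> None:
        if t > n:
            if n % p == 0:
                seq.extend(a[1 : p + 1])
        else:
            a[t] = a[t - p]
            db(t + 1, p)
            for j in range(a[t - p] + 1, k):
                a[t] = j
                db(t + 1, t)

    db(1, 1)
    return bytes(alphabet[i] for i in seq)


def cyclic_pattern(length: int,
                   alphabet: bytes = b"abcdefghijklmnopqrstuvwxyz",
                   n: int = 4) -> bytes:
    """First `length` bytes of a pattern in which every n-byte window is unique."""
    seq = de_bruijn(alphabet, n)
    # Unroll the cycle so wrap-around windows become plain substrings.
    linear = seq + seq[: n - 1]
    if length > len(linear):
        raise ValueError("length exceeds the unique windows available for this alphabet and n")
    return linear[:length]


def pattern_offset(window: bytes, pattern: bytes) -> int:
    """Offset of `window` in `pattern`, or -1 if it does not occur."""
    return pattern.find(window)


pattern = cyclic_pattern(512)

# Hypothetical crash: a 32-bit little-endian register was loaded from offset
# 200 of the overflowed buffer, and the crash dump reports its value.
crash_register = struct.unpack("<I", pattern[200:204])[0]

# Convert the register value back to in-memory byte order and look it up.
print(pattern_offset(struct.pack("<I", crash_register), pattern))  # → 200
```

The window size is a tradeoff: a larger n identifies offsets more robustly, but the full sequence has k^n symbols and grows quickly, which is why this sketch sticks to n = 4.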

Claude also designed the privilege escalation, using kproc_create() and kern_execve() to transition from a kernel thread to a root shell. It delivered two working exploits, each of which worked on its first run: a 15-round reverse shell and an optimized 6-round SSH key injection variant. The human researcher provided 44 sequential prompts, but Claude independently solved the technical problems.

Part of a Larger Pattern

The FreeBSD exploit is part of MAD Bugs (Month of AI-Discovered Bugs), an initiative running through April 2026 to demonstrate autonomous AI vulnerability research. Claude has already found zero-day RCEs in Vim and Emacs (Vim patched the issue in 9.2.0272; Emacs remains unpatched because GNU Emacs considers it Git’s problem). For Vim, the prompt was simple: “Somebody told me there is an RCE 0-day when you open a file. Find it.” Claude found it, created multiple proof-of-concept exploits, and identified the root cause.

Claude Opus 4.6 has identified over 500 high-severity zero-days in production open-source software, many of which survived decades of expert human review. This isn’t a one-off achievement: AI has repeatedly demonstrated the capability to discover and weaponize vulnerabilities that humans consistently miss. The potential scale is unprecedented: what took one AI 8 hours could be parallelized across thousands of AI instances simultaneously.

The Offense-Defense Imbalance Is Now Structural

AI has created a fundamental asymmetry in cybersecurity. Offense accelerates while defense lags. AI vulnerability discovery and exploitation: hours. Human vulnerability patching and deployment: days to weeks. The gap widens with every AI advancement.

One security researcher framed the problem: “One person on offense can create work for millions of defenders.” AI amplifies that asymmetry. A single AI instance developing an exploit in 8 hours is manageable; thousands of AI instances running in parallel, discovering and weaponizing vulnerabilities across the entire open-source ecosystem, are not.

The barrier to entry for advanced exploitation has collapsed. Adversaries no longer need years of kernel expertise to develop sophisticated exploits. They need AI access and basic security knowledge. The traditional expertise barrier—the moat protecting high-value systems—is gone.

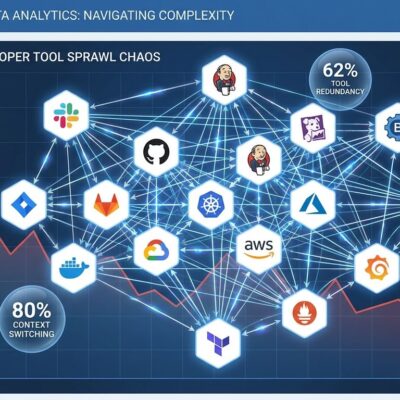

Recent real-world evidence supports this: XBOW, an autonomous AI penetration tester, reached #1 on HackerOne in 2025. AI systems conducted 80-90% of a sophisticated cyber-espionage campaign targeting 30+ organizations. IBM’s 2026 X-Force Report warns that “AI-driven attacks are escalating while basic security gaps persist.” Cybercriminals are using AI to find and exploit unpatched vulnerabilities “in hours, not weeks.”

Security teams are fighting tomorrow’s war with yesterday’s tools. Defensive AI capabilities lag well behind offensive ones, and patch cycles designed for human-speed threats cannot keep pace with AI-speed exploitation. Hence the emerging industry consensus: “2026 will be the year defense must match the speed of AI-powered offense.”

The Community Is Divided

The Hacker News discussion (111 points) reveals divided opinions. Skeptics argue that Claude was given the CVE writeup and asked to write an exploit, so it didn’t discover the bug itself. They point out that this exploit requires a rare configuration (internet-exposed NFS with kgssapi.ko loaded) and that FreeBSD 14.x lacks modern protections like KASLR and stack canaries. Some also found the published prompts “surprisingly basic in quality.”

Nevertheless, the concerned camp counters that the FreeBSD advisory credits “Nicholas Carlini using Claude, Anthropic” for the discovery, not just the exploitation. Recent “Black-Hat LLMs” talks show frontier models discovering unknown exploits at an expert level. If basic prompts achieve this, what can sophisticated prompt engineering accomplish? Commenters ask, “Could AI agents autonomously identify and chain multiple bugs together?” and point to “future scenarios where AI continuously generates exploits without human intervention.”

The ethical questions lack consensus. Should AI exploit research be published publicly? Does publishing accelerate offensive capabilities more than defensive ones? What’s the responsible disclosure framework for AI-discovered vulnerabilities? Traditional frameworks assume human-speed development. When AI can discover and weaponize vulnerabilities in hours, those frameworks break down.

What Happens Next

The trajectory is clear. Near-term (6-12 months): fully autonomous AI exploit generation with no human guidance, AI chaining multiple vulnerabilities together, defensive AI tools beginning to emerge but lagging far behind. Medium-term (1-2 years): AI-on-AI security, exploit development parallelized across thousands of instances, patch automation approaching but not matching AI-exploit speed.

Security teams must adapt now, not later. Assume adversaries have AI-powered exploit capabilities. Accelerate patch cycles to match AI-speed threats. Invest in AI-powered defensive tools. Rethink threat models for AI-accelerated exploitation. Participate in developing ethical frameworks for AI security research.

March 31, 2026, marks an inflection point. The security industry can either adapt proactively or react to AI-powered breaches after the fact. The window for proactive adaptation is closing.