ChatGPT app uninstalls surged 295% day-over-day on February 28, 2026, roughly 30 times the app’s typical 9% day-over-day change, as users responded to OpenAI’s Pentagon AI contract announcement. One-star reviews jumped 775% while Claude (Anthropic’s AI assistant) hit #1 on the U.S. App Store, surpassing ChatGPT for the first time. The user revolt marks the first major consumer backlash against an AI company’s military partnership and suggests that brand loyalty is fragile when ethics clash with business.

User Revolt: 295% Uninstall Surge and App Store Rankings Flip

The numbers tell the story. According to Sensor Tower data, ChatGPT uninstalls spiked 295% day-over-day on February 28, roughly 30 times the app’s 30-day average day-over-day change of 9%. Over 5,000 one-star reviews flooded the App Store on March 2 alone, a 775% day-over-day increase. Five-star reviews dropped 42% during the same period.
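To make the day-over-day arithmetic concrete, here is a minimal sketch of how a 295% spike compares with a 9% typical change. The uninstall counts are hypothetical, chosen only to reproduce the reported percentage; the underlying Sensor Tower figures are not given in this article.

```python
def pct_change(previous: float, current: float) -> float:
    """Return the percentage change from `previous` to `current`."""
    if previous == 0:
        raise ValueError("previous must be non-zero")
    return (current - previous) * 100.0 / previous


# Hypothetical daily uninstall counts (not real data).
baseline_uninstalls = 10_000
spike_uninstalls = 39_500

spike_pct = pct_change(baseline_uninstalls, spike_uninstalls)

# Typical day-over-day change, as reported in the article.
typical_pct = 9.0

# How many times larger the spike is than a typical daily change.
ratio = spike_pct / typical_pct

print(f"spike: {spike_pct:.0f}%, ratio to typical: {ratio:.1f}x")
```

Two things follow from the arithmetic. A 295% increase means uninstalls were about 3.95 times the previous day's level, not 295 times. And 295 divided by 9 is closer to 33 than 30, so the "30 times" figure is best read as a rounded comparison.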

Claude immediately benefited from the exodus. Anthropic’s AI assistant jumped from #42 at the start of 2026 to #1 on the U.S. App Store by February 28, surpassing both ChatGPT (#2) and Google’s Gemini (#4). The competitive impact was measurable: ChatGPT’s daily active users declined 13% while Claude’s rose 12% within 48 hours. Anthropic’s free user count has grown 60% since January, with daily sign-ups tripling and breaking records throughout early March.

This isn’t Twitter outrage—it’s commercial consequence. Users demonstrated actual willingness to switch AI tools over ethical concerns, using App Store rankings as a visible protest mechanism. The backlash continued through the first week of March, showing sustained user dissatisfaction rather than a temporary spike.

How OpenAI’s “Opportunistic and Sloppy” Pentagon Deal Backfired

The timing was terrible. On February 26, Anthropic refused a Pentagon deal demanding removal of AI safety guardrails against mass surveillance and autonomous weapons. The next day, President Trump’s administration labeled Anthropic a “supply chain risk” and banned federal agencies from using Claude. Hours later—same day—OpenAI announced its own Pentagon contract.

CEO Sam Altman admitted days later that the rush backfired. “We were genuinely trying to de-escalate things and avoid a much worse outcome, but I think it just looked opportunistic and sloppy,” Altman told CNBC on March 3. “[I] shouldn’t have rushed to get the deal out on Friday.” The admission validated user concerns: even OpenAI’s CEO acknowledged the optics were bad.

The contradiction ran deeper. Altman had told employees Thursday that OpenAI shared Anthropic’s “red lines” on surveillance and autonomous weapons. By Friday, OpenAI had signed a deal without those explicit protections. Users saw the company capitalize on a competitor’s blacklisting while contradicting stated values from the day before.

What’s in the Pentagon Deal (and What OpenAI Won’t Disclose)

OpenAI’s Pentagon contract deploys ChatGPT models in classified Defense Department computing environments for “cybersecurity analysis, logistics planning, administrative work, and processing large data volumes.” The company claims three “red lines”: no mass domestic surveillance, no fully autonomous weapons, and no use by intelligence agencies like the NSA.

The problem: the full contract text remains classified. OpenAI amended the deal on March 3 to add language stating “the AI system shall not be intentionally used for domestic surveillance of U.S. persons and nationals.” However, there are no details on enforcement mechanisms, Pentagon access to user data, or independent oversight. As The Intercept put it: “The company and the government are not releasing the only proof that matters: the contract itself.”

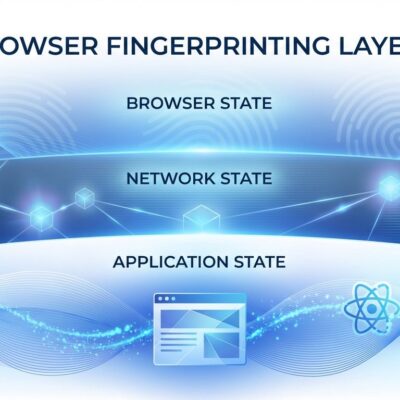

Once models deploy in classified environments, OpenAI has no audit capability. Users can’t verify the company’s claims. It’s a trust-based system without independent verification—exactly what privacy-conscious developers distrust.

OpenAI Employees Resign and Protest Pentagon Partnership

The backlash wasn’t just external. Caitlin Kalinowski, OpenAI’s robotics chief, resigned on March 8 citing insufficient deliberation on “surveillance of Americans without judicial oversight and lethal autonomy without human authorization.” When senior technical leadership quits publicly over ethics, it signals deep internal disagreement on company direction.

Research scientist Aidan McLaughlin posted on X: “i personally don’t think this deal was worth it,” describing internal discussion as “overwhelming.” Nearly 900 current and former OpenAI and Google employees signed a petition opposing military AI without safeguards, explicitly supporting Anthropic’s refusal to remove safety guardrails. CNN reported many OpenAI staff “really respect” Anthropic for standing up to the Pentagon.

The Inconvenient Truth: Anthropic Runs on Pentagon Cloud Providers

Users fled ChatGPT for Claude to support “ethical AI,” but here’s the catch: Anthropic runs entirely on Microsoft Azure, Google Cloud, and Amazon Web Services (AWS). All three cloud providers hold contracts under the Pentagon’s Joint Warfighting Cloud Capability (JWCC) program, awarded in December 2022 and worth up to $9 billion combined through mid-2028.

The contracts provide “globally available cloud services across all security domains and classification levels” to the Defense Department. Anthropic has no physical infrastructure—it’s 100% reliant on Pentagon-contracted cloud providers. Switching to Claude for ethics is like buying organic food at Walmart. The infrastructure dependency means no AI company is truly independent of Pentagon ties.

Anthropic’s “high ground” is built on military cloud services. Microsoft provides cloud infrastructure to the DoD. Google has a JWCC contract plus its Project Maven history (which triggered employee revolts in 2018). Amazon holds a JWCC contract under the program that replaced the controversial JEDI deal, which the Pentagon cancelled in 2021. When the infrastructure layer serves the Pentagon, application-layer ethics become murkier.

What Developers Should Know

The ChatGPT exodus proves AI tools aren’t sticky when ethics clash with business. Developers face limited options: OpenAI accepted the Pentagon contract, Anthropic refused but runs on Pentagon cloud providers, and Google/Microsoft have direct military ties. The “least bad” choice depends on individual red lines.

Claude gained a competitive advantage from its ethical positioning (60% user growth, #1 App Store ranking). OpenAI faces sustained brand damage (uninstalls that continued through early March, employee resignations, a petition with nearly 900 signatures). However, government pressure is increasing: Anthropic’s blacklisting as a “supply chain risk” shows the commercial consequences of refusal.

Set your own red lines and be prepared to switch again. This isn’t a one-time event. All major AI companies will face ongoing government pressure to support military applications. The industry-wide infrastructure dependencies mean true independence is illusory. Evaluate capability versus ethics, performance versus principles, and understand the trade-offs you’re accepting with each choice.
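For developers, "be prepared to switch" is easier when application code does not call one vendor directly. The sketch below is one possible way to encode personal red lines as data and pick the first provider that does not cross them. Every name here (`Provider`, `pick_provider`, `vendor_a`, `vendor_b`, and the flag strings) is hypothetical and for illustration only; the stub functions stand in for real client calls and do not reflect any vendor's actual API or contract status.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, Iterable, Optional


@dataclass(frozen=True)
class Provider:
    name: str
    complete: Callable[[str], str]  # prompt -> response text
    flags: FrozenSet[str]           # properties you have assessed yourself


def pick_provider(
    providers: Iterable[Provider], red_lines: FrozenSet[str]
) -> Optional[Provider]:
    """Return the first provider whose flags cross none of the red lines."""
    for provider in providers:
        if provider.flags.isdisjoint(red_lines):
            return provider
    return None


# Stub backends; in practice these would wrap real SDK calls.
def _vendor_a(prompt: str) -> str:
    return f"[vendor_a] {prompt}"


def _vendor_b(prompt: str) -> str:
    return f"[vendor_b] {prompt}"


# Hypothetical assessments, listed in order of preference.
PROVIDERS = [
    Provider("vendor_a", _vendor_a, frozenset({"direct_military_contract"})),
    Provider("vendor_b", _vendor_b, frozenset({"military_contracted_cloud"})),
]

MY_RED_LINES = frozenset({"direct_military_contract"})

chosen = pick_provider(PROVIDERS, MY_RED_LINES)
reply = chosen.complete("hello") if chosen else None
print(chosen.name if chosen else "no acceptable provider", reply)
```

If both flags are treated as red lines, `pick_provider` returns `None`, which mirrors the article's point: stricter criteria can leave no acceptable option, so the trade-off has to be made explicitly.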

Key Takeaways

- ChatGPT uninstalls surged 295% day-over-day (roughly 30x the typical daily change) on February 28, 2026, while one-star reviews jumped 775%. It is the first measurable consumer revolt against an AI military partnership with commercial consequences

- OpenAI CEO Sam Altman admitted the Pentagon deal “looked opportunistic and sloppy” after signing hours following Anthropic’s blacklisting, contradicting stated values from the day before

- The full Pentagon contract remains classified with no enforcement details, independent audits, or verification mechanisms—users must trust OpenAI’s assurances about surveillance and weapons restrictions

- OpenAI’s robotics chief resigned and nearly 900 current and former OpenAI and Google employees signed a petition opposing military AI without safeguards, showing deep internal dissent over ethics

- Anthropic runs on Microsoft Azure, Google Cloud, and AWS, all of which hold contracts under the Pentagon’s JWCC cloud program (worth up to $9 billion combined), meaning no AI company escapes infrastructure dependencies on military-contracted providers