Apple ships iOS 26.4 with Gemini-powered Siri this week, bringing advanced AI assistant capabilities to a base of roughly 2.2 billion active Apple devices, arguably the largest AI assistant rollout to date. But the real story isn’t the technology. It’s the partnership: Apple chose Google over OpenAI and Anthropic after talks with those two collapsed over pricing and competitive concerns. For a company built on vertical integration and privacy, outsourcing Siri’s “brain” to its biggest rival is a strategic admission that Apple can’t build competitive AI alone.

Why Google Won the $1B Deal

Apple didn’t want this partnership. The company initially resisted third-party AI models, preferring to build everything in-house. But after Siri’s repeated failures to keep pace with Google Assistant and Alexa, Apple quietly opened negotiations with three AI providers: Anthropic, OpenAI, and Google.

Anthropic priced itself out immediately. According to sources familiar with the negotiations, the company demanded “several billion dollars annually over multiple years,” and Apple walked away. OpenAI refused for a different reason: competition. OpenAI, like Apple, is developing its own hardware products, a strategic conflict that made a partnership untenable.

Google won by default—and by offering exactly what Apple needed. At $1 billion per year, Google’s terms were more reasonable than Anthropic’s demands. More importantly, Google brought mobile optimization expertise from years of Android development and willingness to white-label Gemini with no visible Google branding. A favorable court ruling on Apple’s Google Search deal made the partnership legally viable. Apple got its AI upgrade without admitting defeat publicly. Google got distribution to 2.2 billion devices.

The Privacy Paradox

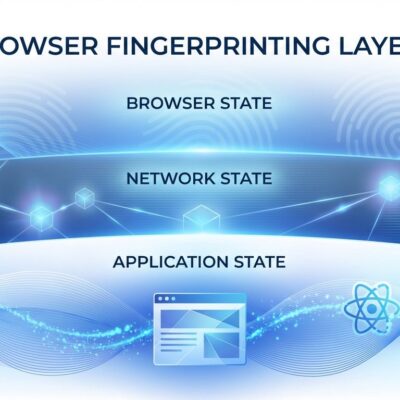

Apple markets iOS 26.4 as “privacy-first AI.” The architecture is impressive: a three-tier processing model routes 60% of queries on-device via Apple’s Neural Engine, 30% to Apple’s Private Cloud Compute servers with end-to-end encryption, and only 10% to Google’s infrastructure for complex reasoning tasks. Private Cloud Compute features stateless computation, no data retention, and verifiable transparency through open-source tools.

But here’s the problem: 10% of queries still leave Apple’s control. They’re “anonymized” before reaching Google’s servers, but they’re processed outside Apple’s privacy architecture. Under Google’s consumer privacy terms, the Gemini app collects conversations, lets human reviewers read a sample of them, and keeps reviewed conversations for up to three years, even after a user deletes their activity. For a company that built its brand on keeping user data private, routing any share of queries through a competitor’s infrastructure undermines that promise.

The tradeoff is capability versus privacy. Simple queries like “set a timer” process on-device. Complex multi-step tasks like “find yesterday’s beach photo and send to Suzanne” require Gemini’s reasoning on Google Cloud. Users gain functionality but sacrifice the absolute privacy Apple claims to provide.
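The three-tier routing described above can be sketched as a small dispatcher. This is a hypothetical illustration, not Apple’s implementation: the `Tier`, `Query`, `route`, and `anonymize` names are invented here, and so is the decision rule (multi-step requests go to Gemini, single-step requests too heavy for the on-device model go to Private Cloud Compute, everything else stays on the phone). The real decision logic is not described in this article and is assumed.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    """Hypothetical labels for the three processing tiers."""
    ON_DEVICE = "Neural Engine (on-device)"
    PRIVATE_CLOUD = "Apple Private Cloud Compute"
    GEMINI = "Gemini on Google infrastructure"


@dataclass
class Query:
    text: str
    steps: int                # assumed: number of actions the request chains together
    needs_large_model: bool   # assumed: too heavy for the on-device model


def route(query: Query) -> Tier:
    """Assumed complexity rule; the thresholds are illustrative only."""
    if query.steps > 1:
        return Tier.GEMINI          # multi-step reasoning is sent to Google
    if query.needs_large_model:
        return Tier.PRIVATE_CLOUD   # single step, but handled on Apple's servers
    return Tier.ON_DEVICE           # simple commands never leave the phone


def anonymize(text: str, contacts: set[str]) -> str:
    """Hypothetical redaction: replace known contact names with a placeholder.

    Names are removed, but the rest of the request still reaches the model.
    """
    for name in contacts:
        text = text.replace(name, "<contact>")
    return text


if __name__ == "__main__":
    contacts = {"Suzanne"}
    for q in [
        Query("set a timer for 10 minutes", steps=1, needs_large_model=False),
        Query("summarize my latest email", steps=1, needs_large_model=True),
        Query("find yesterday's beach photo and send to Suzanne", steps=2, needs_large_model=True),
    ]:
        tier = route(q)
        # Only the Gemini tier leaves Apple's control, so only it is redacted.
        payload = anonymize(q.text, contacts) if tier is Tier.GEMINI else q.text
        print(f"{tier.value}: {payload}")
```

Note what the redaction leaves intact: the third-party model still learns that someone wanted yesterday’s beach photo sent to a contact, which is exactly the limitation of “anonymized” queries discussed in this section.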

Still Behind Google Assistant

Siri’s improvements are real. iOS 26.4 achieves 87% accuracy on multi-turn conversational tasks, up from 52% for the previous Siri, a 35-percentage-point gain. Conversational memory extends from 2–3 turns to 50. SiriKit grows from 120 intent categories to more than 340. On-screen context awareness lets Siri pull restaurant details from Safari or flight information from an email without manual input.

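But Google Assistant still scores 91% on the same benchmark. Siri now runs on Google’s AI yet still trails Google’s own product by 4 percentage points. Alexa lags both at 73%, but the gap between Siri and the leader persists. If Apple outsourced intelligence to Google, why isn’t Siri as good as Google Assistant? One plausible explanation is Apple’s own routing split: only about 10% of queries ever reach Gemini, while the other 90% are processed on-device or on Apple’s Private Cloud Compute servers.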
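Percentage points understate what users actually notice, which is failures. A quick check using only the accuracy figures quoted above; converting them to error rates is an illustrative reframing, not part of the benchmark itself:

```python
# Multi-turn accuracy figures quoted in the text, in percent.
ACCURACY = {
    "Siri (previous)": 52,
    "Siri (iOS 26.4)": 87,
    "Google Assistant": 91,
    "Alexa": 73,
}


def error_rate(product: str) -> int:
    """Share of tasks failed, in percent."""
    return 100 - ACCURACY[product]


# Absolute gain in percentage points, and the same gain relative to the old score.
gain_pp = ACCURACY["Siri (iOS 26.4)"] - ACCURACY["Siri (previous)"]
relative_gain = gain_pp / ACCURACY["Siri (previous)"]

# Fraction of the old Siri's failures that the upgrade eliminated.
errors_removed = gain_pp / error_rate("Siri (previous)")

# Remaining gap to the leader, in points and as a ratio of failure rates.
gap_pp = ACCURACY["Google Assistant"] - ACCURACY["Siri (iOS 26.4)"]
failure_ratio = error_rate("Siri (iOS 26.4)") / error_rate("Google Assistant")

print(f"Siri gain: {gain_pp} pp ({relative_gain:.0%} relative)")
print(f"Share of previous failures eliminated: {errors_removed:.0%}")
print(f"Gap to Google Assistant: {gap_pp} pp")
print(f"Siri fails {failure_ratio:.2f}x as often as Google Assistant")
```

Running it reports a 35-point gain (67% relative), 73% of previous failures eliminated, a 4-point gap, and a failure ratio of 1.44. On these numbers, a 4-point gap means Siri still fails roughly 44% more often than Google Assistant (13% of tasks versus 9%).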

Developer Fragmentation

iOS 26.4’s rollout has been messy. Apple repeatedly pushed back the beta, from January to February and then to late March 2026, after internal testing revealed Siri cutting users off mid-sentence, struggling with complex requests, and handling queries incorrectly. Developers who planned app features around the enhanced Siri now face uncertainty.

The promised SiriKit API enhancements won’t arrive all at once. Apple is shipping them piecemeal across iOS 26.5 (May) and iOS 27 (September), so developers will face a different set of Siri capabilities on each release. iOS 27 will also introduce “Extensions,” letting Siri use multiple AI providers (Gemini, ChatGPT, Claude), which gives users a choice but forces developers to support multiple backends.

It’s been nearly two years since Apple announced Siri’s AI upgrade at WWDC in June 2024. The delays suggest that integrating Gemini into Apple’s ecosystem is harder than expected, which raises questions about long-term maintainability.

Strategic Admission

The Gemini partnership reveals Apple’s AI strategy: treat models as commodity infrastructure, not competitive advantage. Apple isn’t trying to build the best language model. It’s building the best integration layer and ecosystem, then renting intelligence from whoever offers the best terms. iOS 27’s multi-provider Extensions system confirms this: AI models are interchangeable backends, like cloud storage providers.
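The “interchangeable backends” idea can be sketched as one interface that every provider implements, with the integration layer owning everything else. This is a hypothetical Python illustration, not Apple’s Extensions API: `ModelProvider`, `StubProvider`, `Assistant`, and their methods are invented names, and the stub simply echoes the prompt in place of a real model call.

```python
from typing import Optional, Protocol


class ModelProvider(Protocol):
    """Hypothetical contract every AI backend must satisfy."""

    name: str

    def complete(self, prompt: str) -> str: ...


class StubProvider:
    """Stand-in for a real backend; echoes the prompt instead of calling a model."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class Assistant:
    """Integration layer: owns the interface and context, rents the model."""

    def __init__(self, providers: list[ModelProvider], default: str) -> None:
        self._providers = {p.name: p for p in providers}
        self.switch_default(default)

    def switch_default(self, name: str) -> None:
        # Swapping vendors is a lookup change, not a rewrite of the assistant.
        if name not in self._providers:
            raise ValueError(f"unknown provider: {name}")
        self.default = name

    def ask(self, prompt: str, provider: Optional[str] = None) -> str:
        # Use the requested backend if given, otherwise the current default.
        backend = self._providers[provider or self.default]
        return backend.complete(prompt)


if __name__ == "__main__":
    assistant = Assistant(
        [StubProvider("Gemini"), StubProvider("ChatGPT"), StubProvider("Claude")],
        default="Gemini",
    )
    print(assistant.ask("plan my Saturday"))  # [Gemini] plan my Saturday
    assistant.switch_default("Claude")
    print(assistant.ask("plan my Saturday"))  # [Claude] plan my Saturday
```

The assistant depends only on the `complete` contract, which is what makes switching vendors cheap. It is also where the strategic dependency sits: every answer still comes from whichever company sits behind that interface.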

This approach has advantages. Apple avoids the capital expenditure and talent wars required to compete with Google, OpenAI, and Anthropic in model development. It can switch providers if better options emerge. But it creates strategic dependency on competitors who control the intelligence layer of Apple’s products.

For now, Siri works better than it ever has. Whether that’s enough to compete with Google Assistant, or to justify the privacy tradeoffs, depends on whether users trust Apple’s claim that the roughly 10% of queries sent to Google are truly anonymous. Apple chose pragmatism over vertical integration. Time will tell if that was the right call.