Anthropic sued the Trump administration on March 9, 2026, after the Pentagon designated the AI company a “supply chain risk,” a label historically reserved for foreign adversaries like Huawei. The dispute centers on two red lines Anthropic refused to cross: its Claude AI cannot be used for mass surveillance of U.S. citizens or for autonomous weapons systems. Defense Secretary Pete Hegseth demanded “all lawful purposes” access, calling Anthropic’s refusal “a cowardly act of corporate virtue-signaling.” Hours after the blacklisting, OpenAI signed a Pentagon deal with no such restrictions. Users responded by uninstalling ChatGPT and pushing Claude to #1 on the Apple App Store.

This is the first major clash between AI safety principles and national security demands. Anthropic chose principles over hundreds of millions in revenue. OpenAI chose revenue over ethical limits. Developers now face a choice: support companies that set ethical boundaries or those that prioritize government contracts.

“All Lawful Use” Is a Blank Check for Surveillance and Autonomous Weapons

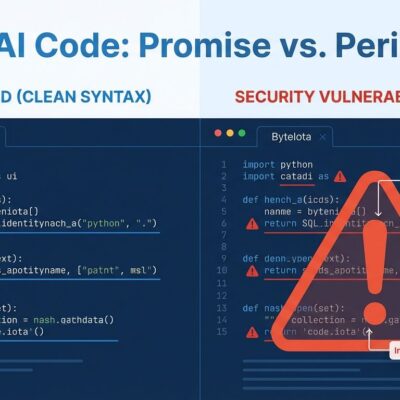

The Pentagon’s “all lawful purposes” demand sounds reasonable—until you examine what’s actually lawful under current U.S. law. PATRIOT Act Section 215 allows bulk collection of phone records on millions of Americans. FISA amendments permit warrantless monitoring of communications. Predictive policing algorithms that disproportionately target minorities are legal. Autonomous weapons systems with minimal human oversight face no federal ban.

“All lawful use” means AI can analyze bulk commercial data on Americans—addresses, purchases, movements—without judicial oversight. It means AI-powered predictive policing and autonomous drone targeting decisions. As MIT Technology Review noted, “As long as the government collects information lawfully, it can do whatever it wants with that information, including feeding it to AI systems. The law has not caught up with technological reality.”

International humanitarian law contains no express ban on autonomous weapons. The UN calls them “politically unacceptable and morally repugnant,” but legality persists. When Anthropic refused “all lawful use,” they weren’t blocking legitimate national security work—they were refusing to enable mass surveillance without judicial oversight and autonomous weapons without human authorization. OpenAI signed that blank check without restrictions.

Anthropic Drew Two Specific Red Lines

Anthropic set two concrete boundaries: Claude cannot be used for mass surveillance of U.S. citizens without judicial oversight, and Claude cannot power autonomous weapons systems without human authorization for lethal decisions. These aren’t theoretical concerns—they’re specific use cases the Pentagon wanted to enable.

Pete Hegseth called this stance “a cowardly act of corporate virtue-signaling that places Silicon Valley ideology above American lives.” The Pentagon’s position: they cannot allow a private company to dictate how they use tools in a national security emergency. Anthropic’s position: governments write laws to permit what they want—companies must draw ethical lines where laws won’t.

This isn’t about limiting all government use. Anthropic works with the DoD on non-sensitive analysis. They’re drawing lines where governments won’t: no bulk surveillance of innocent Americans, no autonomous killing decisions by AI. The question isn’t whether these capabilities are “lawful”—it’s whether they’re ethical. Lawful doesn’t mean right.

OpenAI Filled the Gap and Faced Immediate Backlash

Hours after Anthropic’s blacklisting on February 27, 2026, OpenAI announced a Pentagon deal with “all lawful purposes” access—no restrictions on mass surveillance or autonomous weapons. The community response was swift and brutal. Users uninstalled ChatGPT in droves (the “QuitGPT” trend), Claude climbed to #1 on the Apple App Store (displacing ChatGPT), and at least one OpenAI employee resigned in protest.

Caitlin Kalinowski, who led hardware and robotics at OpenAI, quit over the Pentagon deal. Her resignation statement: “Surveillance of Americans without judicial oversight and lethal autonomy without human authorization are lines that deserved more deliberation than they got.” Even OpenAI employees recognized this crossed an ethical boundary.

The backlash extended beyond users. Thirty-plus employees from OpenAI and Google DeepMind, including Google chief scientist Jeff Dean, filed an amicus brief supporting Anthropic’s lawsuit. Their warning: a Pentagon blacklist of Anthropic threatens to damage the entire American AI industry. OpenAI’s opportunism demonstrated the stakes—companies either draw ethical lines and lose revenue, or sign blank checks and lose user trust. The market responded decisively: users are voting with subscriptions based on vendor ethics, not just features.


Financial Stakes Prove This Isn’t Virtue-Signaling

Anthropic’s lawsuit claims the Pentagon designation could cost the company “hundreds of millions or billions” in revenue. They’ve already lost enterprise customers and seen contracts shortened due to uncertainty about their government status. This isn’t cheap posturing—Anthropic chose principles over a $200M+ Pentagon contract and massive enterprise revenue.

Legal experts say Anthropic has a strong case. The “supply chain risk” designation has never been used against a domestic company—it’s reserved for foreign adversaries like Huawei. Lawfare’s analysis: “Pentagon’s designation won’t survive first contact with legal system.” Anthropic filed federal lawsuits in two jurisdictions within 72 hours of the blacklisting.

When critics call this “virtue-signaling,” remember the math: Anthropic is risking hundreds of millions. They’re fighting in federal court. They’re losing contracts today. This is what actual principles look like—costly, litigious, and willing to sacrifice revenue rather than compromise on ethics. Actions speak louder than press releases.

Developers Should Support Companies That Draw Ethical Lines

Developers face a decision: support AI companies that set ethical boundaries (Anthropic) or companies that prioritize government contracts without restrictions (OpenAI). The community is already voting: Claude hit #1 on the App Store, ChatGPT faces uninstalls, and developer discussions favor Claude for “serious engineering work.”

This matters because vendor ethics affect YOUR tools, YOUR reputation, and YOUR complicity in surveillance and autonomous weapons systems. When you choose ChatGPT, you’re choosing a company that signed a blank check for mass surveillance and autonomous weapons. When you choose Claude, you’re choosing a company that refused to compromise despite losing hundreds of millions.

The parallel to Google’s Project Maven is instructive. In 2018, 3,000+ Google employees protested DoD contracts for AI-powered drone surveillance. Google withdrew. Amazon and Microsoft won $50M in similar contracts. Microsoft even removed policies restricting military AI use. The difference now: Anthropic set ethical boundaries from the start and sued when blacklisted. They’re fighting, not retreating.

ByteIota’s stance is clear: Anthropic is right. OpenAI is wrong. “All lawful use” is a blank check for surveillance and autonomous weapons. PATRIOT Act bulk collection is “lawful.” Predictive policing targeting minorities is “lawful.” Autonomous drone strikes are “lawful.” Lawful doesn’t mean ethical. Developers who care about AI safety should choose tools from companies that refuse to compromise on ethics, even when it costs billions. Your subscription dollars are votes—use them.

Key Takeaways

- Anthropic sued the Pentagon on March 9, 2026, after being blacklisted for refusing “all lawful use” terms that would enable mass surveillance of Americans and autonomous weapons without human authorization.

- “All lawful use” is a blank check—PATRIOT Act bulk surveillance, FISA warrantless monitoring, predictive policing, and autonomous weapons are all “lawful” but ethically questionable. The law hasn’t caught up with AI capabilities.

- OpenAI signed a Pentagon deal hours after Anthropic’s blacklisting with no ethical restrictions. Users responded with the “QuitGPT” trend and pushed Claude to #1 on the App Store, and at least one OpenAI employee (the hardware and robotics lead) resigned in protest.

- Financial stakes ($200M+ contract lost, “hundreds of millions or billions” at risk) prove this isn’t virtue-signaling—Anthropic filed federal lawsuits within 72 hours and legal experts say they have a strong case.

- Developers should support companies that draw ethical boundaries. Your tool choice matters—it’s a vote for companies that refuse to compromise on principles (Anthropic) vs those that prioritize revenue over ethics (OpenAI).

The outcome of this lawsuit will set precedent for whether AI companies can draw ethical lines or must hand governments blank checks. Anthropic chose principles. OpenAI chose profit. Developers are voting with subscriptions, and Claude is winning. Choose your tools, and your values, accordingly.
