AIRI hit GitHub Trending #5 today (March 6, 2026) with 3,006 stars, bringing self-hosted AI companions to developers who want privacy and control. Inspired by Neuro-sama, the AI VTuber with millions of fans, this open-source platform combines real-time voice chat, autonomous gaming in Minecraft and Factorio, and 2D/3D character animation—all running on your hardware via WebGPU and WebAssembly. Unlike Character.AI or JanitorAI, which lock your data in their clouds, AIRI gives you complete ownership and supports 25+ LLM providers, from OpenAI to local models via Ollama.

What Makes AIRI Different: Gaming Meets Voice and Animation

AIRI isn’t another chatbot. It’s an AI companion that plays Minecraft and Factorio alongside you through natural language commands. Tell it to “build a 3×3 cabin” and watch it autonomously plan paths, gather resources, and complete construction. This gaming integration, powered by the merged airi-minecraft package, demonstrates agent capabilities most AI companions can’t match.

The voice pipeline delivers sub-500ms latency: voice activity detection catches your speech, speech-to-text converts it, the LLM processes it, and text-to-speech synthesizes a response faster than you’d notice. Add Live2D (2D anime characters) or VRM (3D models) with automatic blinking and emotion expression tied to conversation context, and you have an immersive companion that feels genuinely interactive.
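The four stages described above can be pictured as a chain of async steps that share one latency budget. What follows is a minimal TypeScript sketch of that idea, not AIRI's actual code: `Stage`, `runPipeline`, and the stub stages are hypothetical names, and the 500 ms budget is the only figure taken from the text.

```typescript
// Hypothetical sketch: a staged voice pipeline with per-stage timing.
// None of these names come from the AIRI codebase.
type Stage = {
  name: string;
  run: (input: string) => Promise<string>;
};

type PipelineResult = {
  output: string;
  timingsMs: Record<string, number>;
  totalMs: number;
  withinBudget: boolean;
};

// Runs the stages in order, recording how long each one takes.
// The clock is injectable so the timing logic can be checked deterministically.
async function runPipeline(
  stages: Stage[],
  input: string,
  budgetMs: number,
  now: () => number = () => Date.now(),
): Promise<PipelineResult> {
  const timingsMs: Record<string, number> = {};
  const start = now();
  let value = input;
  for (const stage of stages) {
    const t0 = now();
    value = await stage.run(value);
    timingsMs[stage.name] = now() - t0;
  }
  const totalMs = now() - start;
  return { output: value, timingsMs, totalMs, withinBudget: totalMs <= budgetMs };
}

// Stub stages standing in for real VAD, STT, LLM, and TTS components.
const stubStages: Stage[] = [
  { name: "vad", run: async (audio) => audio.trim() },
  { name: "stt", run: async (audio) => `text(${audio})` },
  { name: "llm", run: async (text) => `reply(${text})` },
  { name: "tts", run: async (reply) => `audio(${reply})` },
];
```

Calling `runPipeline(stubStages, input, 500)` returns the per-stage timings alongside the output, which makes it easy to see which stage is eating the budget when the total drifts past 500 ms.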

Character.AI and JanitorAI offer text chat. SillyTavern lacks animation and gaming. Of these platforms, AIRI is the only one that combines all three (voice, gaming, and visual characters) for developers building interactive AI experiences.

WebGPU Brings AI to Your Browser

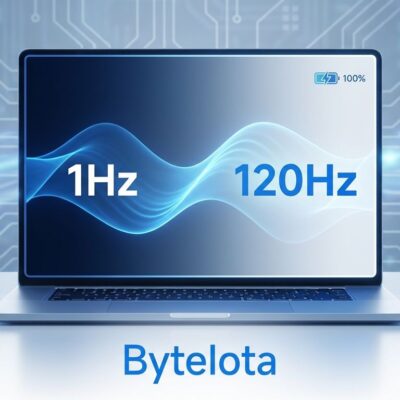

AIRI’s web-first architecture runs directly in modern browsers using WebGPU for GPU acceleration and WebAssembly for near-native performance. The inference pipeline flows through WebAssembly tokenization, WebGPU processing, and WebAssembly sampling—no installation required. This matters because emerging web standards are shifting GPU economics from servers to clients, and AIRI demonstrates what that future looks like.
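Because WebGPU is not available in every browser, a web app that relies on it usually checks for support before loading a model. The sketch below shows one way to do that using the standard `navigator.gpu.requestAdapter()` call. The `pickBackend` helper and the idea of falling back to a WebAssembly-only path are assumptions for illustration; the article does not say how AIRI handles browsers without WebGPU.

```typescript
// Minimal structural types so the sketch compiles without WebGPU type packages.
type GpuLike = { requestAdapter: () => Promise<unknown> };
type NavigatorLike = { gpu?: GpuLike };

type Backend = "webgpu" | "wasm";

// Hypothetical helper: choose WebGPU when an adapter is available,
// otherwise fall back to a WebAssembly-only path (an assumed fallback).
async function pickBackend(nav: NavigatorLike): Promise<Backend> {
  if (!nav.gpu) return "wasm"; // API not exposed by this browser
  try {
    const adapter = await nav.gpu.requestAdapter();
    return adapter ? "webgpu" : "wasm"; // null means no suitable GPU
  } catch {
    return "wasm";
  }
}
```

In a browser this would be called as `pickBackend(navigator as NavigatorLike)`. Taking the navigator as a parameter keeps the function testable outside the browser.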

Desktop apps built with Tauri add native CUDA (NVIDIA) and Metal (Apple) acceleration through HuggingFace’s Candle framework, bypassing complex Python dependencies. You get three deployment options: Stage Web (browser), Stage Tamagotchi (desktop), and Stage Pocket (mobile PWA). The unified xsai adapter lets you swap between 25+ LLM providers—OpenAI, Claude, DeepSeek, or local models—without touching application logic.
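Swapping providers without touching application logic generally works because many providers accept the same OpenAI-style chat-completions request, so only the base URL, model name, and key change. The sketch below illustrates that pattern. It is not the xsai API: `ProviderConfig`, `buildChatRequest`, and the placeholder key are hypothetical, while the two base URLs are the public OpenAI endpoint and Ollama's OpenAI-compatible endpoint.

```typescript
// Hypothetical provider description; this is NOT the xsai API.
type ProviderConfig = { baseURL: string; model: string; apiKey?: string };

type ChatMessage = { role: "system" | "user" | "assistant"; content: string };

type HttpRequest = { url: string; headers: Record<string, string>; body: string };

// Two example providers that both accept OpenAI-style chat-completions requests.
const openaiProvider: ProviderConfig = {
  baseURL: "https://api.openai.com/v1",
  model: "gpt-4o-mini",
  apiKey: "YOUR_API_KEY", // placeholder, not a real key
};

const ollamaProvider: ProviderConfig = {
  baseURL: "http://localhost:11434/v1", // Ollama's OpenAI-compatible endpoint
  model: "llama3.2",
};

// Builds the same request shape for any provider; only the config differs.
function buildChatRequest(provider: ProviderConfig, messages: ChatMessage[]): HttpRequest {
  const headers: Record<string, string> = { "Content-Type": "application/json" };
  if (provider.apiKey) headers["Authorization"] = `Bearer ${provider.apiKey}`;
  return {
    url: `${provider.baseURL}/chat/completions`,
    headers,
    body: JSON.stringify({ model: provider.model, messages }),
  };
}
```

Application code would call `buildChatRequest(ollamaProvider, messages)` or `buildChatRequest(openaiProvider, messages)` and pass the result to `fetch`, so switching providers is a one-argument change.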

This isn’t theoretical. You can experiment in a browser today, then upgrade to desktop when you need serious performance. WebGPU support in Chrome and Edge makes this practical now, not someday.

Set Up AIRI in 15 Minutes

Getting AIRI running requires Node.js 22+ and pnpm. Clone the repository, install dependencies, configure your environment, and launch:

git clone https://github.com/moeru-ai/airi.git

cd airi

pnpm install

cp .env.example .env

# Add OpenAI API key in .env

pnpm dev

# Access at http://localhost:5173

For privacy-focused developers who want zero cloud dependency, install Ollama for local model inference:

curl -fsSL https://ollama.com/install.sh | sh

ollama pull llama3.2

# Configure AIRI to use localhost:11434

The web version works immediately. For better performance with larger models, run the desktop version:

pnpm dev:tamagotchi

This unlocks native GPU acceleration (CUDA on NVIDIA cards, Metal on Apple Silicon) for faster inference without the overhead of browser sandboxing.
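Before pointing AIRI at a local model, it can help to confirm that the Ollama server from the earlier step is running and that the model was actually pulled. The sketch below does this with Ollama's `GET /api/tags` endpoint, which lists locally available models. The helper names `hasModel` and `checkOllama` are hypothetical, and the sketch assumes a runtime with a global `fetch` (a modern browser or Node.js 18+).

```typescript
// Only the fields this sketch reads from Ollama's GET /api/tags response.
type TagsResponse = { models: { name: string }[] };

// True if a pulled model matches the requested name, ignoring any ":tag" suffix.
function hasModel(tags: TagsResponse, wanted: string): boolean {
  return tags.models.some((m) => m.name === wanted || m.name.split(":")[0] === wanted);
}

// Queries a local Ollama server; the default base URL uses the port from the setup steps.
async function checkOllama(wanted: string, baseURL = "http://localhost:11434"): Promise<boolean> {
  try {
    const res = await fetch(`${baseURL}/api/tags`);
    if (!res.ok) return false;
    return hasModel((await res.json()) as TagsResponse, wanted);
  } catch {
    return false; // server not running or unreachable
  }
}
```

`checkOllama("llama3.2")` resolves to `false` rather than throwing when the server is down, so it can be used as a simple preflight check before starting a session.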


Self-Hosted Privacy Challenges Cloud AI Platforms

AIRI’s core philosophy is data ownership. All conversations, memories, and personal interactions stay on your hardware—local machine or your own cloud infrastructure. You choose which LLM provider to use: cloud APIs like OpenAI and Claude for quality, or local models via Ollama for complete privacy. Character.AI stores everything in their cloud, raising privacy concerns. JanitorAI requires cloud LLM APIs, giving you partial control. AIRI gives you the full stack.

The privacy argument isn’t abstract. Post-ChatGPT data concerns are real. Developers and organizations bound by GDPR compliance or internal policies can’t send sensitive data to third-party clouds. AIRI solves this by putting you in control—no vendor lock-in, no data leakage, no subscription dependencies if you run local models.

Local inference has trade-offs. Cloud APIs respond in 200-500ms with high quality. Local models on decent GPUs take 500ms to a few seconds, with quality depending on model size. The smart approach? Hybrid: cloud for first impressions, local for follow-ups and sensitive conversations.
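The hybrid approach amounts to a small routing decision made per message. Here is a minimal sketch of such a router, assuming a crude keyword check for sensitivity. The `chooseRoute` function, the `Turn` type, and the pattern list are all hypothetical, and a real deployment would need a far more careful definition of "sensitive".

```typescript
type Route = "cloud" | "local";

type Turn = { text: string; isFirstTurn: boolean };

// Hypothetical keyword list; real sensitivity detection needs more than this.
const SENSITIVE_PATTERNS: RegExp[] = [/password/i, /\bssn\b/i, /medical/i, /salary/i];

function isSensitive(text: string): boolean {
  return SENSITIVE_PATTERNS.some((pattern) => pattern.test(text));
}

// Sensitive content always stays local; otherwise only the first turn uses the cloud.
function chooseRoute(turn: Turn): Route {
  if (isSensitive(turn.text)) return "local";
  return turn.isFirstTurn ? "cloud" : "local";
}
```

Checking sensitivity before anything else means a sensitive first message never reaches a cloud API, even though first turns would otherwise be routed there.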

Use Cases: From Gaming to VTubing to Privacy

AIRI targets multiple audiences. Privacy-focused developers get a self-hosted AI companion with no cloud dependency. Minecraft and Factorio players gain an AI assistant that autonomously executes commands like “collect 10 diamonds” or “build a house.” VTuber creators inspired by Neuro-sama can deploy AIRI with Live2D characters and stream interactions. AI researchers exploring autonomous agents and memory systems have an open TypeScript codebase to modify and extend.

The project is at an early stage, and both the long-term memory system (Alaya) and the community plugin API are works in progress. But the GitHub community is active: 3,006 stars gained today, ongoing discussions, and first-time contributors shipping improvements. If you’re comfortable with Node.js and pnpm setup, AIRI offers capabilities you won’t find elsewhere.

Key Takeaways

- AIRI combines self-hosted privacy, real-time voice chat, autonomous gaming (Minecraft/Factorio), and 2D/3D character animation—a unique feature set no cloud platform matches

- WebGPU and WebAssembly enable browser-based AI inference without installation, while desktop apps add native GPU acceleration for serious workloads

- Setup takes 15 minutes with Node.js and pnpm, supporting 25+ LLM providers from OpenAI to local Ollama models

- Self-hosted architecture gives you complete data ownership, addressing privacy concerns that plague cloud AI services

- The project is early stage but actively developed, trending on GitHub today with 3,006 stars and a growing community

If you want an AI companion you truly own—one that plays games, speaks naturally, and keeps your data private—AIRI is your best option in 2026. Get started at github.com/moeru-ai/airi.