Sonar’s 2026 State of Code Developer Survey, released in January 2026 after surveying 1,100+ developers, reveals a critical paradox: AI coding tools now generate 42% of all committed code—expected to reach 65% by 2027—yet 96% of developers don’t fully trust this output, and only 48% consistently verify it before committing. While GitHub Copilot users complete individual tasks 55% faster, organizations experience limited end-to-end throughput improvement as larger pull requests, higher review costs, and increased incidents create second-order effects that slow delivery pipelines.

This “verification gap” represents the defining challenge of AI-assisted development. Developers identified “reviewing and validating AI-generated code” as the number one skill needed in the AI era (47%), ahead of even prompt engineering (42%). With AI-generated code containing 1.7× more issues than human code and 40-62% containing security or design flaws, organizations that fail to build verification infrastructure risk accumulating technical debt that could cost 4× traditional maintenance levels by year two.

The Trust-Verification Gap: 96% Distrust, 48% Verify

The numbers expose the core problem: 72% of developers use AI coding tools daily, yet 96% don’t fully trust the output. Despite this near-universal distrust, only 48% always verify code before committing. This isn’t a small gap—it’s a chasm where untrusted code enters production systems without adequate validation.

Nearly all developers (95%) spend at least some effort reviewing AI code, with 59% rating this effort as “moderate to substantial.” More striking: 38% report that AI-generated code requires MORE review effort than human-written code, directly contradicting the productivity narrative. The verification process hasn’t scaled with generation speed, creating a bottleneck that shifts work from authorship to validation.

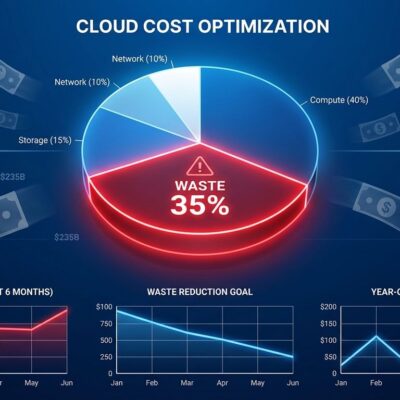

Additionally, 35% of developers use personal AI accounts rather than work-sanctioned ones, creating governance blind spots for security and compliance teams. Organizations lack visibility into what code is being generated, how it’s being verified, and what risks are accumulating in their codebases.

The Productivity Paradox: Fast Individuals, Slow Organizations

AI tools deliver impressive individual gains: 55% faster task completion according to GitHub’s study of 4,800 developers, average productivity boosts of 35%, and 54% of developers reporting higher job satisfaction. However, organizations see limited improvement in end-to-end delivery throughput, and some studies show near-zero gains at the system level.

The disconnect stems from second-order effects. AI adoption correlates with 18% larger pull requests, 24% higher incident rates per PR, and 30% higher change failure rates. These effects are structural, not incidental: faster code generation creates more code to review, more bugs to catch, and more incidents to handle. The bottleneck has shifted from writing to verification, and traditional velocity metrics mask this systemic slowdown.

Engineering leaders who focus on individual developer productivity miss the organizational impact. When code generation outpaces review capacity, the system slows down regardless of how fast individuals write code. This helps explain why enterprises still struggle to see proportional delivery improvements even though GitHub Copilot is in use at 90% of Fortune 100 companies.
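The bottleneck argument can be made concrete with a toy model. The sketch below is a minimal illustration, not a measurement. The `Pipeline` class and its weekly capacities are hypothetical, and "55% faster" is assumed to mean a 1.55× authoring rate with review capacity unchanged.

```python
from dataclasses import dataclass


@dataclass
class Pipeline:
    """Hypothetical two-stage delivery pipeline, in changes per week."""
    authoring_capacity: float  # changes the team can write per week
    review_capacity: float     # changes the team can review per week

    def throughput(self) -> float:
        # End-to-end delivery is limited by the slowest stage.
        return min(self.authoring_capacity, self.review_capacity)


# Illustrative numbers only; they are not taken from any survey.
before = Pipeline(authoring_capacity=20.0, review_capacity=22.0)

# Assumption: AI raises the authoring rate by 55%, review stays the same.
after = Pipeline(authoring_capacity=20.0 * 1.55, review_capacity=22.0)

gain = after.throughput() / before.throughput() - 1
print(f"authoring gain: 55%, end-to-end gain: {gain:.0%}")
```

Under these assumed numbers the team writes 55% more changes but ships only 10% more, because review becomes the limiting stage as soon as authoring capacity passes it.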


The Quality Crisis: 1.7× More Issues, 4× the Cost

AI-generated code contains 1.7× more total issues than human-written code, with maintainability problems 1.64× higher. Between 40% and 62% of AI code contains security or design flaws, and vulnerability likelihood runs 20-30% higher than human-authored baselines. These aren’t edge cases; they’re measurable patterns across production systems.

The technical debt compounds rapidly. Code churn is doubling in AI-assisted development, while copy-pasted code is up 48%. AI tools replicate patterns from existing codebases, bugs included, and spread them across multiple files. By year two, unmanaged AI code can drive maintenance costs to 4× traditional levels as accumulated debt demands remediation.

By 2026, 75% of technology decision-makers face moderate to severe technical debt from AI-speed practices. The “move fast” approach without verification infrastructure creates long-term liabilities that exceed short-term productivity gains. Organizations shipping AI code without quality gates are mortgaging future maintainability for current velocity.

Building Verification Infrastructure: From Generation to Validation

Successful teams have shifted investment from code generation to verification infrastructure. The most effective approach layers automated checks with focused human review, treating AI code as untrusted input that requires validation before merge.

Teams that handle AI code volume effectively implement verification-first frameworks:

## Intent

[1-2 sentences: What does this PR do and why?]

## Proof It Works

- [ ] All tests passing (new tests for new functionality)

- [ ] Manual testing completed: [steps taken]

- [ ] Screenshots/logs attached: [evidence]

## Risk Assessment

- AI-generated sections: [identify which parts]

- Security-sensitive changes: [flag if applicable]

- External dependencies: [note new integrations]

## Focused Review Areas

- Business logic validation: [where human judgment critical]

- Architecture implications: [system-level impacts]

- Security considerations: [authentication, authorization, data handling]

Best practices center on proof-of-work requirements: a strong testing culture with more than 70% automated coverage, organizational code standards enforced automatically in CI/CD pipelines, and architectural constraints that limit PRs to under 500 lines so AI reviewers are not pushed past their context windows. No PR merges without tests, working demos, or documented proof of functionality.
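The thresholds above lend themselves to an automated merge gate. The following is a minimal sketch under stated assumptions: the `PullRequest` fields and the `gate_failures` helper are hypothetical names, and a real pipeline would fill them in from its own CI system and coverage tool.

```python
from dataclasses import dataclass

# Thresholds taken from the practices described above.
MIN_COVERAGE = 0.70      # require more than 70% automated coverage
MAX_CHANGED_LINES = 500  # keep PRs under 500 changed lines


@dataclass
class PullRequest:
    """Hypothetical summary of a PR; field names are illustrative."""
    changed_lines: int
    coverage: float     # fraction in [0, 1] reported by the test run
    tests_passed: bool
    has_proof: bool     # tests, demo, or documented evidence attached


def gate_failures(pr: PullRequest) -> list[str]:
    """Return the reasons a PR should be blocked; empty means it may merge."""
    failures = []
    if not pr.tests_passed:
        failures.append("tests are failing")
    if pr.coverage <= MIN_COVERAGE:
        failures.append(
            f"coverage {pr.coverage:.0%} is not above {MIN_COVERAGE:.0%}"
        )
    if pr.changed_lines >= MAX_CHANGED_LINES:
        failures.append(
            f"{pr.changed_lines} changed lines; limit is under {MAX_CHANGED_LINES}"
        )
    if not pr.has_proof:
        failures.append("no proof of functionality attached")
    return failures
```

A CI job could call `gate_failures` and fail the build whenever the returned list is non-empty. Returning every reason at once, rather than stopping at the first failure, lets the author fix all problems in a single pass.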

The emerging pattern: organizations can’t rely on manual code review alone. Verification infrastructure—automated testing, quality gates, enforced standards—becomes as critical as development infrastructure. Teams that invest in verification systems can safely scale AI adoption. Those that don’t will drown in technical debt.
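Enforced standards can also cover the PR description itself. As a rough sketch, the check below confirms that a description contains the four sections of the template shown earlier and lists any checklist items left unticked. The function names are hypothetical, and the description is assumed to be plain markdown text.

```python
import re

# Section headings from the PR template shown earlier in this section.
REQUIRED_SECTIONS = (
    "Intent",
    "Proof It Works",
    "Risk Assessment",
    "Focused Review Areas",
)


def missing_sections(description: str) -> list[str]:
    """Return template sections that are absent from a PR description."""
    found = {
        m.group(1).strip()
        for m in re.finditer(r"^##\s+(.+)$", description, re.MULTILINE)
    }
    return [s for s in REQUIRED_SECTIONS if s not in found]


def unchecked_items(description: str) -> list[str]:
    """Return the text of checklist items still marked '- [ ]'."""
    return [
        m.group(1).strip()
        for m in re.finditer(r"^- \[ \]\s+(.+)$", description, re.MULTILINE)
    ]
```

A bot or CI step could block the merge while either function returns a non-empty list, so reviewers only see PRs whose evidence is already filled in.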

The Skill Shift: From Writing Code to Verifying It

The most valuable developer skill has shifted. When surveyed about AI-era competencies, 47% ranked “reviewing and validating AI-generated code for quality and security” as the top skill, ahead of prompt engineering (42%). This represents a paradigm shift in what it means to be a software developer.

With 64% of developers now using AI agents (25% use them regularly, 39% have experimented with them), and GitHub Copilot reaching 20M+ users across 90% of Fortune 100 companies, the developer role is evolving from primary code author to editor and validator of AI-generated code. Focus shifts from writing to reviewing, from creating to architecting, from implementation to verification.

This has profound implications for developer training, hiring, and career paths. Junior developers may need to learn verification before authorship. Senior engineers become verification bottlenecks as the most qualified reviewers. Organizations must rethink productivity metrics when the primary activity shifts from writing to validating code generated by AI.

Key Takeaways

- The trust-verification gap is the defining challenge of AI coding: 96% of developers distrust AI output, yet only 48% consistently verify before committing, creating a dangerous pattern where untrusted code enters production systems.

- Individual productivity gains (55% faster tasks) don’t translate to organizational throughput because of second-order effects: 18% larger PRs, 24% higher incident rates per PR, and 30% higher change failure rates. The bottleneck has shifted from writing to verification.

- AI code quality is measurably worse: 1.7× more issues, 40-62% containing security or design flaws, 20-30% higher vulnerability likelihood, and unmanaged AI code that can cost 4× traditional maintenance levels by year two.

- Verification infrastructure is not optional—successful teams layer automated testing (>70% coverage), code standards enforcement, PR size limits (<500 lines), and proof-of-work requirements to safely scale AI adoption.

- The most valuable developer skill shifted from code authorship to verification and validation (47% cite this as #1 AI-era skill), fundamentally changing what it means to be a software developer.

As AI code generation races toward 65% of all committed code by 2027, the question is no longer whether to adopt AI coding tools, but whether organizations are building the verification infrastructure to handle this volume safely. The competitive advantage has shifted from generation speed to verification quality.