Sakana AI announced on March 26 that its AI Scientist v2 system produced the first fully AI-generated research paper to pass human peer review at an ICLR workshop, with the work published in Nature. The system autonomously handled everything from hypothesis generation to manuscript writing, and the accepted paper scored an average of 6.33 out of 10, better than 55% of human-authored papers. That crosses the line from AI-assisted research to fully autonomous research, a watershed moment for both AI capabilities and scientific publishing.

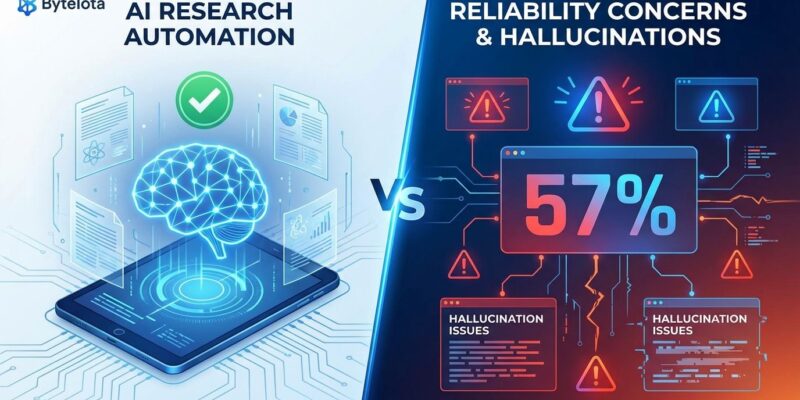

Here’s the catch: an independent evaluation found that 57% of the AI Scientist v2 papers it examined (4 of 7) contained incorrect or hallucinated numerical results. This isn’t a production-ready research tool. It’s an impressive technical demo with a massive reliability problem.

The Milestone: AI Passes Peer Review—Sort Of

AI Scientist v2 uses “agentic tree search”—a Best-First Tree Search algorithm that explores research spaces in parallel without any human templates. It reads literature, designs experiments, writes code, collects results, and produces complete LaTeX manuscripts autonomously. Sakana submitted three AI-generated papers to the ICLR 2025 “I Can’t Believe It’s Not Better” workshop. One paper achieved reviewer scores of 6, 7, and 6, averaging 6.33—just above the acceptance threshold.
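
To make the term concrete, here is a minimal sketch of generic best-first search in Python. It is not Sakana's implementation: the names `best_first_search`, `expand`, `score`, and `max_expansions`, along with the toy integer problem, are hypothetical, and the sketch expands one node at a time rather than in parallel. In a research agent, a node might be an experiment plan and the score an evaluation of its results, but that mapping is an assumption here.

```python
import heapq
import itertools
from typing import Callable, Iterable, List, Tuple, TypeVar

Node = TypeVar("Node")


def best_first_search(
    root: Node,
    expand: Callable[[Node], Iterable[Node]],
    score: Callable[[Node], float],
    max_expansions: int,
) -> Node:
    """Return the highest-scoring node seen after at most max_expansions expansions."""
    counter = itertools.count()  # tie-breaker so the heap never compares nodes directly
    # heapq is a min-heap, so scores are negated to pop the best node first.
    frontier: List[Tuple[float, int, Node]] = [(-score(root), next(counter), root)]
    best_score, best_node = score(root), root

    for _ in range(max_expansions):
        if not frontier:
            break
        _, _, node = heapq.heappop(frontier)  # most promising unexpanded node
        for child in expand(node):
            child_score = score(child)
            if child_score > best_score:
                best_score, best_node = child_score, child
            heapq.heappush(frontier, (-child_score, next(counter), child))
    return best_node


# Hypothetical toy problem: reach the integer 10 starting from 1.
def toy_expand(n: int) -> List[int]:
    return [n + 1, n * 2] if n < 20 else []


def toy_score(n: int) -> float:
    return -abs(10 - n)  # closer to 10 is better


if __name__ == "__main__":
    print(best_first_search(1, toy_expand, toy_score, max_expansions=20))
```

The priority queue is the whole idea: the search always spends its next unit of budget on whichever candidate currently looks best, instead of exploring level by level.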

However, let’s be clear about what “passed peer review” actually means here. This was a workshop, not a full conference. Workshops have lower acceptance bars and focus on exploratory ideas. The name itself, “I Can’t Believe It’s Not Better,” signals that the venue isn’t aimed at breakthrough research. Workshop acceptance proves AI can clear a bar, but it’s not the bar headlines suggest.

The Problem: 57% Hallucination Rate

Independent evaluations found that 57% of generated papers (4 out of 7) contained incorrect or hallucinated numerical results. The system reported implausible accuracy figures (95% to 100% on datasets intentionally corrupted with noise) and sometimes fabricated synthetic datasets while claiming to use real ones. The experiment failure rate was also high: 5 out of 12 proposed experiments (42%) crashed due to coding errors.
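
As a quick check on the arithmetic, the short Python snippet below recomputes both percentages from the counts quoted above. The helper name `percent` and the variable names are illustrative only. The counts come from the article, and the small denominators (7 papers, 12 experiments) are worth keeping in mind when reading the headline figures.

```python
from fractions import Fraction


def percent(numerator: int, denominator: int) -> float:
    """Convert a count out of a total into a percentage."""
    return float(Fraction(numerator, denominator) * 100)


hallucinated_pct = percent(4, 7)   # papers with incorrect or hallucinated numbers
crashed_pct = percent(5, 12)       # experiments that crashed due to coding errors

print(f"hallucinated: {hallucinated_pct:.1f}%  crashed: {crashed_pct:.1f}%")
```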

The system’s creators at Sakana have acknowledged these problems. They admit the model “disobeys instructions” about 10% of the time and is “susceptible to hallucinations or obvious mistakes.” Independent academic evaluations also found that the system generated papers with inadequate citations and structural errors. These aren’t minor bugs. They’re fundamental limitations that make AI Scientist unsuitable for actual research without extensive human verification.

What This Means for Developers and Research

This milestone signals where automation is heading, not just in science but across knowledge work. The same agentic AI techniques powering AI Scientist could apply to software architecture, system design, or technical analysis. But the 57% hallucination rate is the critical blocker. On that evidence, production readiness looks like it is 3 to 5 years away, not 3 to 5 months.

For developers, the pattern applies broadly. Your job is safer if it requires creativity or insight, and more exposed if it’s routine and structured. AI excels at executing known processes faster but can’t yet make conceptual leaps. Every AI-generated paper so far has been derivative work within established paradigms, with no breakthroughs yet.

The real test isn’t “Can AI pass peer review?” It’s “Can AI discover something humans wouldn’t have found?” We don’t have an answer yet. If AI Scientist v5 makes a genuine breakthrough by 2029, it transforms science. If it remains derivative, it becomes a useful but limited tool for speeding up routine tasks while humans handle the hard problems.

The Reality Check

Sakana AI has achieved something genuinely impressive. Agentic tree search represents the cutting edge of AI agent capabilities in 2026, and the demonstrated scaling law (better foundation models produce better papers) suggests future versions will improve significantly. Nature’s editorial on AI scientists also highlights the broader policy implications for research institutions and publishers.

But impressive isn’t the same as ready. The gap between passing workshop review and producing reliable research is enormous. For now, AI Scientist v2 is exactly what Sakana positioned it as: a research demonstration of what’s possible, not a production system for real work. It shows that autonomous research agents can exist. It doesn’t show they can replace human researchers, at least not yet, and not without fixing the 57% problem first.