AI Copilot Makes Developers 19% Slower: The Data

Eighty-four percent of developers use AI coding assistants, convinced they’re coding faster. A rigorous 2025 study of experienced developers found the opposite: they completed tasks 19% slower with AI. Most revealing? After experiencing the slowdown, developers still believed AI had improved their speed by 20%. That 39-percentage-point gap between perception and reality might be the most expensive delusion in software development right now.
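To make the gap concrete, here is a minimal sketch that applies both percentages to the same task. The 100-minute baseline is hypothetical, and reading "improved their speed by 20%" as "20% less time" is an assumption, not something the study wording here confirms.

```python
def minutes_with_change(baseline_minutes: float, change_pct: float) -> float:
    """Task time after applying a percent change to completion time."""
    return baseline_minutes * (1.0 + change_pct / 100.0)

BASELINE_MINUTES = 100.0  # hypothetical task length, chosen for easy reading
MEASURED_CHANGE = 19.0    # from the text: tasks took 19% longer with AI
BELIEVED_CHANGE = -20.0   # from the text, read as "20% less time" (assumption)

measured = minutes_with_change(BASELINE_MINUTES, MEASURED_CHANGE)
believed = minutes_with_change(BASELINE_MINUTES, BELIEVED_CHANGE)
gap_points = MEASURED_CHANGE - BELIEVED_CHANGE

print(f"measured: {measured:.0f} min, believed: {believed:.0f} min")
print(f"gap: {gap_points:.0f} percentage points")
```

On that reading, a task that would take 100 minutes unaided takes 119 with AI, while the developer believes it took 80.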

The Productivity Illusion: Fast Code, Slow Development

The illusion works because AI makes the visible part of development, writing code, feel effortless. You get autocomplete suggestions, entire function bodies, boilerplate in seconds. It feels productive. The problem is everything that happens after.

According to the METR study, developers accepted fewer than 44% of AI suggestions. The rest, more than 56%, needed major revisions. That’s not a time-saver; that’s technical debt on credit. Worse, developers spent 9% of their total working time just reviewing and fixing AI outputs. On a 40-hour week, that’s about three and a half hours every week cleaning up after your “productivity” tool.
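To put the 9% figure in hours, a quick sketch follows. The length of the working week is an assumption (the study figure is a share of time, not a number of hours), so the function takes it as a parameter; `weekly_review_hours` is an illustrative name.

```python
def weekly_review_hours(review_share: float, hours_per_week: float = 40.0) -> float:
    """Hours per week spent reviewing and fixing AI output.

    review_share: fraction of working time (0.09 for the 9% quoted above).
    hours_per_week: ASSUMPTION, not from the study; 40 is a nominal full-time week.
    """
    if not 0.0 <= review_share <= 1.0:
        raise ValueError("review_share must be between 0 and 1")
    return review_share * hours_per_week

for week in (40.0, 45.0):
    hours = weekly_review_hours(0.09, week)
    print(f"{week:.0f}h week -> {hours:.2f}h on AI cleanup")
```

A 40-hour week gives 3.6 hours. The figure reaches four hours only once the week stretches to about 45 hours.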

The Uplevel study of 800 developers put numbers to the damage: teams using GitHub Copilot introduced 41% more bugs while showing zero improvement in pull request throughput. You’re not coding faster. You’re introducing bugs faster.

The “Almost Right” Trap That Wastes More Time Than It Saves

Sixty-six percent of developers cite the same frustration: “AI solutions that are almost right, but not quite.” Forty-five percent say debugging AI-generated code takes longer than writing it manually. This is the core problem with AI coding assistants—they don’t fail cleanly. They generate code that looks correct, passes a quick glance, and then breaks in subtle, time-consuming ways.

Code that’s 90% correct creates 100% of the debugging burden. It’s worse than starting from scratch because you inherit AI’s assumptions, its architectural choices, its edge-case blindness. You’re not writing your own code anymore; you’re reverse-engineering someone else’s half-formed thoughts.

The Technical Debt Bomb: 30-41% Growth in 90 Days

GitClear analyzed 211 million lines of code and found a disturbing pattern: technical debt grows 30-41% within 90 days of AI adoption. Code clones increased 4x. Refactoring—the practice of improving existing code—dropped 60%, from 25% of changed lines in 2021 to under 10% in 2024. Copy/paste code rose from 8.3% to 12.3%. Furthermore, static analysis warnings jumped 4.94x while code complexity increased 3.28x.
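The figures above mix percentage-point shares with relative changes, which are easy to conflate. A small sketch of the arithmetic, treating "under 10%" as exactly 10% (an assumption that makes the refactoring drop a lower bound):

```python
def relative_change_pct(before: float, after: float) -> float:
    """Percent change from `before` to `after`, relative to `before`."""
    if before == 0:
        raise ValueError("before must be non-zero")
    return (after - before) / before * 100.0

# Shares of changed lines quoted in the text (GitClear).
refactoring = relative_change_pct(25.0, 10.0)  # "under 10%" treated as 10%
copy_paste = relative_change_pct(8.3, 12.3)

print(f"Refactoring share: {refactoring:+.0f}%")
print(f"Copy/paste share:  {copy_paste:+.0f}%")
print(f"Copy/paste rise:   {12.3 - 8.3:.1f} percentage points")
```

The refactoring share falls by 60% in relative terms, matching the text. The copy/paste share rises by four percentage points, which is roughly a 48% relative increase.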

This is the hidden bomb under your codebase. AI optimizes for “add new code fast,” not “maintain code well.” Every AI-generated function is a small loan you’re taking out against future maintainability. The interest compounds. Three months after adoption, you’re drowning in duplicated logic, unmaintainable spaghetti, and architectural debt you didn’t even realize you were accumulating.

The worst part? You don’t see it happening. Quality degrades gradually. By the time you notice the codebase has become a mess, the damage is done.

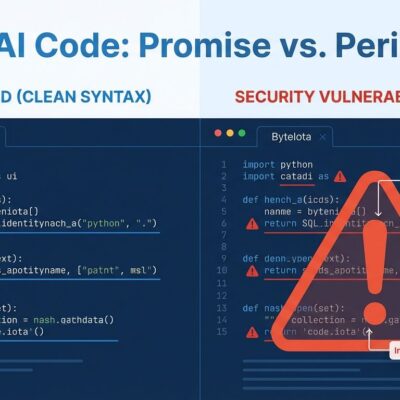

The Security Crisis Nobody Wants to Acknowledge

Forty-eight percent of AI-generated code contains security vulnerabilities. Apiiro’s 2024 research found AI code had 322% more privilege escalation paths and 153% more design flaws than human-written code. Hard-coded credentials and API keys—the kind of mistake you’d catch in a junior developer’s first PR—show up 40% more often in AI-assisted code.
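Hard-coded credentials are the easiest of these problems to screen for mechanically. The sketch below is a deliberately naive heuristic, not the method used in the research cited above: the pattern, the name `find_hardcoded_secrets`, and the sample snippet are all illustrative assumptions. It will miss many real leaks and flag some harmless strings.

```python
import re

# Heuristic (an assumption for illustration): a name containing
# password/secret/token/api_key, assigned a quoted literal of 8+ characters.
SECRET_ASSIGNMENT = re.compile(
    r"""
    \b[\w.]*(password|passwd|secret|token|api_?key)[\w.]*  # suspicious name
    \s*[:=]\s*                                             # assignment
    (["'])[^"']{8,}\2                                      # quoted literal
    """,
    re.IGNORECASE | re.VERBOSE,
)

def find_hardcoded_secrets(source: str) -> list[int]:
    """Return 1-based line numbers that look like hard-coded credentials."""
    return [
        lineno
        for lineno, line in enumerate(source.splitlines(), start=1)
        if SECRET_ASSIGNMENT.search(line)
    ]

# Made-up sample: two hard-coded values, one read from the environment.
sample = '''\
import os
API_KEY = "example-key-1234567890"
db_password = 'hunter2hunter2'
api_key = os.environ["API_KEY"]
'''

print(find_hardcoded_secrets(sample))  # flags lines 2 and 3, not line 4
```

The last line of the sample shows the safer pattern: the code names the secret, but the value lives in the environment rather than in the repository.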

This isn’t surprising. AI doesn’t understand security. It pattern-matches on code it’s seen before, including insecure code. It has no concept of threat models, no understanding of what data should be trusted, no sense of privilege boundaries. It writes code that works—until an attacker finds the hole.

The Trust Gap: Using Tools We Don’t Believe In

Here’s the strangest part of this story: 96% of developers admit they don’t “fully” trust AI-generated code. Only 3% report high trust. Yet 73% of engineering teams use AI tools daily, up from just 18% in 2024. Meanwhile, developer trust in AI has declined from over 70% in 2023-2024 to 60% by 2025. Nevertheless, adoption keeps climbing.

We’ve created a codependent relationship with tools we don’t trust. Convenience beats judgment. The illusion of productivity overrides the evidence of harm. It’s easier to accept the autocomplete than to question whether it’s making you better.

When AI Actually Helps (And When It Destroys Value)

AI coding assistants aren’t useless. They excel at the grunt work: boilerplate generation, configuration files, syntax conversion between languages, documentation templates. Repetitive patterns where context doesn’t matter and mistakes are obvious.

They fail catastrophically at everything that requires judgment: complex architectural decisions, business logic implementation, security-critical code, performance-sensitive operations, anything requiring deep understanding of your system. The METR study found that experienced developers working on familiar codebases, which is to say real-world conditions, were hit hardest by AI’s limitations. One developer noted that AI “doesn’t pick the right location to make edits” and “made some weird changes in other parts” of the code.

AI is a junior developer at best. It can handle the repetitive tasks you’d delegate to an intern. However, you wouldn’t let an intern design your authentication system or refactor your core business logic. So why are we letting AI do it?

The Verdict: Speed Is Not Productivity

Writing code quickly and building software productively are not the same thing. AI makes you feel fast because it generates text quickly. But the METR study found experienced developers were 19% slower overall with AI, and the research on code quality adds the bugs, the security holes, the technical debt, and the maintenance burden to the bill.

The real productivity metric isn’t how fast you write code. It’s how fast you ship reliable, maintainable software. By that measure, AI coding assistants are making most developers worse, not better.

Bill Harding, founder of GitClear, put it plainly: “AI code assistants excel at adding code quickly, but they can cause ‘AI-induced tech debt.’” The code they generate today becomes the problem you’re debugging next quarter.

If you’re using AI for boilerplate and learning new APIs, you’re probably fine. If you’re accepting AI suggestions for anything that matters (architecture, logic, security), you’re likely shipping technical debt faster than you realize. The productivity statistics and the developer discussions of the productivity paradox point to the same conclusion: the emperor has no clothes, and we’re all pretending we don’t notice.