AI coding tools hit critical mass in 2026: 73% of engineering teams use them daily, up from just 18% in 2024, and 92% of developers use AI somewhere in their workflow. GitHub Copilot alone generates 46% of code written by developers. Yet trust remains strikingly low: depending on the survey, only 29-46% of developers trust AI outputs, and 96% admit they don’t “fully” trust AI-generated code. This paradox of massive adoption paired with minimal trust reveals a fundamental tension in modern software development.

Developers are caught between productivity pressure and professional responsibility. They’re using tools they don’t trust, creating code at scale without confidence in its quality or security. With 42% of all code now AI-generated and security studies finding flaws in 40-62% of AI code, that combination isn’t sustainable.

Mainstream Adoption, Minimal Trust

The numbers tell a contradictory story. Daily AI coding tool usage exploded from 18% in 2024 to 41% in 2025, reaching 73% in 2026—one of the fastest developer tool adoption curves in history. Simultaneously, the Stack Overflow 2025 Developer Survey of 49,000+ respondents found that while 84% use AI tools, positive sentiment dropped from 70%+ in 2023-2024 to just 60% in 2025. More striking: 46% of developers actively distrust AI tool accuracy, up from 31% in 2024.

Trust isn’t catching up to adoption—it’s moving in the opposite direction. Only 3% of developers “highly trust” AI-generated code, and 71% refuse to merge AI code without manual review. This creates a psychological tension: developers are shipping code they fundamentally don’t believe in, not out of confidence, but out of competitive pressure, organizational expectations, or fear of falling behind peers.

Feeling Fast Doesn’t Mean Being Fast

Vendor claims paint a rosy picture: GitHub reports 55% faster task completion, while Microsoft and Google cite 20-55% productivity improvements. Developers echo the optimism, reporting that they feel about 20% faster with AI assistance. Objective research, however, reveals a perception gap. A July 2025 study by the nonprofit Model Evaluation & Threat Research (METR) found that experienced developers took 19% longer to complete tasks in controlled tests with AI tools, even though they believed the tools had made them 20% faster.
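
To make the perception gap concrete, here is a small worked example. It is a sketch under stated assumptions: the 60-minute baseline task is an illustrative figure, not a number from the METR study, and both percentages are read as changes in task completion time.

```python
# Worked arithmetic for the perception gap described above.
# ASSUMPTION: the 60-minute baseline is illustrative, not taken from the study.
BASELINE_MINUTES = 60.0

PERCEIVED_SPEEDUP = 0.20  # developers believed AI made them 20% faster
MEASURED_SLOWDOWN = 0.19  # measured completion time was 19% longer


def perceived_minutes(baseline: float) -> float:
    """Completion time if the believed 20% speedup were real."""
    return baseline * (1 - PERCEIVED_SPEEDUP)


def measured_minutes(baseline: float) -> float:
    """Completion time under the measured 19% slowdown."""
    return baseline * (1 + MEASURED_SLOWDOWN)


def perception_gap(baseline: float) -> float:
    """Minutes by which the measured time exceeds the perceived time."""
    return measured_minutes(baseline) - perceived_minutes(baseline)


if __name__ == "__main__":
    print(f"perceived: {perceived_minutes(BASELINE_MINUTES):.1f} min")
    print(f"measured:  {measured_minutes(BASELINE_MINUTES):.1f} min")
    print(f"gap:       {perception_gap(BASELINE_MINUTES):.1f} min")
```

Under these assumptions, a task the developer believes took about 48 minutes actually took about 71, a gap of roughly 23 minutes on a one-hour task.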

Bain & Company’s September 2025 report describes real-world productivity savings as “unremarkable” despite vendor hype. The organizational picture is weaker still: even with 92.6% monthly adoption and 27% of production code AI-generated, independent research converges on roughly 10% productivity gains, far from the promised 55%. More concerning, enterprises see a 1.5% drop in delivery throughput and a 7.2% drop in stability when AI adoption increases by 25%.

The bottleneck shifts rather than disappears. Code gets written faster, but review queues grow, QA becomes saturated, and security validation lags. More code doesn’t automatically mean more value—it can mean more surface area for defects and more coordination overhead.
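
The shifting bottleneck can be sketched with a toy model of a serial delivery pipeline, where delivery is capped by the slowest stage. Every capacity below, and the 50% coding speedup, is a hypothetical number chosen for illustration; the `Stage` class and helper functions are likewise invented for this sketch.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Stage:
    name: str
    capacity_per_week: float  # changes this stage can process per week


def throughput(stages: list[Stage]) -> float:
    """A serial pipeline delivers no faster than its slowest stage."""
    return min(stage.capacity_per_week for stage in stages)


def bottleneck(stages: list[Stage]) -> str:
    """Name of the stage that caps delivery."""
    return min(stages, key=lambda stage: stage.capacity_per_week).name


def speed_up(stages: list[Stage], name: str, factor: float) -> list[Stage]:
    """Return a copy of the pipeline with one stage's capacity scaled."""
    return [
        replace(s, capacity_per_week=s.capacity_per_week * factor)
        if s.name == name
        else s
        for s in stages
    ]


# ASSUMPTION: all capacities are hypothetical, for illustration only.
BEFORE = [
    Stage("coding", 20),
    Stage("review", 25),
    Stage("qa", 30),
    Stage("security", 28),
]
AFTER = speed_up(BEFORE, "coding", 1.5)  # hypothetical 50% coding speedup

if __name__ == "__main__":
    for label, pipeline in (("before", BEFORE), ("after", AFTER)):
        print(label, bottleneck(pipeline), throughput(pipeline))
```

In this toy pipeline, coding output rises by 50% (20 to 30 changes per week) but delivery rises only from 20 to 25, because review becomes the limiting stage. The remaining 5 changes per week pile up in the review queue.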

The Illusion of Correctness

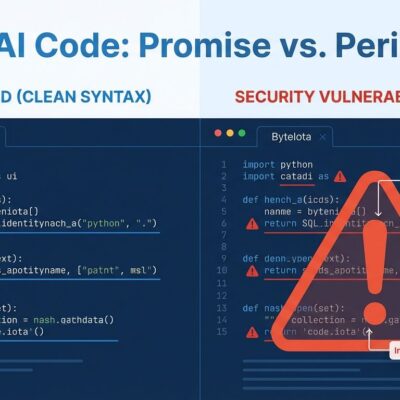

Security research exposes the real risk: 40-62% of AI-generated code contains design flaws or known vulnerabilities, and AI-generated code introduces 15-18% more security issues than human-written code. A Stanford study found that developers with AI access wrote “significantly less secure code” than those without. Yet 80% of developers wrongly believe AI code is more secure, a dangerous misconception that reduces scrutiny precisely when heightened vigilance is needed.

The danger isn’t obvious breakage; it’s polished-looking code that conceals serious flaws. Developers report encountering plausible code that doesn’t work, confidently wrong explanations, references to APIs that don’t exist, deprecated methods, and subtle security vulnerabilities that slip past casual review. The code looks professional, so it gets trusted. That’s the illusion of correctness.

Production consequences are mounting: 69% of developers and AppSec engineers report discovering AI-introduced vulnerabilities in their systems, and one in five reports an incident with material business impact. With AI-generated code projected to grow from 42% today to 63%+ by 2027, the volume of vulnerable code will grow with it unless review and scanning keep pace.

Why Developers Use Tools They Don’t Trust

If trust is so low, why is adoption so high? The answer lies in pragmatic compromise. Developers cite competitive pressure as a primary driver: they can’t afford to fall behind colleagues or industry expectations. Organizations mandate or strongly encourage AI tool use. Meanwhile, real productivity gains on specific tasks (30-60% time savings on tests, documentation, and refactoring) make the tools genuinely useful for certain workflows.

However, the frustrations are real. The top complaint, cited by 66% of developers, is “AI solutions that are almost right, but not quite,” which can take more work to fix than writing the code from scratch. The second frustration, cited by 45%, is that debugging AI-generated code takes longer than writing it manually. GitHub Copilot’s metrics illustrate the gap: it generates suggestions for 46% of code, but only 30% of those suggestions are accepted.

Developers adopt a “use but verify” philosophy, treating AI output as a junior developer’s draft rather than final code. Yet 59% admit using AI-generated code they don’t fully understand—a concerning trend that creates technical debt. The industry has collectively decided AI tools are flawed but useful assistants, developing coping mechanisms like strict code review and security scanning rather than addressing the fundamental trust deficit.

Key Takeaways

- The adoption-trust gap is unsustainable: 73% daily usage paired with 46% active distrust creates professional and security risks that will force change—either trust improves through better AI models and validation tools, or a major security incident triggers industry-wide backlash.

- Productivity perception doesn’t match reality: Developers feel 20% faster but measured 19% slower in METR’s controlled study, while organizational gains plateau at roughly 10% despite 92.6% adoption. Individual task speedups don’t translate to delivery throughput when review queues, QA, and security validation become bottlenecks.

- Security vulnerabilities scale with AI adoption: 40-62% of AI code contains flaws, introducing 15-18% more vulnerabilities than human code. With AI-generated code projected to hit 63%+ by 2027, comprehensive security scanning and governance are mandatory, not optional.

- Treat AI output as draft code, never final: The “illusion of correctness”—polished code concealing serious flaws—demands treating AI suggestions like a junior developer’s work. Manual review, comprehensive testing, and security scanning are non-negotiable for production code.

- Enterprise governance offers a model: Larger organizations implementing formal review policies, gated workflows for production paths, and security validation processes see better outcomes than teams adopting AI tools without guardrails. The lesson: speed without governance creates risk.
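
The guardrails in these takeaways (manual review, testing, security scanning, gated production paths) can be expressed as a simple merge policy. The following is a minimal sketch, not any organization's actual policy: `ChangeRequest`, `merge_blockers`, and the second-approval rule for production paths are hypothetical names and rules invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChangeRequest:
    """Hypothetical record of a proposed change and its checks."""
    ai_assisted: bool
    touches_production_path: bool
    human_reviewed: bool
    tests_passed: bool
    security_scan_passed: bool
    second_approval: bool = False  # ASSUMPTION: extra sign-off for prod paths


def merge_blockers(change: ChangeRequest) -> list[str]:
    """Return the reasons a change may not merge; an empty list means it may."""
    blockers = []
    if not change.tests_passed:
        blockers.append("tests must pass")
    if change.ai_assisted:
        # Treat AI output as a draft: it always needs human eyes and a scan.
        if not change.human_reviewed:
            blockers.append("AI-assisted code needs manual review")
        if not change.security_scan_passed:
            blockers.append("AI-assisted code needs a passing security scan")
        # Gated workflow: production paths require an additional approval.
        if change.touches_production_path and not change.second_approval:
            blockers.append("production-path change needs a second approval")
    return blockers


if __name__ == "__main__":
    draft = ChangeRequest(
        ai_assisted=True,
        touches_production_path=True,
        human_reviewed=True,
        tests_passed=True,
        security_scan_passed=False,
    )
    for reason in merge_blockers(draft):
        print("blocked:", reason)
```

Returning a list of reasons rather than a single boolean is a deliberate choice in this sketch: it tells the author exactly which check is missing instead of only rejecting the merge.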

The developer community stands at an inflection point. AI coding tools are here to stay—adoption is too widespread and competitive pressure too strong to reverse course. But the current state isn’t sustainable. Either trust must catch up through better models, validation tools, and governance frameworks, or the industry faces a security and technical debt crisis. The question isn’t whether to use AI coding tools, but how to use them responsibly while acknowledging their fundamental limitations.