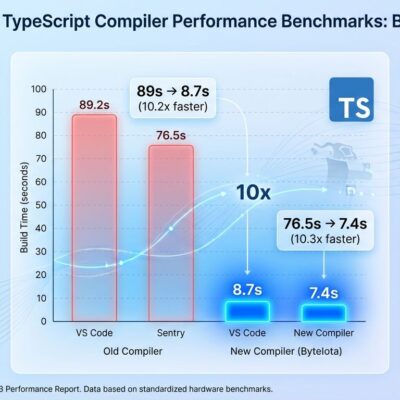

AI-generated code introduces 1.7 times more bugs than human-written code, according to CodeRabbit’s analysis of 470 GitHub pull requests published in December 2025. While 84% of developers now use AI tools that write 41% of all code, the productivity paradox is stark: teams ship code 20% faster but experience 23.5% more production incidents. Logic errors appear 1.75 times more frequently in AI code, security vulnerabilities increase by 1.57 times, and readability problems triple.

This isn’t speculation; it’s measured across production systems. The AI coding revolution promised speed. It delivered on that promise, then quietly handed us a quality crisis.

The Productivity Paradox: 20% Faster, 23.5% More Incidents

The math is brutal. Organizations using AI coding assistants achieve 20% faster pull request velocity year-over-year. That’s real productivity, until you measure what happens after merge: production incidents surged 23.5% over the same period. Teams are shipping more code faster, but the hidden cost is a significantly higher failure rate in production.

The gap between claimed and measured productivity is wider than advertised. Ninety-two percent of developers report 25% productivity boosts from AI tools. Meanwhile, 45.2% admit that debugging AI-generated code takes longer than debugging human-written code, and projects relying heavily on AI code saw a 41% increase in bugs. Speed without quality isn’t productivity; it’s technical debt with a shorter fuse.

The industry optimized for velocity metrics (deployment frequency, PR volume, cycle time) while ignoring defect density. We’re now discovering that shipping 20% faster with 23.5% more incidents isn’t the win we thought it was.
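To see how the two headline numbers combine, here is a back-of-the-envelope sketch. It assumes that 20% faster PR velocity means 20% more merged pull requests, which the figures above do not state explicitly. The function name is illustrative.

```python
def incident_rate_change(throughput_growth: float, incident_growth: float) -> float:
    """Relative change in incidents per unit of shipped work.

    Both arguments are fractional growth rates, e.g. 0.20 for +20%.
    """
    return (1 + incident_growth) / (1 + throughput_growth) - 1


# Figures quoted above: +20% PR velocity, +23.5% production incidents.
# ASSUMPTION: velocity growth equals growth in merged PR count.
change = incident_rate_change(0.20, 0.235)
print(f"Incidents per merged PR: {change:+.1%}")  # prints "Incidents per merged PR: +2.9%"
```

Under that assumption the per-PR incident rate rises by roughly 3%, but the absolute number of incidents still grows by 23.5%, and that absolute number is what on-call engineers and users actually feel.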

What AI Gets Wrong: Logic, Security, Performance

AI code exhibits specific, measurable weaknesses. Logic and correctness errors appear 1.75 times more often than in human code. Security vulnerabilities increase by 1.57 times overall, spiking to 2.74 times for XSS attacks. Performance suffers too: excessive I/O operations are 8 times more common in AI-generated code, which tends to favor code clarity over resource efficiency. Readability problems triple.
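As an illustration of the XSS category, the sketch below contrasts unescaped and escaped output. The `render_comment` helpers are hypothetical and build HTML by hand only to keep the example short; in a real application a templating engine with auto-escaping would normally do this work.

```python
import html


def render_comment_unsafe(author: str, body: str) -> str:
    # Interpolates user input directly into markup: a <script> tag in
    # `body` would be parsed and executed by the browser.
    return f"<p><b>{author}</b>: {body}</p>"


def render_comment(author: str, body: str) -> str:
    # html.escape converts &, <, > and (by default) quotes to entities,
    # so user input is displayed as text instead of being parsed as HTML.
    return f"<p><b>{html.escape(author)}</b>: {html.escape(body)}</p>"


payload = "<script>alert('xss')</script>"
print(render_comment_unsafe("mallory", payload))  # script tag survives intact
print(render_comment("mallory", payload))         # script tag is neutralized
```

Both versions look equally plausible in a diff, which is part of why this class of bug slips through review.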

The security numbers are particularly concerning. Thirty-six percent of developers using AI assistants unknowingly introduced SQL injection vulnerabilities, compared to only 7% of those coding manually. Improper password handling is 1.88 times more likely in AI code, and insecure object references are 1.91 times more likely. These aren’t edge cases; they’re predictable failure modes.

CodeRabbit’s analysis identified a pattern: AI often omits null checks, early returns, guardrails, and comprehensive exception logic—the defensive coding practices that prevent real-world outages. AI generates surface-level correctness without proper control-flow protections. As a result, it produces code that looks right, passes initial review, then fails under edge cases you didn’t anticipate.
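A minimal sketch of what those missing guardrails look like in practice. The function and the shape of the `orders` records are hypothetical, chosen only to show the pattern.

```python
from typing import Optional


def average_order_value_naive(orders):
    # Looks right and works on the happy path, but raises
    # ZeroDivisionError on an empty list, TypeError on None input,
    # and KeyError when an order has no "total" field.
    return sum(o["total"] for o in orders) / len(orders)


def average_order_value(orders: Optional[list]) -> Optional[float]:
    # Guard: missing or empty input returns early instead of crashing.
    if not orders:
        return None

    totals = []
    for order in orders:
        total = order.get("total") if isinstance(order, dict) else None
        # Guard: skip malformed records rather than failing the whole batch.
        if isinstance(total, bool) or not isinstance(total, (int, float)):
            continue
        totals.append(total)

    # Guard: every record may have been malformed.
    if not totals:
        return None
    return sum(totals) / len(totals)
```

Whether malformed records should be skipped, logged, or rejected depends on the business rule. The point is that the decision is made explicitly rather than left to an unhandled exception.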

AI Code Quality 2026: The Industry Shift to Quality-First

“2025 was the year of speed, and 2026 will be the year of quality,” according to CodeRabbit’s December 2025 report. The industry is pivoting hard. Engineering leaders are shifting KPIs from raw throughput (velocity, deployment frequency) to quality metrics (defect density, review load, merge confidence scores, test coverage, maintainability).

Companies are now tracking AI defect metrics with the same rigor previously reserved for security incidents. SonarQube launched Agentic Analysis in March 2026, bringing real-time AI code verification directly into coding agent workflows. The tool applies repository-specific quality profiles automatically as AI generates code, closing the verification gap before merge. In addition, SonarQube’s 2026.1 release integrates with Claude Code, Cursor, Windsurf, and Gemini—recognition that AI coding tools need guardrails, not just acceleration.

The numbers driving this shift are stark. The code review automation market exploded from $550 million to $4 billion in 2025. CodeRabbit now processes over 13 million pull requests across 2 million repositories. Even so, analysts project a 40% quality deficit for 2026: more code entering the pipeline than reviewers can validate with confidence. The industry recognizes that speed-only optimization has hit a wall.

Why AI Makes These Mistakes

AI models lack local business logic understanding. They produce patterns learned from training data without comprehending repository-specific conventions or business rules. If string-concatenated SQL queries appear frequently in training datasets, AI assistants readily reproduce them—vulnerability and all. The models optimize for “looks correct” rather than “is correct under all conditions.”
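The string-concatenated SQL pattern is easy to demonstrate. The sketch below uses Python’s built-in `sqlite3` module with an in-memory database; the `users` table and the helper names are made up for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])


def find_user_unsafe(name: str):
    # String concatenation: the input becomes part of the SQL text.
    query = "SELECT id, name FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def find_user(name: str):
    # Parameterized query: the driver passes `name` as data, not SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


malicious = "x' OR '1'='1"
print(find_user_unsafe(malicious))  # returns every row in the table
print(find_user(malicious))         # returns no rows
```

Both functions behave identically for ordinary input such as `"alice"`, so a quick manual test would not reveal the difference. That is the “looks correct” trap.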

This explains the “almost right but not quite” phenomenon: 66% of developers say AI solutions fit that description, and only 29-46% trust AI outputs. The trust gap exists because developers have learned that AI code requires more scrutiny, not less. Humans and AI make similar types of mistakes; AI simply produces them at much greater volume.

Using AI Responsibly: Verification, Not Just Acceleration

The 1.7 times quality gap isn’t inevitable if teams implement verification workflows. Here’s what responsible AI coding looks like in 2026:

- Use AI for boilerplate and repetitive tasks: CRUD operations, test generation, documentation, and code translation between languages. Skip it for security-critical authentication logic, payment processing, and cryptographic implementations.

- Implement automated verification before merge. SonarQube Agentic Analysis, CodeRabbit, and similar tools catch AI-specific failure modes. Verify in real time as code is generated, not after deployment.

- Shift metrics from velocity to quality. Track defect density and merge confidence alongside deployment frequency, so you measure both speed and reliability, not just throughput.

- Track AI-generated commits separately. You can’t improve what you don’t measure. Correlate AI commits with production incidents to understand real impact.

- Don’t trust “almost right” code in production. If you wouldn’t ship it without AI assistance, don’t ship it with AI assistance. Quality standards don’t change because generation speed increased.
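Tracking AI-generated commits separately and tying them to incidents can start very simply. The sketch below is one possible shape, not a prescribed method: the `Commit` record, the `ai_assisted` flag (which might come from a commit trailer or PR label), and the sample data are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Commit:
    sha: str
    ai_assisted: bool   # ASSUMPTION: tagged via a commit trailer or PR label
    lines_changed: int
    incidents: int      # production incidents traced back to this commit


def defect_density(commits: list[Commit]) -> dict[str, float]:
    """Incidents per 1,000 changed lines, split by AI-assisted vs. not."""
    totals = {"ai": [0, 0], "human": [0, 0]}  # [incidents, lines]
    for c in commits:
        bucket = totals["ai" if c.ai_assisted else "human"]
        bucket[0] += c.incidents
        bucket[1] += c.lines_changed
    return {
        group: (1000 * incidents / lines if lines else 0.0)
        for group, (incidents, lines) in totals.items()
    }


# Made-up sample data, for illustration only.
history = [
    Commit("a1", True, 400, 1),
    Commit("b2", True, 600, 1),
    Commit("c3", False, 500, 0),
    Commit("d4", False, 500, 1),
]
print(defect_density(history))  # {'ai': 2.0, 'human': 1.0}
```

Normalizing by changed lines keeps the comparison fair when one group ships far more code than the other. Reporting this number next to deployment frequency puts speed and reliability on the same dashboard.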

AI coding tools accelerated development. They also accelerated defect introduction. The next phase, AI plus automated verification, aims to deliver both speed and quality. Organizations that continue optimizing for speed alone will accumulate technical debt faster than they can service it. In 2026, quality is the competitive differentiator, not just velocity.