LangChain dominates AI agent frameworks with 47 million PyPI downloads, but performance benchmarks reveal critical tradeoffs developers need to understand. CrewAI consumes 3x more tokens than LangChain for simple tasks due to “managerial overhead,” yet deploys multi-agent teams 40% faster than LangGraph. AutoGen offers Microsoft-backed enterprise features but raises production-readiness concerns because it may merge with Semantic Kernel. The framework you choose depends on your workflow: LangGraph for complex stateful processes, CrewAI for rapid role-based prototyping, AutoGen for conversational collaboration with human oversight.

Enterprises are shifting from simple RAG (question-answering) to agentic architectures where AI autonomously executes multi-step tasks. Choosing the wrong framework means either paying up to 3x more in tokens or spending weeks on complex orchestration. Here’s what the performance data actually shows.

The 3x Token Cost: CrewAI’s Performance Tradeoff

CrewAI consumes 3x more tokens than LangChain for single tool calls and takes 3x longer, even for straightforward operations. This isn’t a bug—it’s architectural. CrewAI’s Planner and Analyst personas validate processes through multi-step self-review, creating what researchers call “managerial overhead.” Instead of immediately returning retrieved data, CrewAI repeatedly checks its own work. The cost appears regardless of task complexity.
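The mechanism can be illustrated with a toy token-accounting model. It assumes, hypothetically, that every persona step is a separate LLM call that re-sends the accumulated transcript as input; the step sizes are made-up numbers chosen for illustration, not measurements of CrewAI or LangChain.

```python
# Toy model of "managerial overhead": every extra step (plan, review, ...)
# is another LLM call that re-reads the growing transcript.
# All token counts are hypothetical, chosen only for illustration.

def total_tokens(steps: list[int], prompt_tokens: int) -> int:
    """Sum input + output tokens when each call re-sends the full history.

    steps: output tokens produced by each sequential LLM call.
    prompt_tokens: size of the initial task prompt plus the tool result.
    """
    history = prompt_tokens
    total = 0
    for output in steps:
        total += history + output  # input (full history) + generated output
        history += output          # the output joins the context for the next call
    return total

PROMPT = 500
direct = total_tokens([200], PROMPT)               # answer immediately
reviewed = total_tokens([100, 200, 100], PROMPT)   # plan, answer, self-review

print(direct, reviewed, round(reviewed / direct, 1))  # 700 2300 3.3
```

With these assumed numbers the reviewed path costs about 3.3x the direct call. Each added review step widens the gap because it re-reads everything before it, which is why the overhead shows up even on simple tasks.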

When handling complex state persistence and data filtering, CrewAI consumed nearly 2x the tokens and took over 3x as long as LangChain. However, this thoroughness serves a purpose. CrewAI excels at complex state transitions and multi-factor decision-making where validation prevents costly mistakes. The framework’s exhaustive process justifies the overhead for mission-critical workflows—but it’s wasteful for simple agent tasks.

At scale, 3x token consumption translates directly to 3x cost. The question isn’t whether CrewAI’s validation adds value, but whether your use case justifies paying triple for it. For content production pipelines and report generation requiring quality assurance, the answer is yes. For basic data retrieval, skip it.
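The linear relationship is easy to check with back-of-the-envelope arithmetic. This sketch assumes a single flat price per million tokens; the price, request volume, and tokens per request are placeholder values, not published rates.

```python
# Back-of-the-envelope monthly cost under an assumed flat per-token price.
# Price, volume, and per-request token counts are placeholders.

def monthly_cost(requests_per_day: int, tokens_per_request: int,
                 usd_per_million_tokens: float, days: int = 30) -> float:
    tokens = requests_per_day * tokens_per_request * days
    return tokens / 1_000_000 * usd_per_million_tokens

baseline = monthly_cost(10_000, 1_000, 3.00)      # lean single-call agent
overhead = monthly_cost(10_000, 3 * 1_000, 3.00)  # same workload at 3x tokens

print(f"${baseline:,.2f} vs ${overhead:,.2f}")  # $900.00 vs $2,700.00
```

Under flat pricing the 3x multiplier passes straight through to the bill. Providers that price input and output tokens differently would shift the exact ratio, depending on how the extra tokens split between the two.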

LangChain’s 47 Million Downloads: Ecosystem and Use Cases

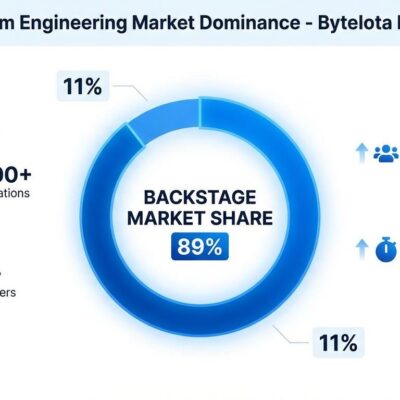

LangChain’s market dominance isn’t accidental. With 47 million PyPI downloads and 500 million+ cumulative ecosystem downloads, it offers the largest integration library and most mature community resources. LangChain’s core repository alone logged 221 million downloads in the last month. This ecosystem advantage reduces risk for production systems: when you hit edge cases, someone has likely already solved them.

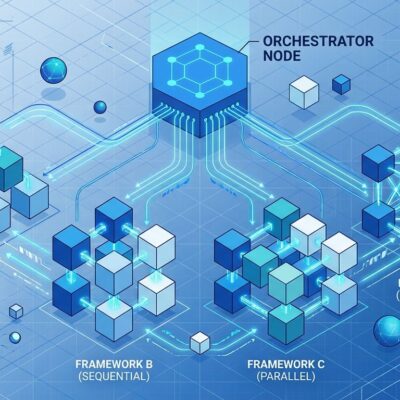

LangGraph, LangChain’s workflow orchestration tool, excels at complex stateful workflows with branching logic and conditional execution. The graph-based design enables precise control over state transitions, parallel processing, and distributed systems. Developers deploy it for complex RAG workflows that adapt based on query type, multi-tool scenarios requiring conditional tool selection, and applications scaling across multiple services.
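To make “graph-based” concrete, here is a framework-free sketch of the same idea: nodes are functions over a shared state, and a conditional edge picks the next node by inspecting that state. The node names, the routing rule, and the `run` loop are hypothetical illustrations; LangGraph’s actual API differs and should be taken from its documentation.

```python
# Framework-free sketch of a stateful graph with one conditional edge.
# Node names and the routing rule are hypothetical; this is not LangGraph's API.
from typing import Callable, Optional, TypedDict

class State(TypedDict):
    query: str
    route: str
    answer: str

def classify(state: State) -> State:
    # Hypothetical rule: queries that ask to "compare" take the multi-tool path.
    state["route"] = "multi_tool" if "compare" in state["query"].lower() else "rag"
    return state

def rag_lookup(state: State) -> State:
    state["answer"] = f"retrieved answer for: {state['query']}"
    return state

def multi_tool(state: State) -> State:
    state["answer"] = f"combined tool results for: {state['query']}"
    return state

NODES: dict[str, Callable[[State], State]] = {
    "classify": classify,
    "rag": rag_lookup,
    "multi_tool": multi_tool,
}
# Static edges map a node to its successor; None ends the run.
EDGES: dict[str, Optional[str]] = {"rag": None, "multi_tool": None}
# Conditional edges choose the successor by reading the current state.
CONDITIONAL: dict[str, Callable[[State], str]] = {"classify": lambda s: s["route"]}

def run(query: str, start: str = "classify") -> State:
    state: State = {"query": query, "route": "", "answer": ""}
    node: Optional[str] = start
    while node is not None:
        state = NODES[node](state)
        node = CONDITIONAL[node](state) if node in CONDITIONAL else EDGES[node]
    return state

print(run("Compare plan A and plan B")["answer"])
print(run("What is our refund policy?")["answer"])
```

The routing decision lives in the graph structure rather than inside a node, so adding a branch (for example, a clarification step) means registering a node and an edge without rewriting the existing ones.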

The tradeoff is a steeper learning curve. LangChain’s multiple abstraction layers complicate custom implementations, and its extensive dependency tree requires constant updates. When you need maximum flexibility and long-term stability, LangChain’s ecosystem maturity justifies the investment. For simpler workflows, the complexity is overkill.


CrewAI’s 40% Deployment Speed Advantage

CrewAI deploys multi-agent teams 40% faster than LangGraph for standard business workflows. This speed advantage comes from its role-based collaboration model where agents function like team members with specific responsibilities—researcher, writer, editor. If your workflow naturally maps to roles and handoffs, CrewAI makes implementation intuitive rather than forcing you to architect complex graphs.

The framework removed LangChain as a dependency in 2026, becoming more reliable as a standalone solution. It includes structured memory for agent coordination and easy human checkpoint insertion for approval workflows. CrewAI shines in content production pipelines where researchers gather data, writers create content, and editors review quality. It handles fraud detection requiring multi-agent pattern analysis and report generation with standardized formats and approval stages.
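The role-based model reduces to a sequence of handoffs with a shared record and an approval gate at the end. The sketch below uses plain functions in place of LLM-backed agents; `RoleStep`, `Pipeline`, and the `approve` hook are hypothetical names, not CrewAI’s API.

```python
# Role-based handoff sketch: each role is a plain function standing in for an
# LLM-backed agent. All names here are hypothetical, not CrewAI's API.
from dataclasses import dataclass, field
from typing import Callable, Optional

@dataclass
class RoleStep:
    name: str
    act: Callable[[str], str]  # transforms the previous role's output

@dataclass
class Pipeline:
    roles: list[RoleStep]
    approve: Callable[[str], bool]                # human checkpoint before release
    log: list[str] = field(default_factory=list)  # shared record of every handoff

    def run(self, task: str) -> Optional[str]:
        work = task
        for role in self.roles:
            work = role.act(work)
            self.log.append(f"{role.name}: {work}")
        # Release the result only if the reviewer signs off; otherwise return None.
        return work if self.approve(work) else None

pipeline = Pipeline(
    roles=[
        RoleStep("researcher", lambda t: f"notes on {t}"),
        RoleStep("writer", lambda n: f"draft from {n}"),
        RoleStep("editor", lambda d: d.replace("draft", "edited draft", 1)),
    ],
    approve=lambda text: text.startswith("edited"),  # stand-in for a human reviewer
)
print(pipeline.run("Q3 fraud trends"))
```

Because the roles are an ordered list, adding a stage (say, a fact-checker between writer and editor) is a one-line change, which is the intuition behind the faster setup for workflows that already map to roles.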

For startups and proof-of-concept work, time-to-production matters more than token efficiency. The 40% deployment speed advantage offsets higher token costs during development. Once you prove the concept, you can optimize or migrate to LangGraph if token costs become prohibitive. Starting fast and optimizing later beats a perfect architecture that ships late.

AutoGen: Enterprise Features with Production Caveats

Microsoft’s AutoGen provides enterprise-oriented infrastructure with advanced error handling, extensive logging, and cross-language support (.NET and Python). The three-layer API design separates concerns: Core for message passing, AgentChat for rapid prototyping, Extensions for plugins. Developer tools like AutoGen Studio (no-code GUI) and AutoGen Bench (benchmarking suite) accelerate development. For Microsoft-centric enterprises, the ecosystem integration is compelling.

However, community discussions flag a critical concern: “AutoGen is popular but not production-ready. It’s undergoing rapid changes and may merge into Semantic Kernel, forcing future migration work.” The concern has substance: the framework’s trajectory points toward consolidation within Microsoft’s broader AI infrastructure. AutoGen excels at conversational workflows with human-in-the-loop oversight, iterative tasks like brainstorming, and scenarios requiring Microsoft ecosystem integration.
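A conversational workflow with human oversight boils down to a turn-taking loop that ends only when both an agent and a human agree. This plain-Python sketch assumes two hypothetical agents (a brainstormer and a critic) and an `APPROVED` reply as the termination convention; it does not use AutoGen’s API.

```python
# Two-agent conversation with a human gate, in plain Python.
# Agent behaviors and the "APPROVED" convention are hypothetical, not AutoGen's API.
from typing import Callable

Agent = Callable[[list[str]], str]

def brainstormer(history: list[str]) -> str:
    # Proposes a new numbered idea on each of its turns.
    return f"idea {len(history) // 2 + 1}"

def critic(history: list[str]) -> str:
    # Hypothetical rule: only the third idea is good enough.
    return "APPROVED" if history[-1] == "idea 3" else "needs work"

def converse(a: Agent, b: Agent, human_ok: Callable[[str], bool],
             max_turns: int = 10) -> list[str]:
    history: list[str] = []
    for _ in range(max_turns):
        history.append(a(history))
        reply = b(history)
        history.append(reply)
        # Stop only when the critic approves AND the human signs off on the idea.
        if reply == "APPROVED" and human_ok(history[-2]):
            break
    return history

print(converse(brainstormer, critic, human_ok=lambda idea: True))
# ['idea 1', 'needs work', 'idea 2', 'needs work', 'idea 3', 'APPROVED']
```

The `max_turns` cap matters in practice: if the human keeps rejecting approved ideas, the loop still terminates instead of spending tokens indefinitely.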

The risk is timing. While AutoGen offers robust enterprise features now, potential framework consolidation creates migration uncertainty within 6-12 months. Developers need to weigh Microsoft backing and enterprise tooling against the possibility of forced migration. For long-term production commitments, wait until the merge status clarifies. For short-term projects or Microsoft-locked environments, AutoGen delivers value.

AI Agent Framework Selection: When to Choose Each

Framework selection depends on workflow architecture, not download counts or marketing hype. Choose LangGraph when you need complex orchestration with branching logic, precise control over state transitions, or distributed systems scaling across services. The learning curve and complexity are justified when flexibility matters more than deployment speed.

Choose CrewAI when workflows map naturally to roles and responsibilities—researcher hands off to writer, writer hands off to editor. Accept the 3x token cost in exchange for 40% faster deployment. The overhead makes sense for mission-critical validation workflows but wastes resources on simple single-agent tasks. Use CrewAI for rapid prototyping, then migrate to LangGraph if token costs become prohibitive at scale.

Choose AutoGen when conversational workflows with human-in-the-loop oversight match your architecture, or when Microsoft ecosystem integration is non-negotiable. The enterprise features and .NET support provide value for Microsoft-centric organizations. However, avoid AutoGen for long-term production commitments until framework consolidation plans clarify. Short-term projects and proof-of-concept work face less migration risk.

For simple single-agent tasks requiring straightforward tool calls, skip all three frameworks. Use direct LLM API calls instead. The framework overhead—whether LangChain’s abstractions, CrewAI’s validation, or AutoGen’s enterprise features—adds complexity without corresponding value. Match framework architecture to actual workflow complexity, not aspirational future needs.
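The selection rules in this section can be condensed into a small decision function. The `Workflow` fields are hypothetical labels for the criteria described above, and the final fallback is an assumption rather than a rule stated in the text.

```python
# The selection rules above, encoded as a simple decision function.
# Field names are hypothetical labels for the criteria in the text.
from dataclasses import dataclass

@dataclass
class Workflow:
    multi_step: bool           # more than one straightforward tool call
    branching_state: bool      # branching logic, state transitions, multi-service scaling
    role_handoffs: bool        # e.g. researcher -> writer -> editor
    conversational_hitl: bool  # iterative dialogue with human-in-the-loop oversight
    microsoft_required: bool = False
    long_term_production: bool = False

def choose_framework(w: Workflow) -> str:
    if not w.multi_step:
        return "direct LLM API calls"
    if w.branching_state:
        return "LangGraph"
    if w.role_handoffs:
        return "CrewAI"
    if w.conversational_hitl or w.microsoft_required:
        # The text advises against long-term commitments until consolidation is settled.
        return "AutoGen (wait for consolidation)" if w.long_term_production else "AutoGen"
    # Assumption: other multi-step workflows default to the most flexible option.
    return "LangGraph"

print(choose_framework(Workflow(multi_step=True, branching_state=False,
                                role_handoffs=True, conversational_hitl=False)))
# CrewAI
```

Rule order matters: a workflow with both branching state and role handoffs lands on LangGraph here, which is one reasonable reading of the guidance rather than the only one.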

Key Takeaways

- CrewAI’s 3x token overhead is structural, not a bug—its validation process justifies costs for mission-critical workflows but wastes resources on simple tasks

- LangChain’s 47 million downloads and massive ecosystem provide production stability and extensive integrations, offsetting its steeper learning curve for complex workflows

- CrewAI deploys 40% faster than LangGraph for role-based teamwork, making it ideal for rapid prototyping before optimizing for token efficiency

- AutoGen offers enterprise features and Microsoft integration but faces potential framework consolidation—avoid for long-term commitments until merge status clarifies

- Framework choice depends on workflow architecture: LangGraph for stateful complexity, CrewAI for role-based speed, AutoGen for conversational collaboration, direct APIs for simple tasks

Start with your workflow structure, not framework popularity. The wrong framework choice compounds over time—either in token costs that scale linearly with usage or in complexity debt that slows every feature addition. Match architecture to actual needs, not projected ones.