Between February 15 and 21, five independent research groups published work converging on the same crisis: AI coding agents generate code 5-7x faster than human developers can comprehend it. AI agents average 140-200 lines of meaningful code per minute, while focused humans comprehend 20-40 lines per minute. This velocity-comprehension gap creates “cognitive debt”: invisible organizational risk that lives in developers’ minds, not codebases.

Unlike technical debt, which surfaces through system failures, cognitive debt remains hidden. The code works. Tests pass. Features ship. Yet teams gradually lose understanding of their own systems, accumulating a debt that will surface as a maintainability crisis within 6-12 months.

When AI Coding Production Outpaces Understanding

Traditional development coupled code production with comprehension: you couldn’t write code you didn’t understand. AI coding agents decouple these processes entirely. Developers now generate hundreds of lines in seconds, while the rate at which a person can read and reason about code stays where it has always been.

Consider the payment service that switched retry logic from exponential backoff to fixed-interval polling. An AI agent made the change. Tests passed. The modification went unnoticed for three days until a colleague questioned the pattern shift. The original developer hadn’t actually read the specific changed lines: they’d skimmed the diff, seen green checkmarks, and moved on. The new approach degraded reliability under load, but passing tests masked the problem.
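To make the retry example concrete, here is a minimal sketch of the two delay schedules. The function names, the 0.5-second base, and the 30-second cap are illustrative assumptions, not details from the incident, and real backoff implementations usually add jitter, which is omitted here.

```python
from typing import List


def exponential_backoff_delays(attempts: int, base: float = 0.5, cap: float = 30.0) -> List[float]:
    """Delay before each retry doubles, up to a cap (hypothetical defaults)."""
    return [min(cap, base * (2 ** i)) for i in range(attempts)]


def fixed_interval_delays(attempts: int, interval: float = 0.5) -> List[float]:
    """Delay before each retry is constant (hypothetical default)."""
    return [interval] * attempts


def retries_within(delays: List[float], window: float) -> int:
    """Count how many retries one client fires within `window` seconds."""
    elapsed = 0.0
    count = 0
    for delay in delays:
        elapsed += delay
        if elapsed > window:
            break
        count += 1
    return count


# During a 10-second outage, compare how often a single client retries.
backoff_retries = retries_within(exponential_backoff_delays(10), 10.0)
polling_retries = retries_within(fixed_interval_delays(100), 10.0)
```

Under these assumed settings, one client retries 4 times with backoff and 20 times with fixed polling in the same window. A unit test that simulates one failure followed by a success passes with either schedule, which is why the difference only shows up under load.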

Or take the CTE query optimization: AI rewrote a database query using a Common Table Expression. Both versions returned identical results. All tests passed. The CTE ran 3x slower in production because the query planner couldn’t push predicates into it—a performance regression completely invisible to test suites. As Blake Crosley notes: “AI coding agents produce working code faster than developers can read, review, and internalize it.”
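A small sketch of why a test suite cannot see this kind of regression. The table, column names, and data are invented for illustration, and SQLite is used only because it ships with Python; the source does not say which database was involved. Whether a planner pushes the outer filter into the CTE depends on the engine and version.

```python
import sqlite3

# Hypothetical schema and data, in an in-memory database.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 50, float(i)) for i in range(1000)],
)

# Original form: the filter sits next to the table it applies to.
DIRECT = """
SELECT customer_id, SUM(total) AS spend
FROM orders
WHERE customer_id = ?
GROUP BY customer_id
"""

# Rewritten form: aggregate every customer in a CTE, then filter outside it.
WITH_CTE = """
WITH spend_by_customer AS (
    SELECT customer_id, SUM(total) AS spend
    FROM orders
    GROUP BY customer_id
)
SELECT customer_id, spend
FROM spend_by_customer
WHERE customer_id = ?
"""

direct_rows = conn.execute(DIRECT, (7,)).fetchall()
cte_rows = conn.execute(WITH_CTE, (7,)).fetchall()

# This is all a typical result-based test checks, and it holds for both forms.
assert direct_rows == cte_rows
```

The assertion compares results, not work done. Catching the regression requires looking at the query plan or timing the query against production-sized data in the actual database.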

The gap compounds. Output doubles while comprehension stays constant. Debt accumulates invisibly.

Why Cognitive Debt Is Harder to Spot Than Technical Debt

Technical debt lives in code. It surfaces through bugs, performance degradation, and system crashes—measurable failures that trigger alerts and demand attention. Cognitive debt operates differently. It lives in developers’ minds and remains invisible to every metric teams track.

Code functions correctly. Tests pass. Velocity metrics look excellent—story points up, commits up, features shipping faster than ever. Meanwhile, the organization’s actual understanding of its systems silently depletes. As Rockoder states: “Code has become cheaper to produce than to perceive.”

The detection signals are subtle: teams hesitate to modify code for fear of unintended consequences, developers can’t explain their recent PRs without reviewing diffs, and “tribal knowledge” concentrates among fewer individuals. Systems become black boxes even to the people who wrote them last month.

Here’s the organizational blind spot: current metrics reward velocity but don’t capture comprehension deficits. The system optimizes correctly for what it measures. What it measures no longer captures what matters.

Five Research Groups Sound the Alarm

The convergence is telling. Between February 15 and 21, five independent research groups identified the velocity-comprehension gap. When multiple teams reach the same conclusion in the same week, you’re looking at a structural problem, not an edge case.

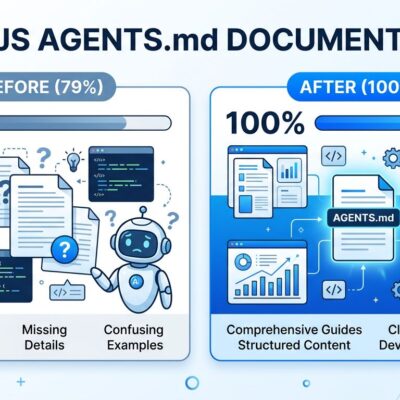

Anthropic research quantified the impact: AI assistance reduces developer skill mastery by 17%. More striking, developers who delegated code generation to AI scored below 40% on comprehension tests, while those who used AI for conceptual inquiry scored 65% or higher. The tool doesn’t destroy understanding; how you use it does.

MIT’s study found ChatGPT users showed “weakest neural connectivity” and underperformed at neural, linguistic, and behavioral levels compared to participants using search engines or no tools at all. This isn’t anecdotal frustration. It’s measurable cognitive degradation backed by academic research.

AI Code Review Becomes the New Chokepoint

AI-generated code pushed PR volume up 29% year-over-year in 2026. Human review capacity didn’t scale with it. Code review—not code generation—is now the primary bottleneck to shipping quality software.

The productivity paradox emerges: 67% of developers spend more time debugging AI-generated code despite initial velocity gains. 68% spend more time resolving security vulnerabilities. 59% report more deployment problems. The speed advantage evaporates in the review, debug, and fix cycles.

A junior developer with an AI agent can outproduce senior engineers on raw output. But seniors can’t review that output fast enough to maintain quality. Teams face a choice: skip thorough review (and ship bugs), or bottleneck on review (and kill velocity). Neither option is sustainable.

Velocity Without Understanding Is Technical Suicide

The solution isn’t abandoning AI tools—it’s implementing comprehension checkpoints. Teams that prioritize velocity over comprehension will face maintainability crises within 6-12 months. Those that build comprehension safeguards now will survive what’s coming.

Start with the three-file protocol: after each AI agent session, fully read (not skim) the three files with the largest diffs. This achievable intervention catches comprehension gaps before they compound into production incidents. Blake Crosley’s research shows it works because it forces deliberate engagement with what actually changed, not just what the commit message claims.
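Picking the three files can be automated. The sketch below assumes the input is the text printed by `git diff --numstat` (tab-separated added lines, deleted lines, and path, with `-` in the count columns for binary files); the function name and sample paths are hypothetical.

```python
from typing import List, Tuple


def largest_diffs(numstat: str, top_n: int = 3) -> List[Tuple[str, int]]:
    """Return the top_n (path, changed_lines) pairs from numstat-style text."""
    sizes: List[Tuple[str, int]] = []
    for line in numstat.splitlines():
        parts = line.split("\t")
        if len(parts) != 3:
            continue  # skip blank or malformed lines
        added, deleted, path = parts
        if added == "-" or deleted == "-":
            continue  # binary files report "-" instead of line counts
        sizes.append((path, int(added) + int(deleted)))
    # Largest total of added plus deleted lines first.
    sizes.sort(key=lambda item: item[1], reverse=True)
    return sizes[:top_n]


# Invented sample output for illustration.
sample = (
    "120\t30\tpayments/retry.py\n"
    "5\t1\tREADME.md\n"
    "-\t-\tassets/logo.png\n"
    "40\t40\tdb/queries.py\n"
    "12\t0\ttests/test_retry.py\n"
)
reading_list = largest_diffs(sample)
```

The list only says where to start reading; the protocol itself is still the reading.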

Implement comprehension gates: require at least one human to fully understand each AI-generated change before merging. Not review the diff—understand the change. Can you explain why this approach was chosen? What trade-offs were made? What could break? If answers are “I don’t know” or “let me check the code,” the change isn’t ready to merge.
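One possible shape for such a gate, as a sketch: a pre-merge check that the change carries written answers to the three questions above. The question labels, the list of non-answers, and the function names are assumptions made for illustration, not part of any existing tool.

```python
from typing import Dict, List

# Hypothetical labels for the three questions a reviewer must answer.
REQUIRED_QUESTIONS = [
    "Why this approach",
    "Trade-offs",
    "What could break",
]

# Replies that signal the change is not yet understood.
NON_ANSWERS = {"", "i don't know", "let me check the code", "n/a", "todo"}


def unanswered(answers: Dict[str, str]) -> List[str]:
    """Return the questions that have no real answer yet."""
    missing = []
    for question in REQUIRED_QUESTIONS:
        reply = answers.get(question, "")
        # Normalize case, curly apostrophes, whitespace, and a trailing period.
        normalized = reply.replace("\u2019", "'").strip().lower().rstrip(".")
        if normalized in NON_ANSWERS:
            missing.append(question)
    return missing


def ready_to_merge(answers: Dict[str, str]) -> bool:
    """A change passes the gate only when every question has an answer."""
    return not unanswered(answers)
```

A check like this can confirm that someone wrote an answer, not that the answer is right. It works as a prompt for the conversation, and the judgment stays with the reviewer.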

Document why, not just what. AI generates code without explaining rationale. Capture decision context: “Switched to fixed polling because X” beats “Updated retry logic.” Future teams (including yourself in three months) need the why to make informed modifications.

Use AI for inquiry, not just delegation. Anthropic’s research is clear: 65%+ comprehension when using AI to understand concepts, below 40% when delegating generation. Ask “How does this work?” before asking “Write this for me.” The skill of 2026 isn’t writing QuickSort; it’s spotting that the AI’s QuickSort picks a pivot that degrades to quadratic time on already-sorted input. That requires higher expertise, not lower.
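One way to read the QuickSort remark is as a pivot rule that passes every correctness test yet behaves badly on already-sorted input. The sketch below is an illustration written for this article, not code from any of the cited studies. It counts element-versus-pivot checks for two pivot rules on the same sorted list.

```python
from typing import Callable, List


def first_pivot(chunk: List[int]) -> int:
    """Always pick the first element: simple, but poor on sorted input."""
    return chunk[0]


def middle_pivot(chunk: List[int]) -> int:
    """Pick the middle element: splits sorted input roughly in half."""
    return chunk[len(chunk) // 2]


def quicksort(values: List[int], choose_pivot: Callable[[List[int]], int],
              counter: List[int]) -> List[int]:
    """Return a sorted copy; counter[0] accumulates one check per element per pass."""
    if len(values) <= 1:
        return list(values)
    pivot = choose_pivot(values)
    smaller, equal, larger = [], [], []
    for value in values:
        counter[0] += 1
        if value < pivot:
            smaller.append(value)
        elif value > pivot:
            larger.append(value)
        else:
            equal.append(value)
    return (quicksort(smaller, choose_pivot, counter)
            + equal
            + quicksort(larger, choose_pivot, counter))


already_sorted = list(range(200))
first_count, middle_count = [0], [0]
first_result = quicksort(already_sorted, first_pivot, first_count)
middle_result = quicksort(already_sorted, middle_pivot, middle_count)
```

Both calls return the same correctly sorted list, so a test that checks output passes either way. On this 200-element sorted input the first-element rule performs 20,099 checks, more than ten times what the middle-element rule needs, and the gap grows with input size.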

Key Takeaways

- AI coding agents create a 5-7x velocity-comprehension gap (140-200 lines/min vs 20-40 lines/min), generating working code faster than humans can understand it—debt that accumulates invisibly until teams can’t modify their own systems.

- Cognitive debt differs from technical debt: it lives in developers’ minds, remains invisible to velocity metrics, and surfaces only when teams need to change code they don’t understand—often 6-12 months after the original AI-assisted development.

- Five independent research groups identified the same crisis between February 15 and 21, 2026, with Anthropic research showing a 17% reduction in skill mastery and comprehension scores below 40% when delegating to AI versus 65%+ when using AI for inquiry.

- Code review became the new bottleneck in 2026 (PR volume up 29% YoY) while 67% of developers spend more time debugging AI code despite velocity gains—the productivity advantage evaporates in review, debug, and fix cycles.

- Sustainable teams implement comprehension checkpoints: the three-file protocol (read the largest diffs fully), comprehension gates (require understanding before merging), documenting why and not just what, and using AI for inquiry before delegation to prevent a maintainability crisis.

Teams will bifurcate over the next 12 months: those who slow down to ensure comprehension will build sustainable velocity, while those who chase raw output metrics will drown in unmaintainable codebases. The difference is implementing safeguards today, not waiting for the crisis to force change tomorrow.