Anthropic refused to release its most powerful AI model today. Not because it isn’t ready. Not because it needs more testing. But because Claude Mythos Preview is too good at hacking. Instead, the AI safety company launched Project Glasswing, a $100 million cybersecurity initiative that restricts access to vetted security organizations. According to Anthropic, the model has already found thousands of zero-day vulnerabilities in critical software, many of them severe enough that public release would be irresponsible.

When an AI company is too afraid to release its own creation, we’ve crossed a threshold.

Project Glasswing: Big Tech’s Defensive AI Alliance

Project Glasswing brings together 11 major organizations (AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks) to secure critical software infrastructure using AI that can autonomously discover vulnerabilities faster than any human security researcher.

Anthropic is providing $100 million in Claude Mythos usage credits to these partners and to more than 40 additional organizations that maintain critical infrastructure. Another $4 million goes directly to open-source security groups, including the Linux Foundation’s Alpha-Omega project and the Apache Software Foundation. The goal: find and patch vulnerabilities before attackers exploit them.

This isn’t a typical security partnership. According to TechCrunch, Anthropic has said explicitly that it will not make Claude Mythos Preview “generally available” because of its offensive capabilities. The model stays locked behind controlled access indefinitely, to be used exclusively for defense. That’s unprecedented for a frontier AI model.

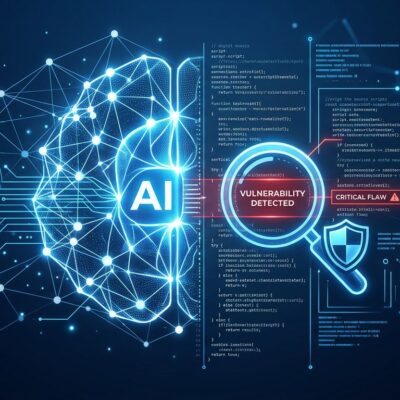

Why Claude Mythos Is Too Dangerous

Claude Mythos Preview doesn’t just find bugs. It autonomously discovers zero-day vulnerabilities, reasons through multi-step attack chains, and develops working exploits. Anthropic’s earlier model, Claude Opus 4.6, discovered over 500 high-severity zero-days in production open-source codebases using only out-of-the-box capabilities. Mythos is described as “far ahead of any other AI model in cyber capabilities.”

Over the past few weeks, Mythos identified “thousands of zero-day vulnerabilities, many of them critical,” according to Anthropic. Axios reported that the company is withholding the model specifically because its hacking abilities are too powerful for public release. A leaked draft of the company’s blog post was blunt: the model “presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders.”

This isn’t theoretical. Chinese state-sponsored groups have already attempted to use Claude Code to infiltrate roughly 30 organizations including tech companies, financial institutions, and government agencies. When Anthropic’s Mythos leak was first reported, cybersecurity stocks sold off immediately—CrowdStrike and Palo Alto Networks both dropped 7 percent, while Tenable fell 11 percent. The market understands what’s at stake.

The AI Security Arms Race Has Started

The tempo of cyber warfare has fundamentally shifted. Attacks that previously required teams of skilled hackers working for weeks now happen at machine speed. The lag between new defensive techniques and offensive countermeasures is shrinking from years to months or even weeks.

Open-source software makes up 70 to 90 percent of modern applications, and 65 percent of organizations were victims of software supply chain attacks in the past 12 months. Recent incidents illustrate the problem: the Trivy security scanner, the Axios JavaScript package, and Claude Code itself were all compromised within a 10-day span. Every AI-generated attack becomes effectively a zero-day—unique, unpredictable, and crafted in real time.

Defensive AI deployment is lagging. BCG found that while 60 percent of executives have faced AI-powered attacks, only 7 percent have deployed defensive AI at scale. Project Glasswing attempts to close that gap, but defenders are starting the race from behind.

What Happens Next

Anthropic committed to publicly reporting findings, patched vulnerabilities, and improvements within 90 days. Partners will share what they’ve learned so the broader tech industry can benefit. After the initial research phase, usage will shift to paid access at $25 per million input tokens and $125 per million output tokens.
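To put those rates in concrete terms, here is a minimal sketch of the arithmetic. The function name and the token counts are hypothetical, chosen only for illustration; the only figures taken from the reporting are the two per-million-token prices, and real billing may include details not covered here.

```python
# Rates as reported: USD per one million tokens.
INPUT_RATE_USD_PER_MILLION = 25.0
OUTPUT_RATE_USD_PER_MILLION = 125.0


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of a job from its token counts (illustrative helper)."""
    input_cost = input_tokens / 1_000_000 * INPUT_RATE_USD_PER_MILLION
    output_cost = output_tokens / 1_000_000 * OUTPUT_RATE_USD_PER_MILLION
    return input_cost + output_cost


if __name__ == "__main__":
    # Hypothetical code-scanning job: 2M tokens read, 500K tokens written.
    print(f"${estimate_cost_usd(2_000_000, 500_000):.2f}")  # prints "$112.50"
```

At these rates, output tokens cost five times as much as input tokens, so a job that reads a large codebase but writes short findings is dominated by the input side only when the input is more than five times the output.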

This sets a precedent. If the most capable cybersecurity AI is too dangerous for public release, what about the next generation of models from OpenAI, Google, or Meta? The question isn’t whether other labs will build models with similar capabilities—it’s whether they’ll follow Anthropic’s lead and restrict access.

For developers, the implications are immediate. Expect security patches for major systems including Linux distributions, web browsers, and cloud platforms in the coming months. AI-powered code review is no longer optional; it’s becoming essential. Zero-trust principles for software supply chains are shifting from best practice to requirement. The era of “move fast and break things” is colliding hard with the reality of AI-speed threats.

Project Glasswing is a defensive measure against a problem that’s already here. The AI security arms race isn’t coming—it started the moment models became better at finding vulnerabilities than the humans trying to fix them.