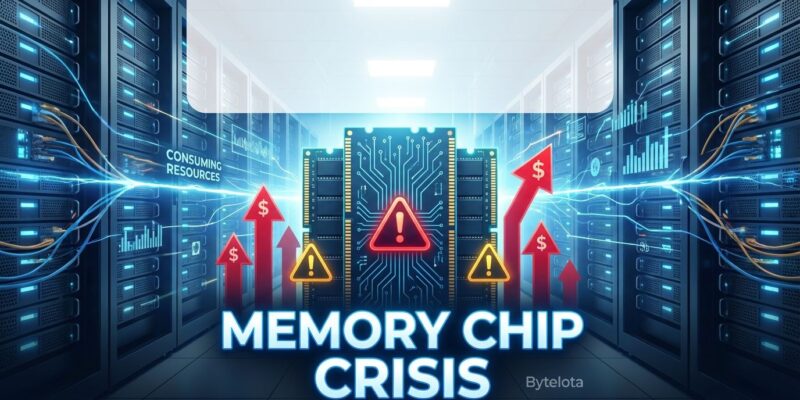

The global RAM shortage dubbed “RAMmageddon” reached crisis levels in Q1 2026, with memory prices surging 90-100% quarter-over-quarter—the largest increase on record. AI data centers now consume 70% of global DRAM production, up from just 20-30% in 2022. This isn’t a temporary blip. Industry leaders from Intel, Micron, and SK Hynix all warn the shortage will persist through 2028 at minimum, with some predicting supply constraints lasting until 2030.

If you’re waiting for prices to drop before upgrading your hardware, you’re making an expensive miscalculation.

The Math That Doesn’t Work

A single high-end AI server consumes as much advanced memory as hundreds of traditional laptops. This isn’t hyperbole; it’s simple arithmetic. A basic AI server packs 128GB+ of DDR5 plus 80-192GB of HBM (high-bandwidth memory) per GPU. High-end configurations run 512GB to 2TB of system RAM with multiple HBM-equipped GPUs. Meanwhile, typical laptops ship with 8-16GB, and even “AI-capable” consumer machines target 16-32GB.
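
The arithmetic can be sketched in a few lines of Python. The capacity figures are the ones quoted above; the GPU counts (one for the basic server, eight for the high-end one) and the reading of 2TB as 2,048GB are assumptions made for illustration, not figures from any vendor.

```python
# Back-of-envelope comparison of server memory vs. laptop memory.
# Capacities come from the ranges quoted in the text; GPU counts are assumed.

def server_memory_gb(system_ram_gb: float, hbm_per_gpu_gb: float, gpu_count: int) -> float:
    """Total memory in one server: system RAM plus HBM across all GPUs."""
    return system_ram_gb + hbm_per_gpu_gb * gpu_count


def laptop_equivalents(server_gb: float, laptop_gb: float) -> float:
    """How many laptops of a given capacity hold the same amount of memory."""
    return server_gb / laptop_gb


# Assumed configurations (hypothetical, for illustration only).
basic = server_memory_gb(system_ram_gb=128, hbm_per_gpu_gb=80, gpu_count=1)
high_end = server_memory_gb(system_ram_gb=2048, hbm_per_gpu_gb=192, gpu_count=8)

for label, total in (("basic", basic), ("high-end", high_end)):
    for laptop_gb in (8, 16):
        count = laptop_equivalents(total, laptop_gb)
        print(f"{label}: {total:.0f} GB is about {count:.0f} laptops at {laptop_gb} GB each")
```

Under these assumptions, the high-end configuration works out to roughly 224 to 448 laptops, while the basic single-GPU server is closer to 13 to 26.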

The semiconductor industry can’t manufacture its way out of this imbalance fast enough. Memory makers have shifted production capacity toward high-bandwidth memory required for AI accelerators, diverting resources away from consumer-grade DDR4/DDR5 and mobile LPDDR memory. The market reallocation is stark: data centers claimed 20-30% of DRAM in 2022; by 2026, that figure hit 70%.

Consequently, consumer electronics manufacturers are fighting over the remaining 30% of production. This explains why your next laptop costs significantly more than it should, and why budget hardware is vanishing from shelves.

Industry Warnings Paint a Bleak Picture

Every major industry player is issuing the same grim forecast. Intel CEO Lip-Bu Tan, after consulting with key memory manufacturers, stated bluntly in February: “There’s no relief as far as I know. There’s no relief until 2028.” Micron’s Executive Vice President of Operations called the shortage “unprecedented” in January, warning it will extend beyond 2026.

SK Hynix chairman Chey Tae-won delivered the most pessimistic timeline yet at Nvidia’s GTC conference in March: “The current shortage could continue until 2030, so we expect more than a 20% shortage of the wafers.”

These aren’t vague corporate hedges. Wafer supply is trailing demand by more than 20%, and building new fabrication facilities takes 18-24 months minimum. Micron broke ground on a $100 billion Syracuse facility in January 2026, but meaningful production won’t arrive for years. The shortage is structural, not cyclical.

Market Collapse Accelerates

Gartner projects worldwide PC shipments will decline 10.4% in 2026, with smartphone shipments falling 12.9-13%. Memory prices are expected to surge 130% by year-end compared to 2025 levels, increasing PC prices by 17% and smartphone prices by 13%. The sub-$500 PC segment, which is critical for education, emerging markets, and budget-conscious developers, is projected to disappear entirely by 2028.
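
One way to sanity-check these projections is to ask what share of a device’s price memory would need to represent for a 130% memory increase to produce the stated device increases. The sketch below is a rough estimate only: it assumes memory is the sole driver of the increase and that the cost is passed through in full, neither of which the forecast states.

```python
# Rough implied-share estimate. Assumes (hypothetically) that the device price
# increase is caused entirely by memory and is fully passed on to buyers.

def implied_memory_share(device_price_increase: float, memory_price_increase: float) -> float:
    """Fraction of device price that memory would represent under the assumptions above."""
    return device_price_increase / memory_price_increase


MEMORY_INCREASE = 1.30  # +130% by year-end, as projected in the text

pc_share = implied_memory_share(0.17, MEMORY_INCREASE)
phone_share = implied_memory_share(0.13, MEMORY_INCREASE)

print(f"Implied memory share of PC price: {pc_share:.1%}")
print(f"Implied memory share of smartphone price: {phone_share:.1%}")
```

Under those simplifying assumptions, memory would account for about 13% of a PC’s price and about 10% of a smartphone’s. Real pricing also depends on margins, other components, and how much of the cost vendors absorb.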

The market has become so volatile that memory procurement now operates on hourly pricing rather than daily or weekly quotes. Over 190,000 small and medium-sized electronics companies are being squeezed out of the market entirely. Furthermore, device lifetimes are extending dramatically: business buyers are holding onto hardware 15% longer, while consumers are stretching replacement cycles by 20%.

Nature reports the shortage is hitting scientific research particularly hard, with labs unable to access memory for computational models and AI research. Researchers are being forced to downsize models, optimize algorithms aggressively, and compete for expensive cloud resources that are also seeing price increases.

Desperate Measures

Elon Musk announced Tesla, SpaceX, and xAI’s “Terafab” project on March 22—a $20-25 billion chip fabrication facility targeting 100,000 wafer starts per month with 2-nanometer process technology. Musk’s rationale reveals the shortage’s severity: the global chip industry currently produces “about 2% of what Tesla and SpaceX will need” for edge inference compute.

When the world’s richest person decides that building a fab costing up to $25 billion is more economical than relying on existing supply chains, the crisis has reached an inflection point. Tesla plans small-batch production of its AI5 chip in 2026-2027, with volume production by 2027. This signals a broader trend: companies with sufficient capital will vertically integrate to guarantee supply, leaving smaller players further disadvantaged.

Key Takeaways

- Don’t wait for prices to drop—the shortage persists through 2028 minimum, with pessimistic forecasts extending to 2030. Buying hardware now may be cheaper than waiting.

- Budget for 100%+ memory cost increases in hardware planning. Q1 2026 saw 90-100% QoQ price surges, with Q2 projected to add another 70%.

- Extend device lifetimes ruthlessly—replacement costs are prohibitive. Businesses and consumers are adding 15-20% to typical refresh cycles.

- Re-evaluate cloud vs. local economics—but remember cloud providers will pass memory costs to customers via pricing increases.

- Optimize for memory efficiency—algorithmic improvements and memory-conscious architectures matter more than ever when hardware upgrades are unaffordable.
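
The budgeting takeaway above is easier to plan around once the quarterly figures are compounded, because a 70% increase in Q2 applies to the already-raised Q1 price. The following is a minimal sketch; the $1,000 baseline is a hypothetical line item, not a figure from any forecast.

```python
# Compound successive quarter-over-quarter increases into one multiplier.

def compound(*quarterly_increases: float) -> float:
    """Return the cumulative price multiplier after applying each increase in turn."""
    multiplier = 1.0
    for increase in quarterly_increases:
        multiplier *= 1.0 + increase
    return multiplier


# Q1 2026 at 90-100% QoQ, followed by the projected 70% in Q2.
low = compound(0.90, 0.70)
high = compound(1.00, 0.70)

baseline_budget = 1_000  # hypothetical memory spend before the surge, in dollars

print(f"Cumulative multiplier: {low:.2f}x to {high:.2f}x")
print(f"A ${baseline_budget:,} memory budget becomes "
      f"${baseline_budget * low:,.0f} to ${baseline_budget * high:,.0f}")
```

If the Q2 projection holds, memory would cost roughly 3.2 to 3.4 times its pre-surge price by mid-year, so a budget that only doubles the memory line would still fall short.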

The RAM shortage represents a structural realignment of semiconductor production priorities, not a temporary supply disruption. AI’s appetite for memory has fundamentally restructured the market, and developers need to plan accordingly.