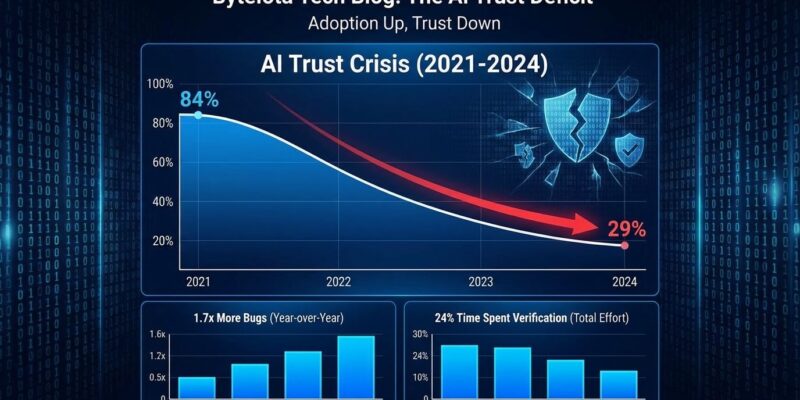

The 2026 developer landscape reveals a profound paradox: 84% of developers now use AI coding tools according to Stack Overflow’s latest survey, yet only 29% trust the accuracy of AI-generated output. That’s an 11-percentage-point drop from 2024’s 40% trust level. This defies typical technology adoption curves where familiarity breeds confidence. The more developers use AI tools, the less they trust them.

The crisis isn’t just psychological. Developers spend 24% of their work week verifying AI output, AI-authored pull requests carry about 1.7x as many issues as human-written ones, and tasks that feel 20% faster actually take 19% longer end-to-end. The productivity promise hasn’t materialized; it’s been swapped for a verification bottleneck.

The Verification Gap: 96% Don’t Trust, But Only 48% Verify

Sonar’s 2026 survey of over 1,100 developers exposes a dangerous disconnect: 96% don’t fully trust that AI-generated code is functionally correct, yet only 48% actually verify it before committing to their codebase. Roughly half of developers are shipping unverified AI code despite knowing it may be unreliable.

The reasons for skipping verification are telling: 38% report that reviewing AI code requires more effort than reviewing code written by human colleagues. Time pressure, toil fatigue, and false confidence conspire to create what researchers call “verification debt”: unverified AI code accumulating in production systems, waiting to surface as bugs, security vulnerabilities, or technical debt.

This isn’t theoretical risk. It’s a ticking time bomb in codebases across the industry.

AI Code Contains 1.7x More Bugs—Here’s the Data

LinearB and CodeRabbit’s analysis of 8.1 million pull requests from 4,800 engineering teams across 42 countries provides hard evidence for developer skepticism. AI-generated code averages 10.83 issues per pull request compared to 6.45 for human code—a 1.7x increase that translates directly into technical debt.
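The 1.7x figure follows directly from the two per-PR averages quoted above. As a quick sanity check, here is a minimal sketch of the arithmetic; the constant and function names are illustrative, and only the two averages come from the analysis.

```python
# Average issues per pull request, as quoted in the analysis above.
AI_ISSUES_PER_PR = 10.83
HUMAN_ISSUES_PER_PR = 6.45


def issue_ratio(ai: float, human: float) -> float:
    """Return how many times as many issues AI-authored PRs carry."""
    return ai / human


ratio = issue_ratio(AI_ISSUES_PER_PR, HUMAN_ISSUES_PER_PR)

# Extra issues a team would absorb over 100 AI-authored PRs,
# assuming the averages hold (an assumption, not a reported figure).
extra_per_100_prs = (AI_ISSUES_PER_PR - HUMAN_ISSUES_PER_PR) * 100

print(f"ratio: {ratio:.2f}x")                                # ratio: 1.68x
print(f"extra issues per 100 PRs: {extra_per_100_prs:.0f}")  # extra issues per 100 PRs: 438
```

The ratio comes out at 1.68, which rounds to the 1.7x headline number.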

The quality gap spans every category. Logic and correctness errors appear 1.75 times more often. Security vulnerabilities rise 1.57x. Maintainability problems jump 1.64x. Performance issues increase 1.42x. Furthermore, AI-authored pull requests contain 1.4x more critical issues and 1.7x more major issues on average than human-written PRs.

Developers experience this firsthand. In fact, 66% cite “looks correct but fails during testing” as the biggest problem with AI tools. Plausible-looking code that doesn’t function. Fabricated API references. Subtle security flaws that pass initial review. Every hallucination reinforces distrust.

The Productivity Paradox: Feeling Faster, Actually Slower

Developers overwhelmingly believe AI makes them 20% faster. However, LinearB’s data tells a different story: end-to-end task completion actually takes 19% longer with AI assistance.

The culprit is verification overhead. Developers spend 24% of their work week—nearly a quarter—checking, fixing, and validating AI output. Additionally, AI-generated pull requests wait 4.6 times longer for code review than human-written ones. The promised efficiency gains evaporate during the verification stage.

This is “toil swap,” not toil reduction. The work hasn’t disappeared. It’s shifted from writing code to verifying it. When tools feel fast but slow you down in practice, trust erodes. The perception gap between subjective speed (generation) and measured reality (end-to-end delivery) explains why adoption continues while trust collapses.
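To make the perception gap concrete, the sketch below applies the percentages from this section to a hypothetical task. The 10-hour baseline and the 40-hour work week are assumptions chosen for illustration, and “20% faster” is read here as “takes 20% less time,” which is one possible interpretation.

```python
BASELINE_HOURS = 10.0    # hypothetical task duration without AI (assumption)
WORK_WEEK_HOURS = 40.0   # assumed length of a work week

PERCEIVED_SPEEDUP = 0.20   # developers feel 20% faster
MEASURED_SLOWDOWN = 0.19   # tasks take 19% longer end-to-end
VERIFICATION_SHARE = 0.24  # share of the week spent verifying AI output

# How long the task feels like it took versus how long it actually took.
perceived_hours = BASELINE_HOURS * (1 - PERCEIVED_SPEEDUP)
measured_hours = BASELINE_HOURS * (1 + MEASURED_SLOWDOWN)
gap_hours = measured_hours - perceived_hours

# Weekly time spent checking, fixing, and validating AI output.
verification_hours = WORK_WEEK_HOURS * VERIFICATION_SHARE

print(f"feels like: {perceived_hours:.1f} h")        # feels like: 8.0 h
print(f"actually:   {measured_hours:.1f} h")         # actually:   11.9 h
print(f"gap:        {gap_hours:.1f} h")              # gap:        3.9 h
print(f"verifying:  {verification_hours:.1f} h/wk")  # verifying:  9.6 h/wk
```

Under these assumptions, a task that feels like 8 hours actually takes close to 12, and almost 10 hours a week go to verification.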

Why Developers Don’t Trust AI: Deterministic vs Probabilistic Thinking

The root cause is a fundamental mismatch between how developers think and how AI works. Developers are trained for determinism: same input yields same output, reproducible results, predictable behavior. Debugging requires consistency. Testing depends on it. Entire careers are built on this mental model.

In contrast, AI operates probabilistically. The same input can produce different outputs. Results are stochastic, not deterministic. Models provide likelihoods, not guarantees. Every inconsistency, every hallucination, every unexpected suggestion violates the core assumptions developers rely on.
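The contrast can be shown with a toy example. This is a simplified sketch, not how any particular coding assistant works: the candidate completions and their scores are invented, and real models sample token by token from a far larger distribution. It compares a deterministic choice (always take the top-scoring candidate) with temperature-based sampling from the same scores.

```python
import math
import random

# Hypothetical scores a model might assign to candidate completions
# for one prompt. The candidates and numbers are invented for illustration.
CANDIDATE_SCORES = {
    "return a + b": 2.0,
    "return sum([a, b])": 1.5,
    "return a - b": 0.5,  # plausible-looking but wrong
}


def softmax(scores, temperature=1.0):
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def greedy(scores):
    """Deterministic: always pick the highest-scoring candidate."""
    return max(scores, key=scores.get)


def sample(scores, rng, temperature=1.0):
    """Stochastic: draw one candidate in proportion to its probability."""
    options = list(scores)
    probs = softmax([scores[o] for o in options], temperature)
    return rng.choices(options, weights=probs, k=1)[0]


rng = random.Random()  # unseeded, so the counts differ from run to run
draws = [sample(CANDIDATE_SCORES, rng) for _ in range(1000)]
counts = {option: draws.count(option) for option in CANDIDATE_SCORES}

print("greedy: ", greedy(CANDIDATE_SCORES))  # always "return a + b"
print("sampled:", counts)                    # varies between runs
```

In this toy setup the wrong candidate still gets drawn some of the time, which mirrors the “looks correct but fails during testing” experience. Passing a seeded generator such as `random.Random(0)` makes the draws reproducible, but hosted AI tools do not necessarily expose that kind of control.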

Stack Overflow CEO Prashanth Chandrasekar captures the competence gap: “Tools in every technology category require user competence and accountability to produce good results. You better know what’s underneath the hood before you push it into production.” Developers accustomed to deterministic tools now face probabilistic assistants—and every failure mode reinforces skepticism.

This isn’t technophobia. It’s rational engineering standards applied to tools that don’t operate like traditional software.

2026: From “AI Speed” to “AI Quality”

The industry is responding to the AI trust crisis with a clear pivot. CodeRabbit’s analysis sums it up: “2025 was the year of AI speed. 2026 will be the year of AI quality.”

Gartner’s prediction puts stakes on the table: over 40% of agentic AI projects will be canceled by the end of 2027, with reliability cited as the primary reason. McKinsey’s State of AI Trust report reveals that only one-third of organizations report mature governance for agentic AI, with an average Responsible AI maturity score of 2.3 out of 5. Technical capabilities are advancing faster than organizational oversight.

Enterprise data quality adds another dimension to the crisis: 28% of US organizations admit they have “zero confidence” in the quality of the data feeding their AI systems. The problem compounds: unreliable AI fed unreliable data, deployed without mature governance.

Vendors are shifting messaging accordingly. “10x developer” promises are giving way to “accelerate with AI, verify with quality tools.” The hype cycle is entering what Gartner calls the “trough of disillusionment.” Expect verification tools, governance frameworks, and trustworthy AI initiatives to dominate 2026 and 2027.

Key Takeaways

- The AI trust gap is widening: 84% of developers use AI tools, but only 29% trust them—an 11-point drop from 2024 despite increased adoption.

- A dangerous verification gap exists: 96% don’t fully trust that AI code is functionally correct, yet only 48% verify before committing, so verification debt accumulates in production systems.

- AI code quality is measurably worse: the analysis of 8.1 million pull requests shows AI-generated code averaging 1.7x as many issues per pull request, with logic errors up 1.75x, security vulnerabilities up 1.57x, and maintainability issues up 1.64x.

- Productivity gains are illusory: Developers feel 20% faster but tasks actually take 19% longer end-to-end, and 24% of the work week goes to verifying AI output. This is toil swap, not toil reduction.

- The root cause is deterministic vs probabilistic thinking: Developers trained for reproducible results face tools that operate stochastically, and every inconsistency reinforces rational skepticism.

- Industry pivot underway: 2026 shifts from “AI speed” to “AI quality” as Gartner predicts over 40% of agentic AI projects will be canceled by the end of 2027, with reliability cited as the primary reason.