The Multimodal Infrastructure Gap

Multimodal AI applications—voice assistants, vision agents, systems combining text with images or audio—are becoming table stakes. However, the infrastructure to serve these models efficiently has lagged behind the models themselves.

OpenAI charges a premium for GPT-4o’s multimodal capabilities. Google’s Gemini 2.5 Pro handles text, images, video, and audio natively, but it’s proprietary with all the vendor lock-in that implies. For developers who need control over their stack or face compliance requirements that forbid external APIs, the only option has been stitching together manual solutions—separate serving systems for text models, diffusion models, and audio processors with handwritten glue code to route data between stages.

That’s a non-trivial engineering problem. Existing serving frameworks like vLLM excel at text-only LLM inference but don’t handle “any-to-any pipelines with multiple interconnected model components,” as the vLLM-Omni research paper puts it. The result is developers either paying API bills that scale poorly or building custom infrastructure that can’t match the throughput and efficiency of purpose-built systems.

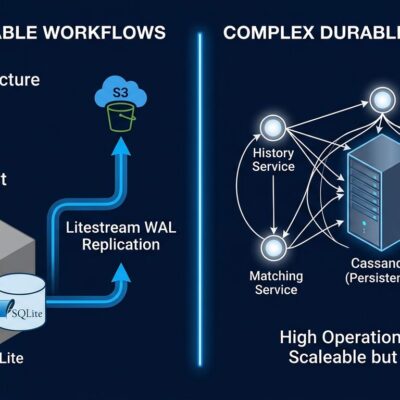

Disaggregated Architecture That Actually Works

vLLM-Omni solves this with a disaggregated backend that treats each modality—text, image, video, audio—as a separate optimization problem. Instead of forcing a monolithic architecture to handle all inputs and outputs, it decomposes multimodal pipelines into interconnected stages represented as a directed graph. Each stage runs independently with dedicated LLM or diffusion engines, supporting per-stage request batching and flexible GPU allocation.
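To make the stage-graph idea concrete, here is a minimal sketch of a pipeline modeled as a directed graph with per-stage batching and GPU assignment. It is not vLLM-Omni's API: the `Stage` class, the helper functions, the stage names, the batch sizes, and the GPU ids are all hypothetical and chosen only for illustration.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Stage:
    """One pipeline stage (hypothetical type, not a vLLM-Omni class)."""
    name: str
    engine: str                 # "llm" or "diffusion"
    max_batch_size: int         # per-stage batching limit
    gpus: list = field(default_factory=list)  # GPU ids assigned to this stage


def topological_order(stages, edges):
    """Order stage names so each stage follows its upstream stages (Kahn's algorithm)."""
    indegree = {name: 0 for name in stages}
    downstream = {name: [] for name in stages}
    for src, dst in edges:
        downstream[src].append(dst)
        indegree[dst] += 1
    ready = deque(name for name, deg in indegree.items() if deg == 0)
    order = []
    while ready:
        name = ready.popleft()
        order.append(name)
        for nxt in downstream[name]:
            indegree[nxt] -= 1
            if indegree[nxt] == 0:
                ready.append(nxt)
    if len(order) != len(stages):
        raise ValueError("pipeline graph contains a cycle")
    return order


def make_batches(requests, max_batch_size):
    """Split pending requests into batches no larger than the stage's limit."""
    return [requests[i:i + max_batch_size]
            for i in range(0, len(requests), max_batch_size)]


# Illustrative speech pipeline: text reasoning -> speech tokens -> waveform.
stages = {
    "thinker": Stage("thinker", engine="llm", max_batch_size=8, gpus=[0, 1]),
    "talker": Stage("talker", engine="llm", max_batch_size=4, gpus=[2]),
    "vocoder": Stage("vocoder", engine="diffusion", max_batch_size=2, gpus=[3]),
}
edges = [("thinker", "talker"), ("talker", "vocoder")]

order = topological_order(stages, edges)
requests = [f"req-{i}" for i in range(5)]
batches = {name: make_batches(requests, stages[name].max_batch_size)
           for name in order}

for name in order:
    stage = stages[name]
    print(f"{name}: engine={stage.engine} gpus={stage.gpus} "
          f"batches={len(batches[name])}")
```

The point of the sketch is that each stage gets its own batch size and its own GPUs, so a slow stage can be scaled or tuned without touching the others.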

This isn’t theoretical. According to benchmarks in the research paper, the architecture reduces job completion time by up to 91.4% compared to baseline approaches. The unified inter-stage connector also adds negligible overhead relative to overall inference latency, which matters when you’re serving hundreds of concurrent requests.
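A percentage reduction in completion time can be easier to reason about as a speedup factor. The short calculation below converts the 91.4% figure; the 600-second baseline job is a hypothetical number used only to show the arithmetic, not a measurement from the paper.

```python
def speedup_from_reduction(reduction_pct):
    """Convert a percentage reduction in completion time to a speedup factor."""
    if not 0 <= reduction_pct < 100:
        raise ValueError("reduction must be in [0, 100)")
    return 1.0 / (1.0 - reduction_pct / 100.0)


speedup = speedup_from_reduction(91.4)

# Hypothetical baseline: a job that previously took 600 seconds.
baseline_seconds = 600.0
new_seconds = baseline_seconds / speedup

print(f"speedup: {speedup:.1f}x")            # about 11.6x
print(f"new duration: {new_seconds:.1f} s")  # about 51.6 s
```

Note that "up to" marks this as the best case reported, so typical workloads may see a smaller factor.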

vLLM-Omni inherits the performance optimizations that made vLLM dominant for text inference: PagedAttention for 4x memory efficiency, continuous batching for high throughput, and optimized CUDA kernels. It also extends these techniques to diffusion transformers and other non-autoregressive architectures, which previous serving systems struggled with.

The practical benefit is straightforward: the techniques behind vLLM’s reported throughput advantage of up to 24x over naive implementations now apply to multimodal workloads instead of just text.

What You Can Actually Build with vLLM-Omni

vLLM-Omni supports the cutting-edge omni-modality models that justify all this infrastructure work. Qwen3-Omni, the flagship example, processes 119 text languages, 19 speech input languages, and 10 speech output languages. It achieves state-of-the-art results on 22 of 36 audio and video benchmarks, with ASR and audio understanding performance comparable to Gemini 2.5 Pro. Additionally, the model can process audio recordings up to 40 minutes long, making it viable for real-world transcription and spoken-language understanding tasks.

Beyond Qwen3-Omni, the framework supports Qwen3-TTS for text-to-speech, Bagel and MiMo-Audio for audio processing, GLM-Image for image generation, and various diffusion models for image and video synthesis. This isn’t a toy research project—it’s production infrastructure for the models developers actually want to deploy.

Use cases are exactly what you’d expect: self-hosted ChatGPT-like systems with voice and vision capabilities, enterprise multimodal chatbots that keep data in-house, and production AI agents that combine text generation with image analysis or audio synthesis. For companies serving high-volume multimodal inference, the cost savings are substantial. Stripe’s vLLM migration achieved a 73% inference cost reduction, with 50 million daily API calls running on one-third the GPU fleet.

Production Readiness Matters

Version 0.16.0, released in February 2026, added the infrastructure features that separate research code from deployable systems. There’s a Kubernetes Helm Chart for orchestration, official Docker images versioned as vllm/vllm-omni:v0.16.0, a stage-based deployment CLI for complex multimodal pipelines, and RDMA connector support for high-performance interconnect scenarios.

Cross-platform compatibility covers NVIDIA GPUs via CUDA, AMD hardware through ROCm, and Intel NPU and XPU backends. The entrypoint was refactored for cleaner startup flow, and the Model Pipeline Configuration System makes it straightforward to define custom multimodal workflows.

This production focus reflects vLLM’s battle-tested foundations. The parent project has 40,000 GitHub stars and contributions from 2,000 developers. Inferact, the commercial entity built around vLLM, raised $150 million at an $800 million valuation in January 2026, signaling enterprise confidence in the technology.

When vLLM-Omni Makes Sense

Use vLLM-Omni when multimodal API costs are prohibitive, when compliance requires self-hosting, or when you need the flexibility to fine-tune and customize models that proprietary providers won’t offer. If you’re serving high-volume multimodal inference—thousands of requests per day with voice, vision, or both—the cost equation tilts heavily toward self-hosting. Stripe’s 73% cost reduction is a real-world data point, not a marketing claim.
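One way to frame that cost equation is a simple break-even calculation. The sketch below is a back-of-the-envelope model, not a pricing tool: every number in it (per-request API cost, GPU count, hourly GPU rate, monthly operations overhead) is a hypothetical placeholder to replace with your own figures, and it assumes a fixed GPU fleet rather than one that scales with load.

```python
HOURS_PER_MONTH = 24 * 30
DAYS_PER_MONTH = 30


def monthly_api_cost(requests_per_day, cost_per_request):
    """Monthly bill for a managed API billed per request."""
    return requests_per_day * cost_per_request * DAYS_PER_MONTH


def monthly_self_host_cost(gpu_count, gpu_hourly_rate, ops_overhead):
    """Monthly cost of a fixed GPU fleet plus staffing and tooling overhead."""
    return gpu_count * gpu_hourly_rate * HOURS_PER_MONTH + ops_overhead


def break_even_requests_per_day(cost_per_request, gpu_count,
                                gpu_hourly_rate, ops_overhead):
    """Daily request volume at which both options cost the same."""
    fixed = monthly_self_host_cost(gpu_count, gpu_hourly_rate, ops_overhead)
    return fixed / (cost_per_request * DAYS_PER_MONTH)


# Hypothetical inputs, for illustration only.
COST_PER_REQUEST = 0.05   # dollars per multimodal API call
GPU_COUNT = 2
GPU_HOURLY_RATE = 2.00    # dollars per GPU-hour
OPS_OVERHEAD = 3000.0     # dollars per month for monitoring and on-call

self_host = monthly_self_host_cost(GPU_COUNT, GPU_HOURLY_RATE, OPS_OVERHEAD)
threshold = break_even_requests_per_day(
    COST_PER_REQUEST, GPU_COUNT, GPU_HOURLY_RATE, OPS_OVERHEAD)

print(f"self-hosting: ${self_host:,.0f} per month")
print(f"break-even: about {threshold:,.0f} requests per day")
```

Below the break-even volume the managed API is cheaper under these assumptions; above it, the fixed cost of the fleet is spread over enough requests that self-hosting wins.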

Conversely, skip vLLM-Omni for prototyping or low-volume applications where OpenAI or Anthropic APIs are faster to integrate and the monthly bill stays reasonable. Self-hosting requires infrastructure expertise: Kubernetes knowledge, GPU management, and monitoring and observability tooling. If you don’t have a team that can handle that operational burden, paying for managed APIs is the pragmatic choice.

For developers between those extremes, the decision comes down to control versus convenience. vLLM-Omni gives you full control over your inference stack with no vendor lock-in, but you trade simplicity for flexibility. Proprietary APIs abstract away the complexity but lock you into pricing and terms you can’t negotiate.

What This Enables

vLLM-Omni isn’t just about cost savings. It’s infrastructure that makes sophisticated multimodal AI accessible to teams that can’t afford—or don’t want—to depend on proprietary providers. Open-source frameworks with permissive licenses reduce barriers to deployment and put competitive pressure on API vendors to justify their pricing.

The broader trend is clear: open-source AI infrastructure is maturing fast enough to challenge proprietary solutions on performance, not just cost. vLLM-Omni extends that trend from text-only LLM serving to the full spectrum of multimodal workloads. For developers building production systems that combine voice, vision, and text, that’s a meaningful shift.

Get started with the official documentation or explore the supported models in the GitHub repository. The framework is production-ready now, not a future promise.