On March 11 at GTC 2026, NVIDIA announced Nemotron 3 Super, the first AI model designed from the ground up for multi-agent systems. The 120-billion-parameter model activates only 12 billion parameters per forward pass, a 10x cut in active parameters that makes running autonomous agents 24/7 economically viable. It already outperforms GPT-5.4 and Claude 4.6 Opus on the PinchBench agentic benchmark despite being fully open-source, signaling a shift toward specialized models optimized for production agent deployments rather than general-purpose chat.

The 10x Efficiency Breakthrough

Nemotron 3 Super uses a Latent Mixture-of-Experts architecture that activates only a subset of its parameters for each inference request. While the model contains 120 billion total parameters, only 12 billion are active during any single forward pass, activating four expert specialists for the computational cost of one. This matters because multi-agent systems run continuously rather than on demand like chatbots. Fewer active parameters translate directly into lower inference cost and latency.
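To make the sparse-activation idea concrete, here is a minimal sketch of generic top-k expert routing in Python. It is not NVIDIA's Latent Mixture-of-Experts implementation, and the expert count and top-k value are hypothetical numbers chosen only for illustration; the article does not state them. Only the 120-billion and 12-billion figures come from the text.

```python
import math
import random

# Figures quoted in the article.
TOTAL_PARAMS_B = 120   # total parameters, in billions
ACTIVE_PARAMS_B = 12   # parameters active per forward pass, in billions

# Hypothetical routing configuration (assumption, not from the article).
NUM_EXPERTS = 8
TOP_K = 2


def softmax(scores):
    """Convert raw router scores into probabilities."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route(router_scores, top_k=TOP_K):
    """Return {expert_index: weight} for the top_k highest-scoring experts.

    Experts outside the top_k are never evaluated for this token,
    which is where the compute saving comes from.
    """
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    return {i: probs[i] / norm for i in chosen}


def active_ratio(total_b=TOTAL_PARAMS_B, active_b=ACTIVE_PARAMS_B):
    """How many times fewer parameters are touched per forward pass."""
    return total_b / active_b


# Route one token using random router scores.
rng = random.Random(0)
scores = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
weights = route(scores)
print("experts used for this token:", sorted(weights))
print("experts skipped:", NUM_EXPERTS - len(weights))
print("total / active parameters:", active_ratio())
```

The ratio of total to active parameters is where the article's 10x figure comes from. All 120 billion parameters still have to be stored; the saving is in compute per token.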

NVIDIA claims 5x higher throughput than the previous Nemotron model, 2.2x higher throughput than gpt-oss-120B, and 7.5x higher throughput than Qwen3.5-122B in high-volume scenarios. The model was also trained natively in NVFP4 precision, a 4-bit floating-point format optimized for Blackwell GPUs, which reduces memory footprint by 3.5x compared to FP16 and delivers 4x faster inference on B200 chips than FP8 on H100. For developers deploying complex agent workflows, this can mean the difference between prohibitive costs and production viability.
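The 3.5x memory figure can be sanity-checked with back-of-the-envelope arithmetic. The sketch below assumes NVFP4 stores 4-bit values in blocks of 16 that share one 8-bit scale factor, which matches NVIDIA's public description of the format as far as I know but is not stated in this article. It counts weights only and ignores activations, KV cache, and any layers kept in higher precision.

```python
PARAMS = 120e9  # total parameters quoted in the article

FP16_BITS = 16

# Assumed NVFP4 layout (not stated in the article):
# 4-bit values, 16 values per block, one 8-bit scale per block.
NVFP4_VALUE_BITS = 4
NVFP4_BLOCK_SIZE = 16
NVFP4_SCALE_BITS = 8


def bits_per_weight_nvfp4():
    """Effective bits per weight once the shared block scale is amortized."""
    return NVFP4_VALUE_BITS + NVFP4_SCALE_BITS / NVFP4_BLOCK_SIZE


def weight_gigabytes(params, bits_per_weight):
    """Storage for the weights alone, in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


fp16_gb = weight_gigabytes(PARAMS, FP16_BITS)
nvfp4_gb = weight_gigabytes(PARAMS, bits_per_weight_nvfp4())

print(f"FP16 weights:  {fp16_gb:.1f} GB")
print(f"NVFP4 weights: {nvfp4_gb:.1f} GB")
print(f"reduction:     {fp16_gb / nvfp4_gb:.2f}x")
```

Under these assumptions the effective cost is 4.5 bits per weight, so the reduction is 16 / 4.5, a little over 3.5x and in line with the quoted figure.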

Built for Agents, Not Adapted From Chat

Unlike GPT-5.4 or Claude 4.6 Opus, which are general-purpose models adapted for agentic tasks, Nemotron 3 Super was engineered specifically for multi-agent systems. Its hybrid Mamba-Transformer architecture reflects this focus: Mamba-2 layers handle the bulk of sequence processing in linear time, making the model’s 1-million-token context window practical rather than theoretical. Transformer layers activate only for attention-critical steps. The result is a model that can load an entire codebase into context and maintain full workflow state across multi-step agent operations without goal drift.
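The reason linear-time layers matter at a 1-million-token context can be shown with a simple operation count. The sketch below is an illustration of the scaling argument only: the `scan` function is a toy linear recurrence with a fixed-size state, not Mamba-2, and the function names are hypothetical.

```python
def attention_pair_count(n_tokens):
    """Token pairs scored by causal self-attention.

    Each token attends to itself and every earlier token,
    so the count grows quadratically with sequence length.
    """
    return n_tokens * (n_tokens + 1) // 2


def recurrent_step_count(n_tokens):
    """State updates in a recurrent scan: one per token (linear growth)."""
    return n_tokens


def scan(inputs, decay=0.9):
    """Toy linear recurrence h_t = decay * h_{t-1} + x_t.

    The state is a single number regardless of sequence length,
    which is the property that keeps long contexts cheap.
    """
    state = 0.0
    outputs = []
    for x in inputs:
        state = decay * state + x
        outputs.append(state)
    return outputs


for n in (1_000, 100_000, 1_000_000):
    pairs = attention_pair_count(n)
    steps = recurrent_step_count(n)
    print(f"{n:>9} tokens: {pairs:>15} attention pairs, {steps:>9} recurrent steps")
```

At one million tokens the attention count is on the order of hundreds of billions of pairs per layer, while the recurrence performs one million updates. That gap is the motivation for routing most of the sequence processing through the linear-time layers.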

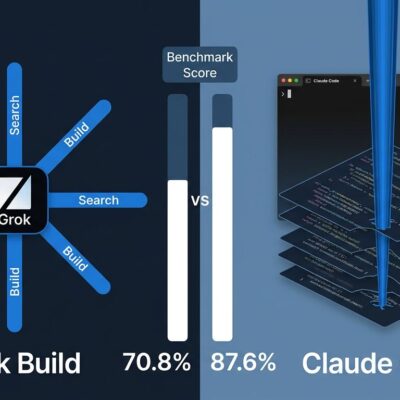

Performance validates this approach. On PinchBench, a benchmark measuring LLM effectiveness as the “brain” of an OpenClaw agent, Nemotron 3 Super scores 84.7%, ahead of Claude 4.6 Opus (80.8%) and GPT-5.4 (80.5%). A lead of roughly four percentage points is more than a marginal improvement. It’s evidence that purpose-built agentic models can outperform general-purpose alternatives when evaluated on real agent tasks: tool use, long-context reasoning, state management, and multi-step planning.
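One way to read these scores is to look at failure rates rather than raw accuracy. The short sketch below uses only the three PinchBench numbers quoted above; the helper names are hypothetical, and it assumes the score is the percentage of tasks completed, which the article implies but does not spell out.

```python
# PinchBench scores quoted in the article, in percent.
SCORES = {
    "Nemotron 3 Super": 84.7,
    "Claude 4.6 Opus": 80.8,
    "GPT-5.4": 80.5,
}


def point_lead(scores, model, baseline):
    """Absolute lead of `model` over `baseline`, in percentage points."""
    return round(scores[model] - scores[baseline], 1)


def relative_failure_reduction(scores, model, baseline):
    """Fraction by which `model` reduces the baseline's failure rate."""
    model_fail = 100.0 - scores[model]
    base_fail = 100.0 - scores[baseline]
    return round(1.0 - model_fail / base_fail, 3)


for baseline in ("Claude 4.6 Opus", "GPT-5.4"):
    lead = point_lead(SCORES, "Nemotron 3 Super", baseline)
    cut = relative_failure_reduction(SCORES, "Nemotron 3 Super", baseline)
    print(f"vs {baseline}: +{lead} points, {cut:.1%} fewer failures")
```

Seen this way, a lead of about four points corresponds to roughly a fifth fewer failed tasks, which matters more for agents that chain many steps than the raw gap suggests.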

Open Weights Accelerate Enterprise Adoption

Nemotron 3 Super ships fully open under NVIDIA’s permissive license, with weights, datasets, and training recipes available on Hugging Face. For enterprises, this eliminates vendor lock-in. Developers can deploy on their own infrastructure, customize the model for specific agent frameworks like LangChain or CrewAI, and avoid usage-based pricing or API rate limits. When data stays in-house and inference runs locally, compliance and cost concerns shrink.

Early adoption supports the model’s production readiness. Software development agent companies like CodeRabbit, Factory, and Greptile are already integrating Nemotron 3 Super, citing higher accuracy at lower cost compared to proprietary alternatives. CodeRabbit, for example, uses the 1M-token context to perform end-to-end code generation by loading entire repositories into context, something that would require chunking and retrieval with shorter-context models. This is not a research experiment. It’s a deployment pattern emerging in real products.

What This Means for Developers

The release of Nemotron 3 Super marks a turning point: the assumption that general-purpose frontier models are optimal for every use case no longer holds. Agentic AI workflows have different requirements from chat. They need efficiency for continuous operation, long context for state retention, and architectural optimizations for tool use and planning. A 120-billion-parameter model with the per-token compute of a 12-billion-parameter model is not a compromise; it’s a feature.

Developers building multi-agent systems should evaluate Nemotron 3 Super against their current models. If agent operating costs or latency are pain points, the 10x efficiency gain warrants testing. If vendor APIs create deployment friction, open weights remove that barrier. And if agentic performance matters more than general chat quality, the PinchBench results speak clearly. NVIDIA built this model for a specific job, and early evidence suggests it does that job better than models designed to do everything.