AI coding tools now write 41% of all code and save developers 30-60% of their time on routine tasks. Organizations are celebrating unprecedented productivity gains. But Google’s 2024 DORA report reveals a troubling paradox: while code output soared, delivery stability declined 7.2%. The math doesn’t add up, and developers are paying the price in verification debt, doubled code churn, and measurement frameworks that track the wrong things.

According to Stack Overflow’s 2025 survey, 84% of developers use AI tools, yet 96% don’t fully trust the output, per Sonar’s 2026 State of Code Survey. This creates what Amazon CTO Werner Vogels calls “verification debt”: generating code faster than teams can validate it. As AI approaches 65% of committed code by 2027, organizations need holistic measurement frameworks or risk accumulating technical debt disguised as productivity.

Speed Up, Stability Down

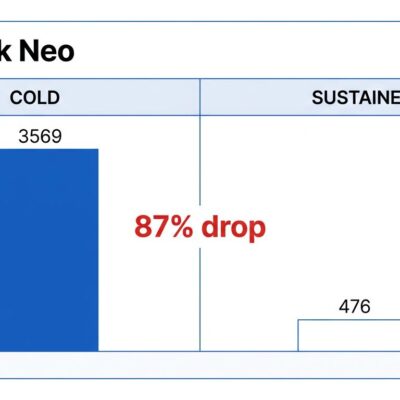

The numbers tell a story organizations don’t want to hear. While AI tools deliver 30-60% time savings and produce 41% of code, Google’s 2024 DORA report estimated that delivery stability decreases 7.2% and throughput declines 1.5% for every 25% increase in AI adoption. Code churn (the share of code rewritten or deleted within two weeks of being written) has doubled. The promise of unprecedented productivity is colliding with the reality of unprecedented instability.
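To make the churn definition concrete, here is a minimal sketch of how a two-week churn rate could be computed. The `LineRecord` shape and the sample dates are hypothetical, chosen only for illustration. Real tooling would derive this from version-control history.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# "Within two weeks", following the definition in the text.
CHURN_WINDOW = timedelta(days=14)


@dataclass
class LineRecord:
    """One added line of code (hypothetical record shape)."""
    added_on: date
    changed_on: Optional[date] = None  # when it was rewritten or deleted, if ever


def churn_rate(records: list[LineRecord]) -> float:
    """Fraction of added lines rewritten or deleted within the churn window."""
    if not records:
        return 0.0
    churned = sum(
        1
        for r in records
        if r.changed_on is not None and r.changed_on - r.added_on <= CHURN_WINDOW
    )
    return churned / len(records)


# Illustrative data only, not taken from any report.
records = [
    LineRecord(date(2025, 3, 1), date(2025, 3, 5)),   # changed after 4 days: churn
    LineRecord(date(2025, 3, 1), date(2025, 4, 20)),  # changed much later: not churn
    LineRecord(date(2025, 3, 2)),                     # never changed
    LineRecord(date(2025, 3, 3), date(2025, 3, 17)),  # changed on day 14: churn
]
print(churn_rate(records))  # 0.5
```

Tracking this number next to raw output is what exposes the gap: output alone cannot show how much of the new code survives.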

The hidden costs make the productivity gains even more questionable. Research shows first-year AI costs run 12% higher when accounting for the complete picture: 9% code review overhead, a 1.7x testing burden from increased defects, and 2x code churn requiring constant rewrites. Organizations celebrating 2x code output are overlooking how much of it never survives: once churn doubles too, the net gain shrinks and, at high enough churn, disappears.
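How much doubled churn eats into doubled output depends on where churn started. The sketch below assumes a deliberately simple model that is not from any of the cited reports: surviving output equals output multiplied by (1 - churn rate). The function names and baseline values are illustrative.

```python
def surviving_output(output: float, churn_rate: float) -> float:
    """Code that is still in place after the churn window (simplified model)."""
    return output * (1.0 - churn_rate)


def net_gain(baseline_churn: float,
             output_multiplier: float = 2.0,
             churn_multiplier: float = 2.0) -> float:
    """Ratio of surviving output after AI adoption to surviving output before."""
    if not 0.0 <= baseline_churn < 1.0:
        raise ValueError("baseline_churn must be in [0, 1)")
    before = surviving_output(1.0, baseline_churn)
    after_churn = min(1.0, baseline_churn * churn_multiplier)
    after = surviving_output(output_multiplier, after_churn)
    return after / before


# Hypothetical baseline churn rates, for illustration only.
for c in (0.05, 0.10, 0.20, 1 / 3):
    print(f"baseline churn {c:.0%}: surviving output x{net_gain(c):.2f}")
```

Under this model, 2x output with 2x churn breaks even only when baseline churn is one third. At lower baselines some gain survives, although the model ignores the review and testing overhead listed above, which pushes the real figure lower.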

The disconnect is simple: organizations measure speed (code output) but ignore stability (delivery quality). When teams optimize for individual velocity without tracking team-level stability, they cargo-cult their way to fragile systems wrapped in productivity theater.

The Verification Debt Crisis

Verification debt explains why the AI productivity paradox exists. Amazon CTO Werner Vogels coined the term to describe the growing gap between how fast we generate code and how fast we can validate it. The Sonar 2026 State of Code Survey quantifies the problem: 96% of developers don’t fully trust that AI-generated code is functionally correct, yet only 48% always verify it before committing.

The behavior is irrational but understandable. Of the developers who have tried AI tools, 72% use them daily despite trust issues. Review offers no relief: 38% report that reviewing AI code requires more effort than reviewing human code. As AI writes 42% of code today and heads toward 65% by 2027, the verification bottleneck is replacing the development bottleneck. Teams generate code faster than they can check it, accumulating risk with every commit.
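The bottleneck can be pictured as a queue that fills faster than it drains. This is a minimal sketch with made-up weekly figures. The function name and the line counts are assumptions for illustration, not measurements.

```python
def verification_backlog(generated_per_week: int,
                         verified_per_week: int,
                         weeks: int) -> list[int]:
    """Unverified lines outstanding at the end of each week."""
    backlog = 0
    history = []
    for _ in range(weeks):
        # New code arrives, review capacity drains what it can.
        backlog = max(0, backlog + generated_per_week - verified_per_week)
        history.append(backlog)
    return history


# Hypothetical team: 1,000 lines generated and 800 verified per week.
print(verification_backlog(1000, 800, 4))  # [200, 400, 600, 800]
```

As long as generation outpaces verification, the backlog grows linearly and never clears on its own. The only fixes are more verification capacity or less unverified output.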

The consequences are measurable. 88% of developers cite negative impacts from AI tools, including unreliable code that looks correct but isn’t (53%) and unnecessary duplicated code (40%). Security research shows 45% of AI-generated code contains vulnerabilities. Yes, 93% also report positive effects, such as improved documentation (57%) and better test coverage (53%), but better docs on unstable code are just polished technical debt.

Measuring Developer Productivity: The Wrong Metrics

Traditional DORA metrics (deployment frequency, lead time, change failure rate, and mean time to recovery) capture delivery throughput and stability, but they say nothing about code-level quality signals such as churn or duplication. In the AI era, these metrics are insufficient on their own. Organizations optimizing for deployment frequency while code churn doubles are measuring activity, not value. The result is metric gaming at the team level: higher output scores, lower stability, and the same leadership celebrating “AI productivity.”

New frameworks exist to solve this. DX Core 4, launched in January 2026, unifies DORA, SPACE, and DevEx into four dimensions: Speed, Effectiveness, Quality, and Impact. Built on data from 800+ engineering organizations and 40,000+ developers, it codifies what should be obvious: every speed metric needs a quality counterbalance. Each one-point improvement in developer experience saves 13 minutes per developer per week, a sustainable gain rather than a temporary speed boost.
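The counterbalance idea can be sketched as a simple check that never reports a speed metric without its paired quality metric. This is an illustration of the principle only, not the DX Core 4 scoring method. The `MetricPair` type, the tolerance, and the sample values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MetricPair:
    """A speed metric and the quality metric that counterbalances it."""
    name: str
    speed_change: float    # relative change, e.g. 0.40 means 40% faster
    quality_change: float  # relative change, negative means quality got worse


def assess(pair: MetricPair, tolerance: float = 0.02) -> str:
    """Classify a pair; small quality dips within the tolerance are ignored."""
    if pair.speed_change <= 0:
        return "no speed gain"
    if pair.quality_change < -tolerance:
        return "speed gain offset by quality loss"
    return "balanced gain"


# Hypothetical quarter-over-quarter numbers.
pairs = [
    MetricPair("PRs merged vs. change failure rate", 0.40, -0.07),
    MetricPair("lead time vs. two-week churn", 0.25, 0.00),
]
for pair in pairs:
    print(f"{pair.name}: {assess(pair)}")
```

The design choice that matters is structural: because speed and quality live in one record, a dashboard built on it cannot show one without the other.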

The SPACE framework (Satisfaction and well-being, Performance, Activity, Communication and collaboration, Efficiency and flow) provides another holistic approach. Developed by Nicole Forsgren, Margaret-Anne Storey, and colleagues from GitHub, the University of Victoria, and Microsoft Research, it discourages gaming of activity metrics by measuring across five dimensions. Teams using all five improve productivity 20-30% compared to those measuring activity alone. The philosophy shift is fundamental: from “how much code was written” to “how much value was delivered sustainably.”

The Framework Adoption Gap

Here’s the problem: these frameworks exist, but only 11% of organizations have holistic metrics in production. Most still measure what’s easy (commits, lines of code, tickets closed) instead of what matters (value delivered, stability maintained, quality preserved). Organizations have the tools to fix the measurement problem but aren’t using them.

Developer trust statistics illustrate the urgency. Stack Overflow’s 2025 survey shows 84% adoption, but distrust rose from 31% in 2024 to 46% in 2025. JetBrains’ 2025 Developer Ecosystem report confirms the pattern: 85% adoption with rising quality concerns. Developers use tools they don’t trust and generate code faster than they can verify it, creating systemic risk that compounds monthly.

The code quality impact is quantifiable. Code duplication is up 4x with AI. Copy-pasted code rose 48% in AI-assisted development. Short-term churn signals less maintainable design. These aren’t theoretical concerns. They are measurable signs of degrading codebase health, masked by activity metrics that show “increased productivity.”

The Reality Check: Balancing AI Productivity

AI productivity is real but incomplete. Speed without stability is technical debt in disguise. The emperor has no clothes: 2x output with 2x churn is not a 2x gain, and at high enough churn it is no gain at all. Organizations cargo-culting AI without verification infrastructure are accumulating risk faster than they’re shipping features.

The solution isn’t rejecting AI tools. It’s adopting measurement frameworks that balance speed with quality. DX Core 4 and SPACE provide the blueprints. Verification infrastructure prevents debt accumulation. Holistic metrics reveal true productivity, not just activity theater.

96% of developers don’t fully trust AI code, but only 48% always verify it. This irrational behavior is unsustainable. As AI approaches 65% of code by 2027, the industry faces a choice: adopt frameworks that measure what matters, or watch delivery stability decline further while celebrating productivity gains that don’t exist.

The frameworks are here. The data is clear. The question isn’t “what to measure.” It’s “will you measure what matters?”