Data published today shows 90% of Claude-generated code goes to GitHub repositories with fewer than 2 stars. Out of 50 billion lines of AI-generated code created in just 13 months, only 5 billion landed in repositories with any meaningful visibility. The stat hit Hacker News this morning with 137 comments and immediate controversy—some calling it proof that AI code is garbage, others arguing stars don’t measure quality.

But the debate misses the point. The real story isn’t the 90% statistic. It’s that developers think AI makes them faster when a controlled study shows they’re measurably slower, and they can’t tell the difference.

The Perception Gap Is the Real Crisis

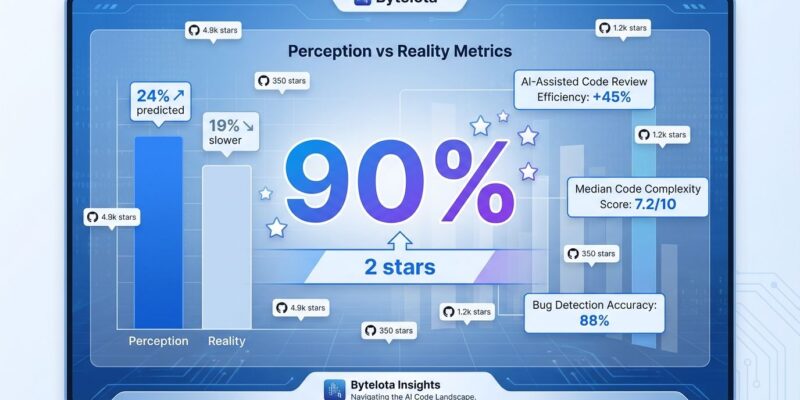

A randomized controlled trial by METR tested experienced open-source developers working on their own repositories. Before the study, developers predicted AI would make them 24% faster. The result? They were actually 19% slower with AI tools than without them. More troubling: after experiencing this slowdown firsthand, participants still believed AI had sped them up by 20%.

That’s a 39-percentage-point gap between perception (20% faster) and reality (19% slower), and it explains a great deal about the current AI coding landscape. Developers feel productive because AI generates polished output quickly. But workflow disruption (evaluating suggestions, correcting “almost-right” code, debugging subtle errors) eats the gains. The code looks good, reviews pass, tests pass. The problems show up later.
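The size of the gap follows directly from the three figures quoted above. Here is a minimal sketch of the arithmetic, treating each figure as a signed change in speed; the variable and function names are illustrative and do not come from the METR study.

```python
# Figures as quoted in the text, as signed speed changes in percent
# (positive = faster with AI, negative = slower with AI).
predicted_before = 24   # developers' forecast before the study
measured = -19          # measured result during the study
believed_after = 20     # developers' own estimate after the study


def gap_in_points(perceived: float, actual: float) -> float:
    """Distance between a perceived and an actual change, in percentage points."""
    return perceived - actual


forecast_gap = gap_in_points(predicted_before, measured)
hindsight_gap = gap_in_points(believed_after, measured)

print(f"forecast vs. measured:  {forecast_gap} points")
print(f"hindsight vs. measured: {hindsight_gap} points")
```

The hindsight gap is the one that matters here: it compares what developers believed after doing the work with what was actually measured.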

This is the Dunning-Kruger effect at industrial scale. When tools give you confidence and polished output without requiring deep understanding, you can’t tell you’re producing worse outcomes. You can’t fix what you can’t see.

Quality Metrics Confirm the Decline

GitClear analyzed 211 million lines of code from repositories at Google, Microsoft, and Meta between 2020 and 2024. The timing coincides with AI coding tool adoption, and the quality trends are stark.

Refactored code, the healthy maintenance that keeps codebases sustainable, dropped from 25% of changed lines in 2021 to less than 10% in 2024. Meanwhile, copy-pasted code rose from 8.3% to 12.3%. In 2024, copy-pasted lines surpassed refactored lines for the first time. Code churn, meaning premature commits requiring revision within two weeks, jumped from 3.1% to 5.7%.
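To make the churn definition concrete, here is a rough sketch of how a team might compute it for its own repository. It assumes you already have, for each changed line, the date it was committed and the date it was next revised, if ever. The `LineChange` record and `churn_rate` function are hypothetical, and this is not GitClear’s methodology.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# "Within two weeks", following the definition in the text.
CHURN_WINDOW = timedelta(days=14)


@dataclass
class LineChange:
    """One changed line (hypothetical record, not a GitClear data format)."""
    committed: date
    revised: Optional[date]  # None if the line was never revised or removed


def churn_rate(changes: list[LineChange]) -> float:
    """Share of changed lines that were revised within the churn window."""
    if not changes:
        return 0.0
    churned = sum(
        1
        for c in changes
        if c.revised is not None and c.revised - c.committed <= CHURN_WINDOW
    )
    return churned / len(changes)


# Made-up sample data, for illustration only.
sample = [
    LineChange(date(2024, 3, 1), date(2024, 3, 5)),   # revised after 4 days: churn
    LineChange(date(2024, 3, 1), date(2024, 6, 1)),   # revised months later: not churn
    LineChange(date(2024, 3, 2), None),               # never revised
    LineChange(date(2024, 3, 3), None),               # never revised
]
rate = churn_rate(sample)
print(f"churn rate: {rate:.1%}")
```

Getting the per-line dates out of version control is the hard part and is deliberately left out of this sketch.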

These aren’t subjective assessments. Controlled studies show AI-generated code contains 1.7 times more bugs than human-written code. Maintainability errors appear 1.64 times more often. By year two, maintenance costs for AI-generated codebases run at four times the level of traditional development.

AI tools optimize for speed, not sustainability. They generate working code, but not maintainable architecture. The difference matters when you’re still debugging it eighteen months later.

The 90% Stat Needs Context

Here’s the uncomfortable context: 98% of all GitHub repositories—human or AI—have fewer than 2 stars. GitHub hosts over 420 million public repositories. Most code has no audience, no users, no stars. Personal tools, abandoned experiments, private prototypes made public. The Hacker News critics are right—stars don’t measure quality.

But they’re wrong that this makes the 90% statistic meaningless. The issue isn’t the percentage. It’s the volume.

Claude has generated 50 billion lines of code in 13 months. That means 45 billion lines went to repositories with fewer than 2 stars, where there is little sign that anyone else uses, maintains, or values the code. Even if that matches the human baseline percentage-wise, the sheer scale creates new problems. That’s not just experimentation or personal tools. It’s an unprecedented amount of potentially unmaintainable code entering the ecosystem at speed.

And developers creating it can’t tell whether it’s good or bad. They feel faster. The metrics say otherwise.

The Technical Debt Compounds

Seventy-five percent of technology leaders are projected to face moderate or severe technical debt problems by 2026 because of AI-accelerated coding practices. Organizations that rushed into AI-assisted development without governance now face what researchers call “crisis-level accumulated technical debt” in 2026-2027.

The economics tell the story. First-year costs with AI coding tools run 12% higher than traditional development despite vendor claims of 50% faster delivery. The overhead comes from code review burden, testing gaps, and increased churn. By year two, as technical debt compounds, costs hit four times traditional levels.

This is how compound interest works against you. Short-term wins—ship fast, move on—create long-term maintenance nightmares. AI encourages immediate output over sustainable architecture. Code that works but isn’t well-structured. Future teams inherit the mess.

Use AI Strategically, Not Reflexively

The problem isn’t AI coding tools. It’s using them reflexively instead of strategically. There are good use cases: boilerplate and scaffolding, exploring unfamiliar APIs, rapid prototyping, handling well-understood patterns. These add value with limited risk.

Bad use cases: complex business logic, security-critical code, architectural decisions, anything you don’t understand well enough to review critically. These multiply risk.

The key difference is whether you can validate correctness without the AI’s help. If you’re relying on AI to tell you whether AI-generated code is correct, you’re accumulating debt.

Measure outcomes, not output. Track your own metrics: bug rates, code churn, review cycle time, production incidents. Compare periods with and without heavy AI use. Most developers haven’t done this analysis. They assume AI helps because it feels productive.
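One way to run that comparison is to normalize each metric by the amount of work shipped, then look at the relative change between two periods. The sketch below assumes you can pull these counts from your issue tracker and version control. The `PeriodMetrics` fields, the function names, and the sample numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PeriodMetrics:
    """Raw counts for one period (hypothetical fields, chosen for illustration)."""
    merged_changes: int
    bugs_reported: int
    churned_lines: int
    changed_lines: int
    review_hours: float
    incidents: int

    def rates(self) -> dict[str, float]:
        """Normalize counts so periods of different size can be compared."""
        return {
            "bugs_per_change": self.bugs_reported / self.merged_changes,
            "churn_share": self.churned_lines / self.changed_lines,
            "review_hours_per_change": self.review_hours / self.merged_changes,
            "incidents_per_change": self.incidents / self.merged_changes,
        }


def relative_change(baseline: PeriodMetrics, candidate: PeriodMetrics) -> dict[str, float]:
    """Relative change of each rate versus the baseline (0.5 means 50% higher)."""
    base = baseline.rates()
    cand = candidate.rates()
    return {
        name: (cand[name] - base[name]) / base[name] if base[name] else float("nan")
        for name in base
    }


# Made-up numbers, for illustration only.
low_ai_period = PeriodMetrics(
    merged_changes=100, bugs_reported=10, churned_lines=300,
    changed_lines=10_000, review_hours=150.0, incidents=2,
)
heavy_ai_period = PeriodMetrics(
    merged_changes=120, bugs_reported=18, churned_lines=720,
    changed_lines=12_000, review_hours=240.0, incidents=3,
)

deltas = relative_change(low_ai_period, heavy_ai_period)
for name, delta in deltas.items():
    print(f"{name}: {delta:+.0%}")
```

A comparison like this is observational, not a controlled trial: team changes, deadlines, and project phase can all move these numbers, so treat the result as a prompt for investigation, not a verdict.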

The METR study found that feeling was wrong for its participants. The GitClear data shows quality declining industry-wide. The 90% statistic, context included, shows unprecedented volumes of low-visibility code being generated.

The developers who succeed with AI won’t be those who generate the most code. They’ll be those who generate the most value, which sometimes means writing less, refactoring more, and knowing when to trust their tools and when to trust their own judgment.